10 Best AnythingLLM Alternatives for Enterprise Document AI (2026)

Compare 10 AnythingLLM alternatives for enterprise document AI. Covers PrivateGPT, Danswer, Dify, OpenWebUI, and self-hosted options with compliance features.

AnythingLLM alternatives matter when you hit the platform's limits: collaboration features that don't scale, compute ceilings that return 502 errors under heavy embedding loads, and cloud pricing that stays opaque. The tool works for solo developers chatting with PDFs. Enterprise teams need more.

This guide covers 10 document AI platforms that handle what AnythingLLM can't. We evaluated each on connector ecosystem, self-hosting options, multi-user access controls, and production stability.

What Makes AnythingLLM Work (And Where It Falls Short)

AnythingLLM is a desktop and self-hosted application for chatting with documents using local or cloud LLMs. Upload PDFs, Word docs, or markdown files. The system embeds them into a vector database and retrieves relevant chunks when you ask questions.
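The embed-then-retrieve loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not AnythingLLM's implementation: `embed` uses plain word counts as a stand-in for a neural embedding model, and `chunk` and `retrieve` are hypothetical helper names.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def chunk(text: str, size: int = 50) -> list[str]:
    """Split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]
```

In a real deployment the chunk vectors would be computed once at upload time and stored in the vector database, rather than recomputed per question as they are here.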

What it does well:

- Single-user document chat with local models (Ollama, LM Studio)

- Agent capabilities with custom tools

- Multi-modal support for images and audio

- Free for self-hosted deployments

Where enterprises struggle:

- Cloud compute limits throttle heavy embedding jobs

- Limited collaboration features compared to team-focused platforms

- Requires server management skills for self-hosting

- No native connectors to enterprise tools (Slack, Salesforce, Google Drive)

- Documentation lacks pricing clarity for cloud tiers

If you need private document chat for personal use, AnythingLLM handles it. For team deployments with compliance requirements and integration needs, consider these alternatives.

Quick Comparison Table

| Platform | Best For | Self-Hosted | Enterprise Connectors | License |
|---|---|---|---|---|
| Prem AI | Enterprise fine-tuning | Yes | API-based | Commercial |
| PrivateGPT | Air-gapped deployments | Yes | No | Apache 2.0 |
| Danswer/Onyx | Enterprise search | Yes | 40+ native | MIT |
| Dify | Visual workflow building | Yes | API-based | Apache 2.0 |
| OpenWebUI | Ollama users | Yes | Web search | MIT |
| LibreChat | Multi-provider teams | Yes | OAuth, search plugins | MIT |
| Khoj | Personal knowledge mgmt | Yes | Notion, Obsidian | AGPL |
| Quivr | Custom RAG pipelines | Yes | Custom parsers | Apache 2.0 |
| Flowise | Low-code builders | Yes | 100+ nodes | Apache 2.0 |
| LocalGPT | Offline-first privacy | Yes | No | Apache 2.0 |

1. Prem AI

Best AnythingLLM alternative for enterprise fine-tuning and compliance

Prem AI addresses what other document AI platforms miss: custom model training with enterprise compliance. While alternatives like PrivateGPT and LocalGPT provide inference, Prem AI lets you fine-tune models on your documents to achieve better retrieval and generation quality.

Why fine-tuning matters for document AI:

RAG retrieval quality depends on embedding models understanding your domain. Generic embeddings miss industry terminology, acronyms, and context. Fine-tuning on your actual documents produces embeddings that retrieve more relevant chunks.

Similarly, generation quality improves when the LLM understands your document style, terminology, and expected output formats. A fine-tuned model answering questions about legal contracts can outperform a generic model even when retrieval is perfect.

Platform capabilities:

- Dataset automation: Upload raw documents, and the platform handles parsing, chunking, and training dataset generation automatically.

- Fine-tuning: Train custom embedding and generation models. 30+ base models including Llama, Mistral, Qwen. LoRA and full fine-tuning options.

- Evaluation: Test retrieval quality and answer accuracy before deployment. LLM-as-a-judge scoring.

- Deployment: Self-hosted, AWS VPC, or air-gapped options.

Compliance certifications:

- SOC 2 Type II

- HIPAA compliant with BAA

- GDPR compliant

- Swiss jurisdiction under FADP

How it compares to AnythingLLM:

| Feature | AnythingLLM | Prem AI |
|---|---|---|
| Document chat | Yes | Yes |
| Fine-tuning | No | Yes (30+ models) |
| Custom embeddings | No | Yes |
| Compliance certs | No | SOC 2, HIPAA, GDPR |
| Multi-user RBAC | Limited | Yes |
| Enterprise support | Community | Included |

When to choose Prem AI:

- Teams needing custom models trained on proprietary documents

- Regulated industries requiring compliance certifications

- Organizations wanting fine-tuned retrieval without ML infrastructure

- Enterprises needing production support and SLAs

2. PrivateGPT

Best AnythingLLM alternative for air-gapped environments

PrivateGPT runs entirely offline. No API calls leave your machine. For organizations handling classified documents or operating in regulated industries, this architecture eliminates data leakage concerns at the network level.

Technical architecture:

- Built on LlamaIndex for document parsing and retrieval

- Supports PDF, DOCX, TXT, CSV, and markdown

- Runs with Ollama, llama.cpp, or HuggingFace models

- Uses Qdrant or ChromaDB for vector storage

Enterprise considerations: The trade-off for complete privacy is setup complexity. PrivateGPT requires manual model configuration and doesn't include pre-built integrations. Teams comfortable with Python deployments will find the learning curve manageable. Those expecting plug-and-play functionality should look elsewhere.

When to choose PrivateGPT:

- Air-gapped or GDPR-compliant deployments requiring zero external calls

- Defense, healthcare, or legal environments with strict data residency

- Teams with Python expertise who want full control over the RAG pipeline

3. Danswer (Onyx)

Best AnythingLLM alternative for enterprise workplace search

Danswer (since rebranded as Onyx) connects to where your documents already live: Slack, Google Drive, Confluence, Notion, Salesforce, and more, with 40+ native connectors in total. Instead of uploading files manually, Danswer indexes your existing knowledge base and makes it searchable with natural language.

Connector ecosystem:

- Collaboration: Slack, Microsoft Teams, Discord

- Storage: Google Drive, OneDrive, SharePoint, Dropbox

- Documentation: Confluence, Notion, Gitbook, ReadMe

- CRM: Salesforce, HubSpot, Zendesk

- Code: GitHub, GitLab, Bitbucket

Architecture:

- PostgreSQL for relational data, with Vespa as the vector and keyword index

- Supports OpenAI, Anthropic, Azure, or self-hosted models

- Role-based access control synced from source systems

- MIT license allows commercial use without restrictions
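Access control inheritance generally means filtering candidate documents by the permissions recorded at the source before anything reaches the model. A minimal sketch of that idea, assuming a hypothetical `Doc` record that carries the groups synced from the source system (this is not Onyx's actual data model):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    """Hypothetical indexed document with permissions copied from its source."""
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


def visible_docs(docs: list[Doc], user_groups: set[str]) -> list[Doc]:
    """Keep only documents that share at least one group with the user."""
    return [d for d in docs if d.allowed_groups & user_groups]
```

Filtering before retrieval, rather than after generation, keeps restricted text out of the prompt entirely.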

When to choose Danswer:

- Teams with documents scattered across SaaS tools

- Organizations wanting unified search without migrating content

- Enterprises requiring access control inheritance from existing systems

This is the strongest option if your bottleneck is connector coverage rather than raw document chat.

4. Dify

Best AnythingLLM alternative for visual workflow building

Dify provides a drag-and-drop interface for building RAG applications without writing orchestration code. Where AnythingLLM offers document chat, Dify offers document chat plus workflow automation, agent building, and API deployment.

Platform capabilities:

- Visual canvas for designing RAG pipelines

- Built-in dataset management with chunking controls

- Agent mode with tool calling and web search

- One-click API deployment for production

- Multi-tenant workspaces with RBAC

RAG features:

- Hybrid search (semantic + keyword)

- Reranking with configurable models

- Citation and source attribution

- Support for 10+ file formats
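One common way to combine semantic and keyword results is reciprocal rank fusion (RRF). The sketch below shows the idea in isolation. Treat it as an illustration only: the source does not say which fusion method Dify uses, and the constant `k = 60` is a conventional default, not a Dify setting.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one.

    Each document scores 1 / (k + rank) per list it appears in;
    documents ranked highly by multiple retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score, breaking ties by ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


semantic_hits = ["a", "b", "c"]   # e.g. from vector similarity
keyword_hits = ["b", "d", "a"]    # e.g. from BM25 or full-text search
fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
```

A reranking model, where configured, would then reorder the top of `fused` using the full query and chunk text.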

Deployment options: Dify offers both cloud and self-hosted deployment. The self-hosted version runs via Docker Compose and supports any OpenAI-compatible model endpoint.

When to choose Dify:

- Teams wanting visual RAG pipeline design

- Organizations building customer-facing document chat products

- Developers needing API endpoints without custom backend code

5. OpenWebUI

Best for Ollama users

OpenWebUI started as a frontend for Ollama and expanded into a full document AI platform. If you're already running local models with Ollama, OpenWebUI adds the RAG layer without switching stacks.

RAG capabilities:

- 9 vector database options: ChromaDB, PGVector, Qdrant, Milvus, Elasticsearch, OpenSearch, Pinecone, S3Vector, Oracle 23ai

- Hybrid search with reranking

- Multiple content extraction engines: Tika, Docling, Mistral OCR

- Web search integration with 15+ providers

What sets it apart:

- Native Ollama integration with model management

- Document and web content RAG in a single interface

- YouTube video transcription and search

- Active development with 60K+ GitHub stars

Limitations: The team acknowledges RAG implementation constraints and plans to rebuild the framework for more modularity. Current chunking and retrieval settings offer less granularity than dedicated RAG platforms.

When to choose OpenWebUI:

- Teams already using Ollama for local inference

- Developers wanting quick setup over deep customization

- Organizations prioritizing active community support

6. LibreChat

Best AnythingLLM alternative for multi-provider enterprise deployments

LibreChat serves as a unified interface across AI providers while adding enterprise-grade authentication and RAG capabilities. Daimler Truck deployed it company-wide, which suggests it holds up in production at scale.

Enterprise features:

- Authentication: Discord, GitHub OAuth, Azure AD, AWS Cognito

- Multi-provider: OpenAI, Anthropic, AWS Bedrock, Azure, Google Vertex

- Role-based access with team workspaces

- Conversation search and export

RAG implementation:

- File uploads with automatic embedding

- Custom vector store configuration

- Citation support for grounded responses

- Plugin architecture for web search and tools

When to choose LibreChat:

- Organizations using multiple AI providers

- Teams needing enterprise SSO integration

- Companies requiring conversation audit trails

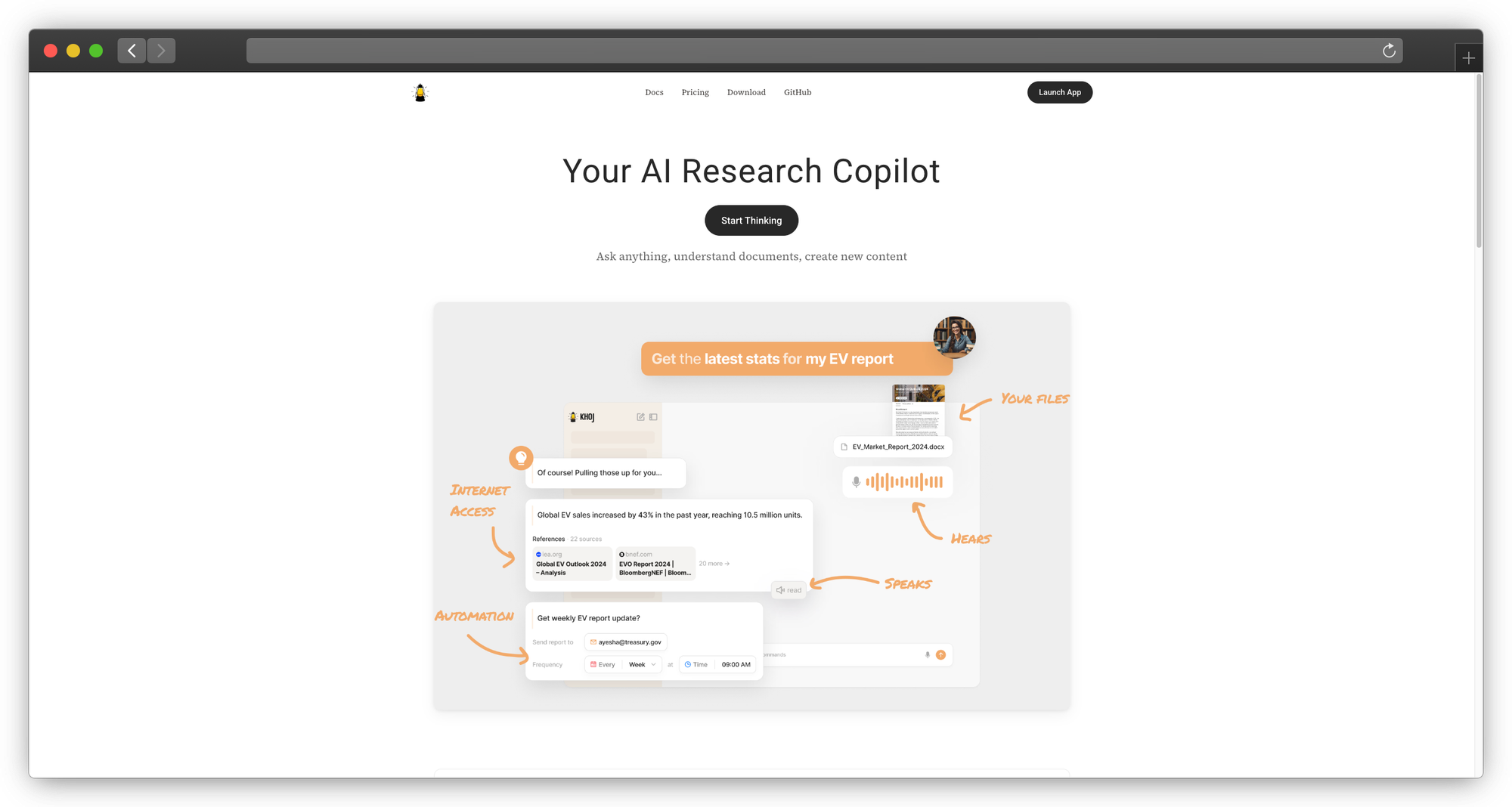

7. Khoj

Best for personal knowledge management

Khoj takes a different approach: a personal AI assistant with deep integrations into note-taking tools. If your team uses Obsidian, Notion, or Logseq, Khoj indexes your existing notes and makes them searchable.

Integration focus:

- Obsidian plugin for local vault indexing

- Notion connector for team wikis

- GitHub for code and documentation

- Logseq for personal knowledge bases

Unique features:

- Natural language search across all connected sources

- Automatic context from your notes in conversations

- Desktop and mobile apps for anywhere access

- Self-hostable with local models

Limitations: Khoj optimizes for individual productivity rather than team collaboration. Access controls and multi-user features are limited compared to enterprise-focused alternatives.

When to choose Khoj:

- Teams using Obsidian or Notion heavily

- Individuals wanting AI over personal knowledge bases

- Developers building personal AI assistants

Khoj fits a different category: a personal AI assistant rather than a document AI platform.

8. Quivr

Best for custom RAG pipelines

Quivr (YC W24) provides opinionated RAG that prioritizes speed and flexibility. The framework works with any LLM and any file format, letting teams customize retrieval without rebuilding core infrastructure.

Technical differentiators:

- Megaparse integration for file ingestion

- Custom parser support for proprietary formats

- Internet search as retrieval source

- Works with OpenAI, Anthropic, Mistral, Gemma, and local models

Enterprise deployment: Deploy to any cloud provider. No data leaves your datacenter. The system builds unified search across documents, tools, and databases.

Use cases from production:

- Email drafting without contextual input

- Extracting actionable data from large databases

- Summarizing extensive document sets

When to choose Quivr:

- Teams needing custom file parsers

- Organizations wanting YC-backed support and roadmap

- Developers building RAG applications requiring internet search alongside documents

9. Flowise

Best for low-code builders

Flowise brings visual programming to RAG and agent development. Drag document loaders, embedding models, vector stores, and LLMs onto a canvas. Connect them. Deploy as an API. No orchestration code required.

Visual builder capabilities:

- 100+ nodes for document loaders, embeddings, retrievers, and models

- Document sources: PDF, Word, Google Drive, Playwright web scraping, Firecrawl

- Vector stores: Pinecone, Qdrant, Weaviate, ChromaDB, and others

- Built-in chunking strategies and retrieval optimization

Production features:

- Agentic RAG with iterative refinement

- Human-in-the-loop checkpoints for quality control

- API deployment for integration into existing systems

- Fully offline operation with local models

When to choose Flowise:

- Teams without dedicated ML engineering

- Rapid prototyping of document AI applications

- Organizations wanting visual debugging of RAG pipelines

Flowise trades coding flexibility for accessibility. If your team includes non-developers who need to build and modify RAG systems, this is the most practical choice.

10. LocalGPT

Best for offline-first privacy

LocalGPT 2.0 runs completely offline with no external dependencies. Unlike other platforms built on LangChain or LlamaIndex, LocalGPT maintains its own retrieval stack for full control over data handling.

Technical architecture:

- Hybrid search: semantic similarity + keyword matching + Late Chunking

- Document formats: PDF, DOCX, TXT, markdown

- Smart routing between RAG and direct LLM responses

- Query decomposition for complex questions

- Semantic caching with TTL for faster repeated queries
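The caching item above can be illustrated with a small time-to-live cache. This is a simplified sketch, not LocalGPT's code: a true semantic cache would match queries by embedding similarity, whereas the hypothetical `TTLCache` below only matches queries that are identical after case and whitespace normalization.

```python
import time
from typing import Callable, Optional


class TTLCache:
    """Answer cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(query: str) -> str:
        # Normalize case and whitespace; a semantic cache would embed the query instead.
        return " ".join(query.lower().split())

    def put(self, query: str, answer: str) -> None:
        self._store[self._key(query)] = (self.clock() + self.ttl, answer)

    def get(self, query: str) -> Optional[str]:
        key = self._key(query)
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, answer = entry
        if self.clock() >= expires_at:
            del self._store[key]  # evict stale entry
            return None
        return answer
```

The TTL bounds how long a cached answer can outlive changes to the underlying documents, which is the main risk of caching RAG responses.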

Advanced features:

- Contextual retrieval preserves document context around chunks

- Document-level summaries for overview queries

- Source attribution for all answers

- Batch processing for multiple documents

Hardware support: GPU, CPU, Intel Gaudi HPU, and Apple MPS. The platform optimizes for whatever hardware you have.

When to choose LocalGPT:

- Organizations requiring zero external dependencies

- Teams wanting hybrid search without third-party frameworks

- Developers who need complete control over the retrieval pipeline

Decision Framework: Choosing the Right AnythingLLM Alternative

Start with your primary constraint:

| If You Need... | Choose | Why |
|---|---|---|
| Enterprise connectors | Danswer/Onyx | 40+ native integrations with access control sync |
| Visual workflow building | Dify or Flowise | No-code RAG pipeline design |
| Offline operation | PrivateGPT or LocalGPT | Zero network dependencies |
| Ollama integration | OpenWebUI | Native model management |
| Multi-provider support | LibreChat | Unified interface across OpenAI, Anthropic, Azure |
| Custom RAG pipelines | Quivr | YC-backed with flexible architecture |
| Personal knowledge | Khoj | Individual focus, not team collaboration |
| Fine-tuned models + compliance | Prem AI | Custom training with SOC 2, HIPAA, GDPR |

For enterprise deployments specifically:

- Connector-heavy environments: Danswer indexes existing SaaS tools without migration

- Compliance-first: Prem AI for certifications, PrivateGPT/LocalGPT for air-gapped

- Multi-team access: LibreChat or Dify with RBAC and SSO

- Rapid iteration: Flowise for non-developers building RAG prototypes

- Custom model quality: Prem AI for fine-tuning on domain documents

Building Production Document AI

Most AnythingLLM alternatives solve document chat. Production deployments need more:

Evaluation and testing: Before deploying any RAG system, establish evaluation frameworks to measure retrieval quality, answer accuracy, and latency. Without metrics, you can't improve.
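Retrieval quality is usually the easiest of these to quantify. A minimal sketch of two standard metrics, recall@k and reciprocal rank, assuming you have a small labeled set mapping each test question to the IDs of the chunks that should be retrieved (the function names are illustrative):

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0
```

Averaging `reciprocal_rank` over all test questions gives mean reciprocal rank (MRR), a single number you can track across chunking or embedding changes.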

Model selection: Your choice of embedding and generation models affects retrieval quality directly. Small models can outperform larger ones on domain-specific retrieval when fine-tuned on representative data.

Observability: Production RAG systems need observability tooling to trace queries through retrieval and generation. Without visibility into failures, debugging becomes guesswork.
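At its simplest, tracing a query means recording how long each stage took and whether it failed. The sketch below is a hand-rolled illustration with a hypothetical `Trace` class; production systems would more likely export spans to a dedicated tracing backend.

```python
import time
from contextlib import contextmanager
from typing import Iterator


class Trace:
    """Collects (stage name, duration in seconds, ok flag) for one query."""

    def __init__(self) -> None:
        self.spans: list[tuple[str, float, bool]] = []

    @contextmanager
    def span(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        ok = True
        try:
            yield
        except Exception:
            ok = False  # record the failure, then let it propagate
            raise
        finally:
            self.spans.append((name, time.perf_counter() - start, ok))


trace = Trace()
with trace.span("retrieve"):
    chunks = ["chunk one", "chunk two"]      # placeholder for a vector search call
with trace.span("generate"):
    answer = f"Answer based on {len(chunks)} chunks"  # placeholder for an LLM call
```

Logging `trace.spans` alongside the query and the retrieved chunk IDs is often enough to tell a retrieval failure from a generation failure.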

Memory and context: Document retrieval is step one. Memory systems that maintain conversation context across sessions improve user experience significantly.

Security considerations: Enterprise AI security extends beyond data privacy. Prompt injection, model extraction, and output manipulation are real attack vectors in RAG systems.

Conclusion

AnythingLLM works for individual developers exploring document chat. The alternatives here serve different needs:

- Danswer for enterprises with documents in SaaS tools

- Dify and Flowise for visual RAG pipeline building

- PrivateGPT and LocalGPT for air-gapped privacy requirements

- OpenWebUI for Ollama-native deployments

- LibreChat for multi-provider enterprise environments

- Quivr for custom RAG architectures

- Khoj for personal knowledge management

- Prem AI for fine-tuned models with enterprise compliance

The right choice depends on your constraints: connector coverage, deployment requirements, team technical capacity, compliance needs, and whether generic models are sufficient or fine-tuning is required.

All open-source options listed here support self-hosting. For teams needing managed infrastructure with fine-tuning capabilities, dataset automation, and compliance certifications, Prem AI provides the enterprise layer that open-source tools lack.

What to Read Next

If you're evaluating RAG architectures:

- RAG vs Long-Context LLMs - When retrieval beats extended context

- LangChain Alternatives - 33 options for building without LangChain

If you're deploying self-hosted:

- Private LLM Deployment Guide - Infrastructure decisions for on-premise AI

- Self-Hosted LLM Setup - Hardware, tools, and cost breakdown

If you're building AI agents:

- Chatbots vs AI Agents - Understanding the architectural differences

- Agentic Frameworks - LangGraph, CrewAI, and alternatives