15 Best AI Agent Frameworks for Enterprise: Open-Source to Managed (2026)

TL;DR: This guide ranks 15 production-ready AI agent frameworks across orchestration, observability, and managed platforms. Top picks: LangGraph for complex stateful workflows, CrewAI for role-based teams, OpenAI Agents SDK for OpenAI-native apps. Each framework is evaluated on multi-agent support, state management, human-in-the-loop, and enterprise readiness.

AI agent frameworks let you build systems that reason, plan, use tools, and take actions autonomously. But choosing the wrong framework means rewriting your architecture when you hit production limits: no state persistence, no observability, no human approval workflows.

The 2026 landscape includes 50+ frameworks. Most won't survive contact with enterprise requirements. This guide ranks 15 AI agent frameworks that matter for production deployments, categorized by what they actually do:

- Orchestration frameworks (10): Build and run agents

- Observability platforms (3): Monitor and debug agents

- Managed platforms (2): Full-stack agent infrastructure

We evaluated each on multi-agent support, state management, human-in-the-loop capabilities, observability, and enterprise deployment options.

Quick Comparison: Best AI Agent Frameworks

| Rank | Framework | Category | Best For | License | Learning Curve |
|---|---|---|---|---|---|
| 1 | LangGraph | Orchestration | Complex stateful workflows | MIT | Medium |
| 2 | CrewAI | Orchestration | Role-based multi-agent teams | MIT | Low |
| 3 | OpenAI Agents SDK | Orchestration | OpenAI-native production apps | MIT | Low |
| 4 | AutoGen | Orchestration | Conversational multi-agent | MIT | Medium |
| 5 | LlamaIndex | Orchestration | Data/RAG-centric agents | MIT | Medium |
| 6 | Pydantic AI | Orchestration | Type-safe structured outputs | MIT | Low |
| 7 | Semantic Kernel | Orchestration | Enterprise .NET integration | MIT | Medium |
| 8 | Smolagents | Orchestration | Minimalist code execution | Apache 2.0 | Low |
| 9 | Agno | Orchestration | High-performance runtime | MIT | Medium |
| 10 | Swarms | Orchestration | Large-scale orchestration | MIT | High |
| 11 | LangSmith | Observability | LangChain ecosystem | Proprietary | Low |
| 12 | Langfuse | Observability | Open-source monitoring | MIT | Low |
| 13 | AgentOps | Observability | Agent-specific tracing | Proprietary | Low |
| 14 | Amazon Bedrock Agents | Managed | AWS-native deployment | Proprietary | Medium |
| 15 | Vertex AI Agent Builder | Managed | Google Cloud integration | Proprietary | Medium |

Part 1: Orchestration Frameworks

Most teams start with orchestration when choosing an AI agent framework. These frameworks are what you build and run agents with: they handle the core loop of reasoning, tool selection, execution, and state management.

#1: LangGraph

Best for complex stateful workflows.

LangGraph extends LangChain with graph-based orchestration. Each agent step is a node. Edges control data flow and transitions. This architecture handles complex branching, error recovery, and long-running operations better than linear chains.

Key features:

- Graph-based workflow definition

- Built-in state persistence and checkpointing

- Human-in-the-loop interrupts at any node

- Streaming support for real-time outputs

- LangSmith integration for observability

When to use: Multi-step reasoning, workflows with conditional branching, agents that need to pause and resume, production systems requiring state durability.

Limitations: Steeper learning curve than simpler frameworks. Overkill for single-turn agents.

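```python
from typing import TypedDict

from langgraph.graph import StateGraph, END


class AgentState(TypedDict):
    messages: list
    next_action: str


def reasoning_node(state: AgentState) -> dict:
    # Agent reasoning logic; return only the state keys to update
    return {"next_action": "execute_tool"}


def tool_node(state: AgentState) -> dict:
    # Tool execution
    return {"next_action": "respond"}


# Build graph
workflow = StateGraph(AgentState)
workflow.add_node("reason", reasoning_node)
workflow.add_node("execute", tool_node)
workflow.set_entry_point("reason")  # the graph needs an entry point to compile
workflow.add_edge("reason", "execute")
workflow.add_edge("execute", END)

# Compile the graph into a runnable app and invoke it with an initial state
app = workflow.compile()
result = app.invoke({"messages": [], "next_action": ""})
```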
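To make the ideas of nodes, edges, checkpointing, and interrupts more concrete, here is a minimal plain-Python sketch. It is not how LangGraph is implemented; `run_graph`, `NODES`, `pause_before`, and the `checkpoints` list are hypothetical names chosen for illustration, and a real system would write checkpoints to durable storage rather than a list.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]


def reason(state: State) -> State:
    # Hypothetical reasoning step: decide which node runs next.
    return {**state, "next": "execute"}


def execute(state: State) -> State:
    # Hypothetical tool step: record a result and end the run.
    return {**state, "result": "tool output", "next": None}


NODES: Dict[str, Node] = {"reason": reason, "execute": execute}


def run_graph(state: State, start: str, checkpoints: list,
              pause_before: Optional[str] = None) -> State:
    """Run nodes until one sets "next" to None, saving a checkpoint per step."""
    current: Optional[str] = start
    while current is not None:
        if current == pause_before:
            # Interrupt: remember where to resume and hand control back.
            return {**state, "paused_at": current}
        state = NODES[current](state)
        checkpoints.append((current, dict(state)))  # snapshot after each node
        current = state["next"]
    return state


checkpoints: list = []
paused = run_graph({"messages": []}, "reason", checkpoints, pause_before="execute")
# Resume from the paused node, for example after a human approves the tool call.
final = run_graph(paused, paused["paused_at"], checkpoints)
```

The second call picks up exactly where the first stopped, which is the property that matters for long-running workflows: the state and the resume point are data, so they can outlive the process that produced them.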

For alternatives to the LangChain ecosystem, see 33 LangChain alternatives.

#2: CrewAI

Best for role-based multi-agent teams.

CrewAI lets you define agents with specific roles, goals, and backstories. Agents collaborate on tasks, delegating work based on expertise. The mental model is a team of specialists working together.

Key features:

- Role-based agent definition

- Task delegation between agents

- Built-in memory (short-term, long-term, entity)

- Process types: sequential and hierarchical, plus asynchronous task execution

- Standalone framework with 100+ built-in tools

When to use: Workflows requiring multiple specialized agents, content production pipelines, research tasks with distinct phases.

Limitations: Less control over individual agent steps than LangGraph. Role definitions require careful prompt engineering.

```python
from crewai import Agent, Task, Crew

researcher = Agent(
    role="Research Analyst",
    goal="Find accurate information on the topic",
    backstory="Expert at finding and synthesizing information",
)

writer = Agent(
    role="Content Writer",
    goal="Create clear, engaging content",
    backstory="Skilled technical writer",
)

# Each task describes the work and the output the agent should produce
research_task = Task(
    description="Research the topic thoroughly",
    expected_output="A bullet-point summary of key findings",
    agent=researcher,
)

write_task = Task(
    description="Write article based on research",
    expected_output="A short article draft",
    agent=writer,
)

# Tasks run in order by default, so the writer sees the research output
crew = Crew(agents=[researcher, writer], tasks=[research_task, write_task])
result = crew.kickoff()
```

For understanding when to use agents vs simpler chatbots, see chatbots vs AI agents.

#3: OpenAI Agents SDK

Best for OpenAI-native production apps.

The OpenAI Agents SDK is the production-ready evolution of Swarm. It provides official support for building agents with OpenAI models, including built-in guardrails, handoffs, and tracing.

Key features:

- Official OpenAI support and maintenance

- Built-in guardrails for input/output validation

- Agent handoffs for multi-agent workflows

- Tracing and observability dashboard

- Human-in-the-loop support

- TypeScript/JavaScript SDK also available

When to use: Production applications using OpenAI models, teams wanting official support and documentation, applications requiring built-in safety guardrails.

Limitations: Optimized for the OpenAI ecosystem. It can call other providers through Chat Completions-compatible endpoints, but it works best with OpenAI models.

```python
from agents import Agent, Runner, function_tool


@function_tool
def search_database(query: str) -> str:
    """Search the knowledge base."""
    # Your database search logic here
    return f"Results for: {query}"


agent = Agent(
    name="support_agent",
    instructions="Help users with their questions. Be concise and helpful.",
    tools=[search_database],
)

result = Runner.run_sync(agent, "How do I reset my password?")
print(result.final_output)
```
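Guardrails and handoffs are easier to reason about once you see the control flow they imply. The following is a plain-Python sketch of that flow, not the SDK's API: `SketchAgent`, `input_guardrail`, `triage`, and the keyword routing table are all hypothetical, and a real deployment would let the model decide when to hand off rather than match keywords.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class SketchAgent:
    name: str
    handle: Callable[[str], str]


def input_guardrail(text: str) -> bool:
    # Hypothetical rule: reject input that appears to contain a card number.
    digits = sum(ch.isdigit() for ch in text)
    return digits < 12


billing = SketchAgent("billing", lambda q: f"[billing] handling: {q}")
support = SketchAgent("support", lambda q: f"[support] handling: {q}")
SPECIALISTS: Dict[str, SketchAgent] = {"refund": billing, "invoice": billing}


def triage(query: str) -> str:
    # The guardrail runs before any agent sees the input.
    if not input_guardrail(query):
        return "Request blocked by input guardrail."
    # Handoff: route to a specialist when a keyword matches, else stay with support.
    for keyword, agent in SPECIALISTS.items():
        if keyword in query.lower():
            return agent.handle(query)
    return support.handle(query)
```

For example, `triage("I need a refund")` is handled by the billing agent, while a message containing a 16-digit number never reaches an agent at all.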

Note: OpenAI announced AgentKit at DevDay (October 2025) as an expanded toolkit for enterprise agent deployment. The Agents SDK remains the core framework, with AgentKit adding visual development tools and enterprise features.

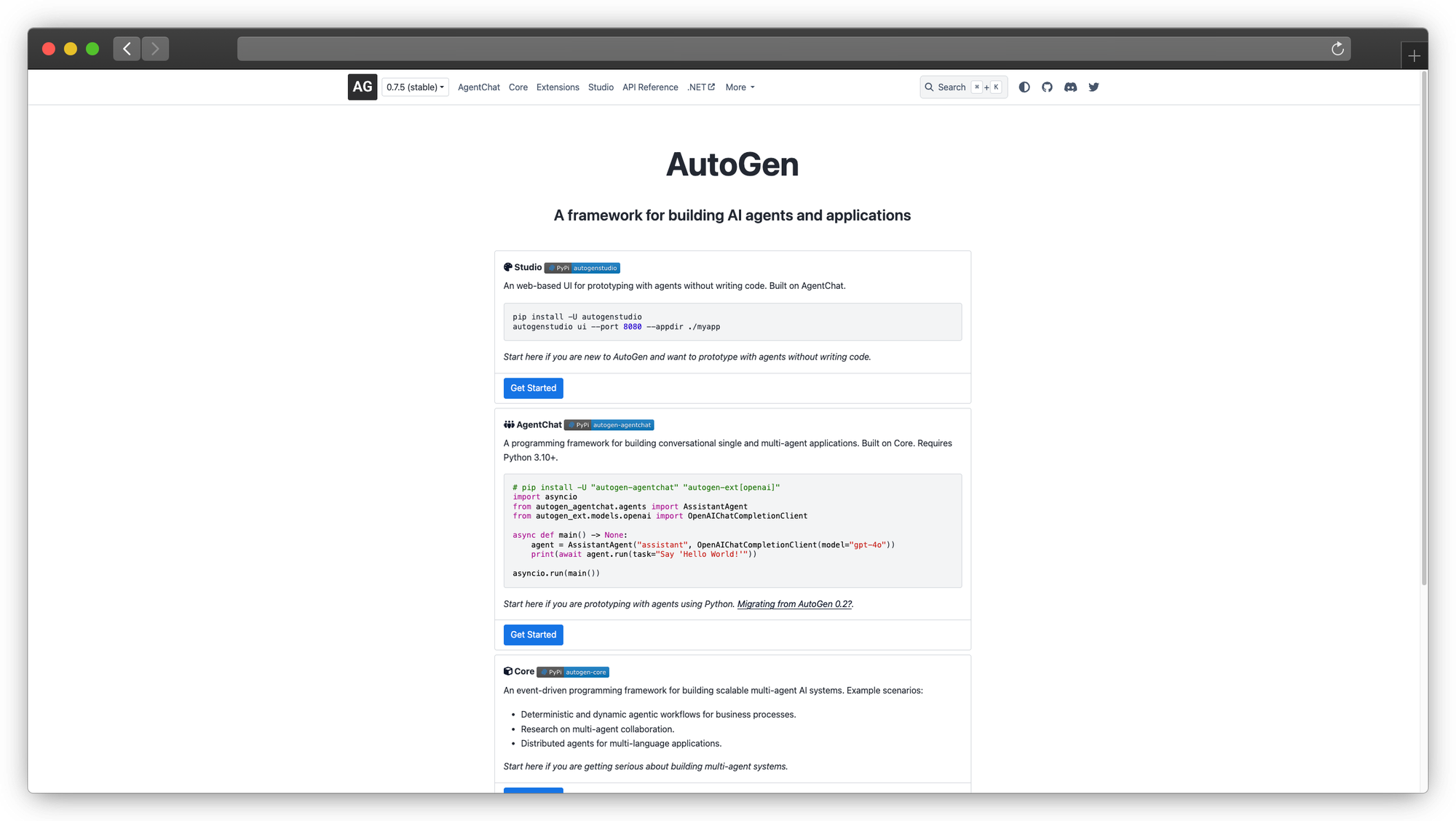

#4: AutoGen (Microsoft)

Best for conversational multi-agent systems.

AutoGen treats workflows as conversations between agents. Each agent can be an LLM, a human, or a tool. This conversational paradigm is intuitive for chat-based applications.

Key features:

- Conversation-based orchestration

- Flexible agent types (AI, human, hybrid)

- Code execution in sandboxed environments

- Group chat with multiple agents

- Human-in-the-loop at conversation level

When to use: Applications where natural conversation flow matters, systems requiring human participation alongside AI agents, code generation and execution workflows.

Limitations: Conversation metaphor can be limiting for non-chat workflows. Debugging multi-agent conversations is challenging.

Update (October 2025): Microsoft announced plans to merge AutoGen with Semantic Kernel into a unified "Microsoft Agent Framework" with GA expected Q1 2026. Both frameworks remain usable independently, but expect convergence in future releases.

```python
# This example uses the AutoGen 0.2-style API
from autogen import AssistantAgent, UserProxyAgent

assistant = AssistantAgent(
    name="assistant",
    llm_config={"model": "gpt-4"},
)

# The user proxy executes generated code and asks for human input at termination
user_proxy = UserProxyAgent(
    name="user",
    human_input_mode="TERMINATE",
    code_execution_config={"work_dir": "workspace"},
)

user_proxy.initiate_chat(
    assistant,
    message="Write a Python script to analyze sales data",
)
```

#5: LlamaIndex

Best for data-centric and RAG applications.

LlamaIndex specializes in connecting LLMs to data. Its agent capabilities focus on querying, retrieving, and reasoning over documents and databases.

Key features:

- Native RAG integration

- Query engines as agent tools

- Multi-document reasoning

- Structured data agents (SQL, Pandas)

- Extensive data connector library (100+ connectors)

When to use: Agents that primarily interact with data, RAG-heavy applications, document QA systems, database query agents.

Limitations: Less suited for general-purpose agents that don't focus on data retrieval. Heavier abstraction than some alternatives.

```python
from llama_index.core.agent import ReActAgent
from llama_index.core.tools import QueryEngineTool
from llama_index.llms.openai import OpenAI

# Assuming you have a query engine set up
query_tool = QueryEngineTool.from_defaults(
    query_engine=your_query_engine,
    name="knowledge_base",
    description="Search the company knowledge base",
)

agent = ReActAgent.from_tools(
    tools=[query_tool],
    llm=OpenAI(model="gpt-4"),
    verbose=True,
)

response = agent.chat("What were our Q3 revenue figures?")
```

For building RAG pipelines that agents can use, see building RAG pipeline.

#6: Pydantic AI

Best for type-safe production systems.

Pydantic AI brings Pydantic's validation philosophy to agents. Structured outputs are enforced at the type level. If the LLM returns invalid data, the framework requests a retry.

Key features:

- Type-safe structured outputs

- Model-agnostic (works with any provider)

- Durable execution for long-running agents

- Streaming with immediate validation

- Graph support for complex workflows

When to use: Applications requiring guaranteed output schemas, production systems where type safety matters, teams already using Pydantic.

Limitations: Python-only. Validation retries add latency whenever the model returns output that fails the schema.

```python
from pydantic import BaseModel
from pydantic_ai import Agent


class SupportResponse(BaseModel):
    answer: str
    confidence: float
    sources: list[str]


agent = Agent(
    "openai:gpt-4",
    output_type=SupportResponse,  # Enforced output type (result_type in early releases)
    system_prompt="You are a helpful support agent",
)

result = agent.run_sync("How do I upgrade my plan?")

# result.output is a validated SupportResponse (result.data in early releases)
print(result.output.answer)
print(result.output.confidence)
```
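The validate-and-retry behavior described above can be sketched with the standard library alone. This is an illustration of the pattern, not Pydantic AI's internals: `parse_response`, `run_with_retries`, and the stubbed `fake_model` are hypothetical, and the schema check is hand-written where Pydantic would do it declaratively.

```python
import json
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SupportResponse:
    answer: str
    confidence: float


def parse_response(raw: str) -> SupportResponse:
    """Raise ValueError if raw is not JSON matching the expected schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("answer"), str):
        raise ValueError("answer must be a string")
    conf = data.get("confidence")
    if isinstance(conf, bool) or not isinstance(conf, (int, float)):
        raise ValueError("confidence must be a number")
    return SupportResponse(data["answer"], float(conf))


def run_with_retries(call_model: Callable[[str], str], prompt: str,
                     max_retries: int = 2) -> SupportResponse:
    message = prompt
    for _ in range(max_retries + 1):
        raw = call_model(message)
        try:
            return parse_response(raw)
        except ValueError as err:
            # Feed the validation error back so the model can correct itself.
            message = f"{prompt}\n\nYour last reply was invalid ({err}). Reply with valid JSON."
    raise RuntimeError("model never produced a valid response")


# Stub model: the first reply is missing a field, the second is valid.
replies: List[str] = [
    '{"answer": "Go to Settings"}',
    '{"answer": "Go to Settings > Billing", "confidence": 0.9}',
]
calls: List[str] = []


def fake_model(message: str) -> str:
    calls.append(message)
    return replies[len(calls) - 1]


result = run_with_retries(fake_model, "How do I upgrade my plan?")
```

Each retry is an extra model call, which is where the added latency comes from; capping `max_retries` keeps the worst case bounded.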

#7: Semantic Kernel (Microsoft)

Best for enterprise .NET integration.

Semantic Kernel integrates LLMs with conventional programming. It's designed for enterprise environments, particularly those using .NET and Azure.

Key features:

- First-class .NET/C# support

- Plugin architecture for extensibility

- Azure OpenAI integration

- Memory and planning capabilities

- Enterprise-grade security patterns

When to use: Enterprise .NET environments, Azure-centric deployments, teams with C# expertise, applications requiring Microsoft ecosystem integration.

Limitations: Python support exists, but .NET is the primary focus. Smaller community than the LangChain ecosystem.

Update (October 2025): Microsoft announced plans to merge Semantic Kernel with AutoGen. For new .NET projects, Semantic Kernel remains the recommended starting point with the understanding that it will evolve into the unified Microsoft Agent Framework.

```csharp
using Microsoft.SemanticKernel;
using Microsoft.SemanticKernel.Agents;

var kernel = Kernel.CreateBuilder()
    .AddAzureOpenAIChatCompletion(deploymentName, endpoint, apiKey)
    .Build();

var agent = new ChatCompletionAgent
{
    Name = "SupportAgent",
    Instructions = "You are a helpful support agent",
    Kernel = kernel
};

// InvokeAsync yields results as an async sequence, so iterate instead of awaiting one value
await foreach (var response in agent.InvokeAsync("How do I reset my password?"))
{
    Console.WriteLine(response.Message.Content);
}
```

#8: Smolagents (Hugging Face)

Best for minimalist code-centric agents.

Smolagents takes a radically simple approach: the agent writes and executes Python code to achieve goals. No complex abstractions. Just a loop of code generation and execution.

Key features:

- Code-first approach

- Minimal abstractions

- Direct Python library access

- Small, self-contained agents

- Hugging Face model integration

- MCP (Model Context Protocol) support

When to use: Quick prototyping, agents that primarily need to run computations, scenarios where you want agents to write code rather than use predefined tools.

Limitations: Code execution requires sandboxing for production. Less structured than tool-based frameworks.

```python
# Note: newer smolagents releases expose this model class as InferenceClientModel
from smolagents import CodeAgent, HfApiModel

agent = CodeAgent(
    tools=[],
    model=HfApiModel(),
)

result = agent.run("Calculate the compound interest on $10000 at 5% for 10 years")
# Agent writes and executes Python code to compute the answer
```
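The "generate code, then execute it" loop can be illustrated without any framework. In this sketch the model is a stub: `fake_model` and `run_code_agent` are hypothetical names, and the builtins allowlist is only a gesture at isolation, not a real sandbox.

```python
from typing import Callable, Dict


def fake_model(task: str) -> str:
    # Stand-in for an LLM call: returns Python source that solves the task.
    return (
        "principal = 10000\n"
        "rate = 0.05\n"
        "years = 10\n"
        "final_answer = round(principal * (1 + rate) ** years - principal, 2)\n"
    )


def run_code_agent(task: str, model: Callable[[str], str]) -> object:
    code = model(task)
    # Expose only a small allowlist of builtins. This is NOT a real sandbox.
    allowed = {"round": round, "abs": abs, "min": min, "max": max, "sum": sum}
    namespace: Dict[str, object] = {"__builtins__": allowed}
    exec(code, namespace)
    # By convention in this sketch, the generated code stores its result here.
    return namespace.get("final_answer")


interest = run_code_agent(
    "Calculate the compound interest on $10000 at 5% for 10 years", fake_model
)
```

With annual compounding, the generated code computes 10000 × 1.05^10 − 10000, which is about 6288.95. In production, run generated code in an isolated process or container, as the limitations above note.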

For lightweight model options, see best lightweight language models.

#9: Agno

Best for high-performance multi-agent systems.

Agno (formerly Phidata) focuses on performance: it aims to instantiate agents in microseconds, where heavier frameworks take noticeably longer. Agno's own published benchmarks report ~50x lower memory usage than LangGraph and ~10,000x faster instantiation. Treat these as vendor figures and verify them against your own workload.

Key features:

- Microsecond agent instantiation

- ~50x lower memory than alternatives (per Agno benchmarks)

- Concurrent agent execution

- Optional managed platform (AgentOS)

- Session and state management

- MCP server support

When to use: High-throughput applications, scenarios requiring thousands of concurrent agents, latency-sensitive systems.

Limitations: Newer framework with smaller community. Some features require their managed platform.

```python
from agno.agent import Agent
from agno.models.openai import OpenAIChat
from agno.tools.duckduckgo import DuckDuckGoTools

agent = Agent(
    name="Research Agent",
    model=OpenAIChat(id="gpt-4o"),
    tools=[DuckDuckGoTools()],
    instructions="Always include sources",
    markdown=True,
)

agent.print_response("What are the latest developments in AI agents?", stream=True)
```
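Instantiation time and memory claims are straightforward to check yourself. The sketch below measures both for a hypothetical `SketchAgent` dataclass using only the standard library; to compare frameworks, you would pass each framework's own constructor as the `factory`. The function name `measure_instantiation` is an assumption of this example, not part of any library.

```python
import time
import tracemalloc
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class SketchAgent:
    # Hypothetical lightweight agent; swap in your framework's constructor.
    name: str
    instructions: str = ""
    tools: List[str] = field(default_factory=list)


def measure_instantiation(factory: Callable[[], object],
                          runs: int = 1000) -> Tuple[float, float]:
    """Return (average seconds per instance, average bytes per instance)."""
    tracemalloc.start()
    before, _ = tracemalloc.get_traced_memory()
    start = time.perf_counter()
    agents = [factory() for _ in range(runs)]  # keep references alive
    elapsed = time.perf_counter() - start
    after, _ = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    assert len(agents) == runs
    return elapsed / runs, (after - before) / runs


avg_seconds, avg_bytes = measure_instantiation(lambda: SketchAgent("research"))
print(f"{avg_seconds * 1e6:.2f} microseconds and {avg_bytes:.0f} bytes per agent")
```

Memory tracing slows allocation, so the timing here is an upper bound; for a cleaner timing figure, measure time and memory in separate runs.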

#10: Swarms

Best for large-scale orchestration.

Swarms is designed for orchestrating many agents at enterprise scale. It supports various multi-agent architectures: sequential, hierarchical, parallel, and swarm-based.

Key features:

- Multiple orchestration patterns

- Scale to thousands of agents

- Production-focused design

- Integration with enterprise tools

- Extensive documentation

When to use: Large-scale agent deployments, complex orchestration requirements, enterprise production systems.

Limitations: Steeper learning curve. May be overkill for simple agent applications.

```python
from swarms import Agent, SequentialWorkflow

# Define specialized agents
researcher = Agent(
    agent_name="Researcher",
    system_prompt="You research topics thoroughly",
    model_name="gpt-4",
)

writer = Agent(
    agent_name="Writer",
    system_prompt="You write clear, engaging content",
    model_name="gpt-4",
)

# Create workflow
workflow = SequentialWorkflow(
    agents=[researcher, writer],
    max_loops=1,
)

result = workflow.run("Write a report on AI agent frameworks")
```

For enterprise deployment patterns, see small models big wins in agentic AI.

Part 2: Observability Platforms

Building agents is half the battle. Debugging them in production requires specialized observability.

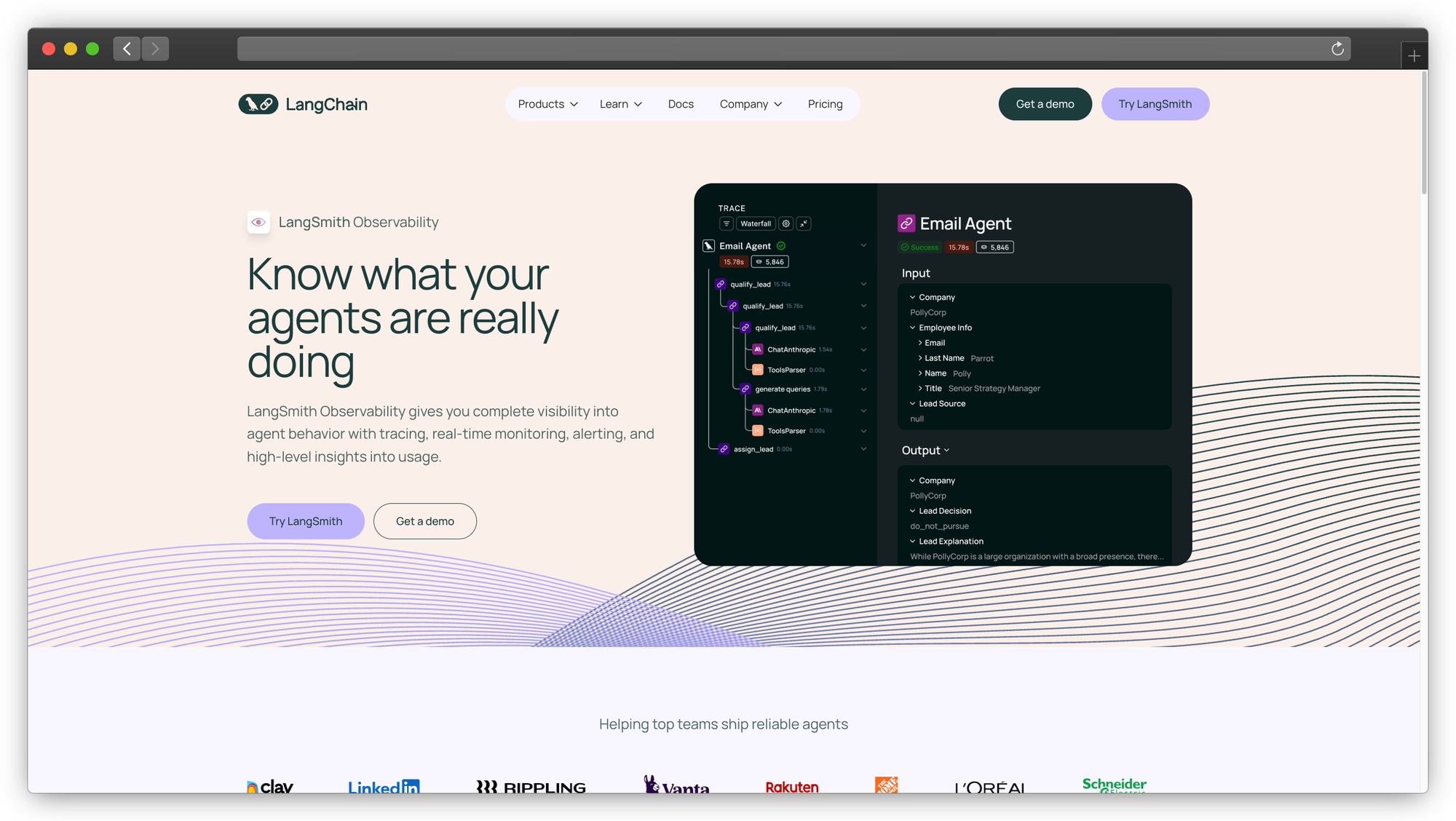

#11: LangSmith

Best observability platform for the LangChain ecosystem.

LangSmith provides tracing, monitoring, and evaluation for LLM applications. If you're using LangGraph or LangChain, it's the natural observability choice.

Key features:

- End-to-end trace visualization

- Prompt versioning and testing

- Evaluation datasets and metrics

- Alerting on anomalies

- Self-hosted and cloud options

Best for: LangChain/LangGraph users, teams needing comprehensive LLM observability, production debugging.

Pricing: Free tier available. Paid plans for production usage.

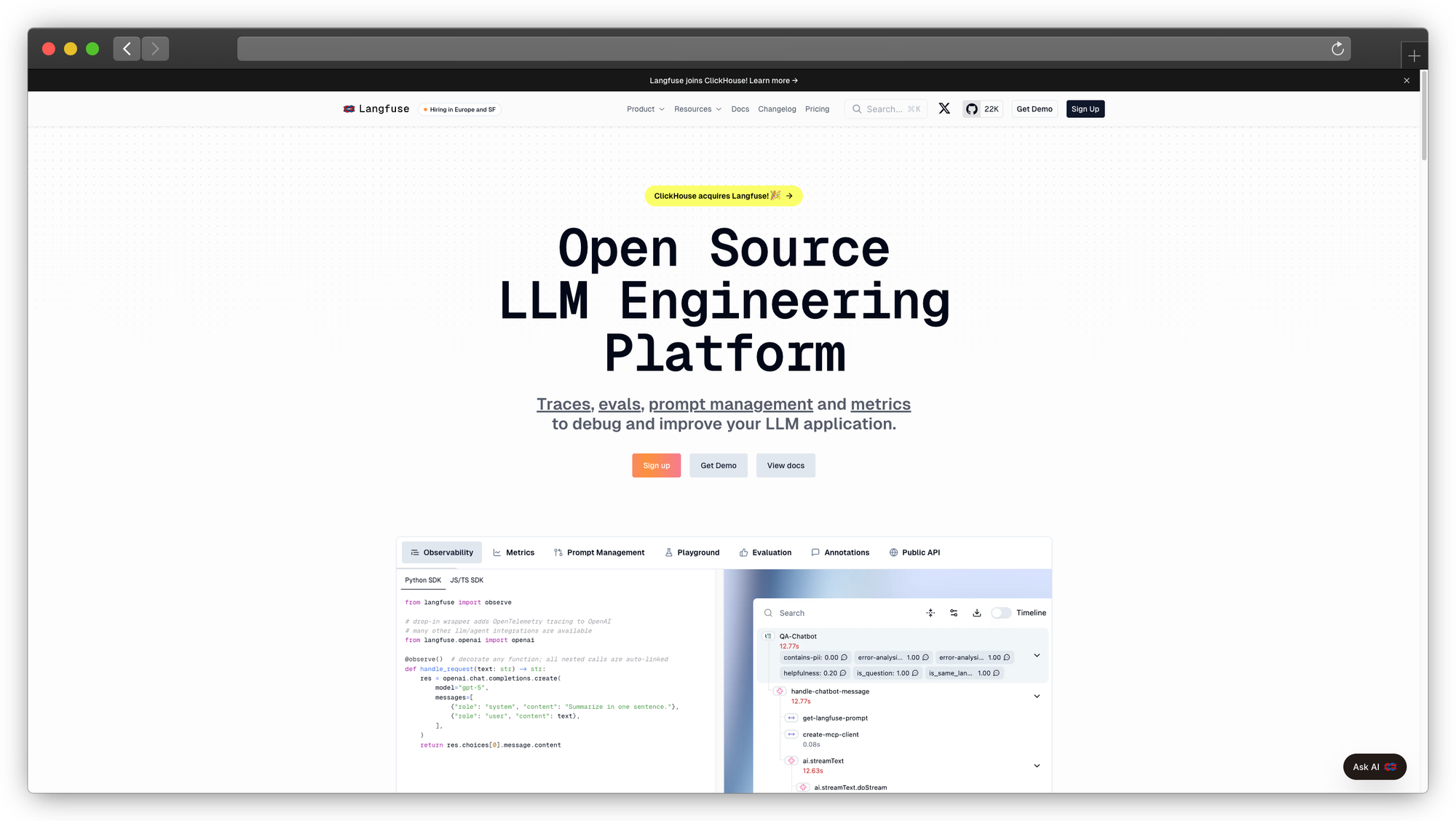

#12: Langfuse

Best open-source observability for AI agents.

Langfuse is an open-source alternative to LangSmith. Self-host for full data control or use their cloud offering.

Key features:

- Open-source (MIT license)

- Framework-agnostic integration

- Prompt management

- Cost tracking

- Self-hosted deployment option

Best for: Teams requiring data control, open-source preference, multi-framework environments.

For evaluation best practices, see enterprise AI evaluation.

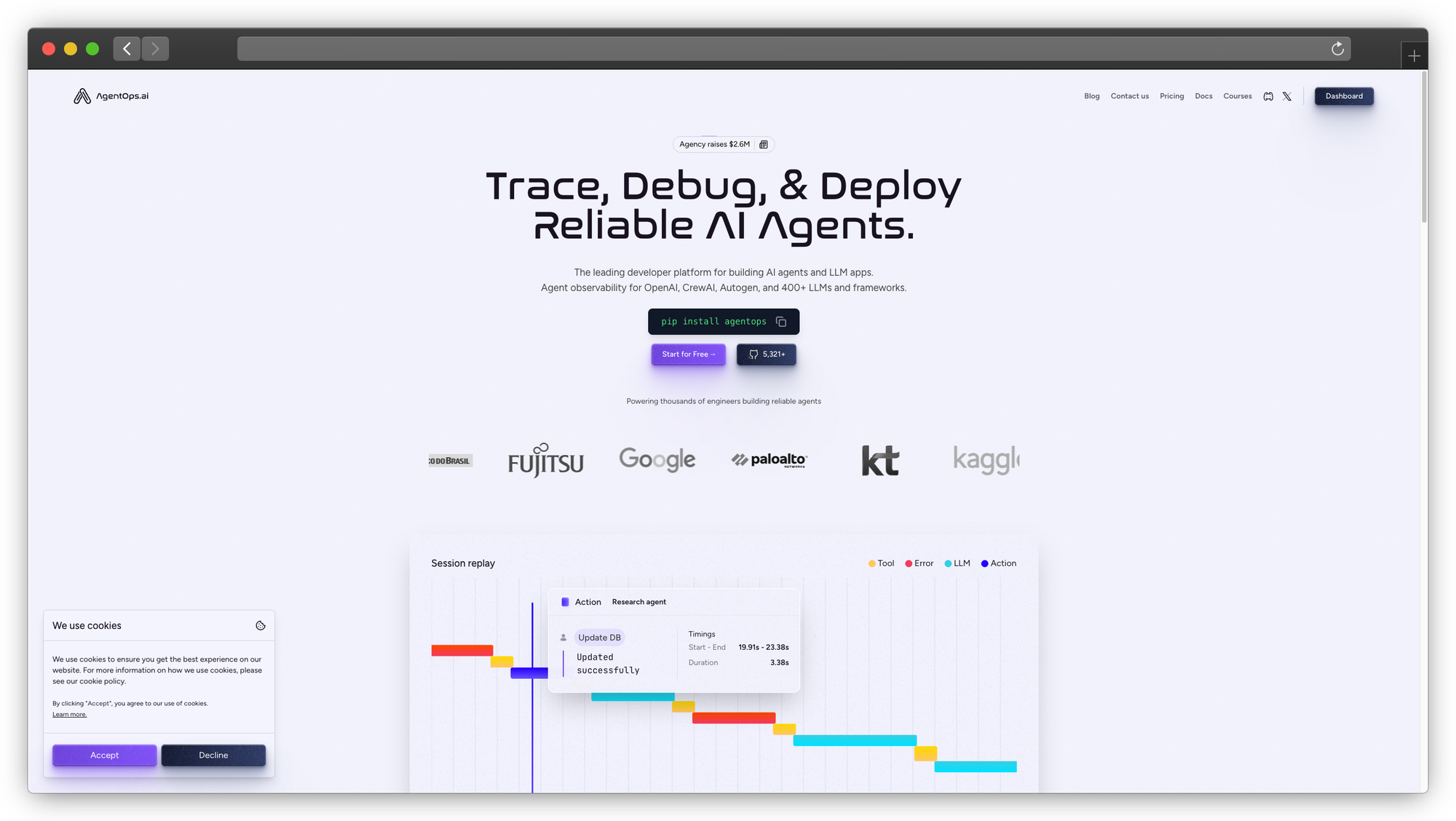

#13: AgentOps

Best observability for autonomous agent systems.

AgentOps is purpose-built for agent observability. It tracks agent decisions, tool usage, and multi-step reasoning patterns that general LLM observability tools miss.

Key features:

- Agent-specific instrumentation

- Decision tree visualization

- Tool call monitoring

- Multi-step reasoning traces

- Session replay

Best for: Complex autonomous agents, debugging multi-step failures, understanding agent decision patterns.
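What tool-call monitoring captures can be shown with a small decorator. This is a generic sketch, not the AgentOps SDK: `traced_tool` and the in-memory `TRACE` list are hypothetical stand-ins for an instrumentation hook and a tracing backend.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # in-memory stand-in for a tracing backend


def traced_tool(func: Callable[..., Any]) -> Callable[..., Any]:
    """Record name, arguments, duration, and outcome of every tool call."""
    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        span: Dict[str, Any] = {"tool": func.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
            span["status"] = "ok"
            return result
        except Exception as exc:
            span["status"] = "error"
            span["error"] = repr(exc)
            raise
        finally:
            # Runs on success and failure, so every call leaves a span.
            span["seconds"] = time.perf_counter() - start
            TRACE.append(span)
    return wrapper


@traced_tool
def search_database(query: str) -> str:
    return f"Results for: {query}"


@traced_tool
def divide(a: float, b: float) -> float:
    return a / b


search_database("reset password")
try:
    divide(1, 0)
except ZeroDivisionError:
    pass  # the failure is still captured in TRACE
```

After these two calls, `TRACE` holds one successful span and one failed span in call order, which is the raw material a session replay or decision-tree view is built from.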

For reliability monitoring, see LLM reliability and evaluation.

Part 3: Managed Platforms

For teams that want agents without managing infrastructure.

#14: Amazon Bedrock Agents

Best managed platform for AWS environments.

Bedrock Agents provides fully managed agent infrastructure on AWS. Define agents, connect to data sources, and deploy without managing servers.

Key features:

- Fully managed infrastructure

- Knowledge base integration

- Action groups for tool execution

- AWS service integration

- Enterprise security and compliance

Best for: AWS-native organizations, teams without ML infrastructure expertise, enterprise compliance requirements.

Limitations: AWS lock-in. Less flexibility than open-source options.

#15: Vertex AI Agent Builder

Best managed platform for Google Cloud.

Vertex AI Agent Builder is Google's managed agent platform. Build conversational agents with Google's models and infrastructure.

Key features:

- Google Cloud integration

- Gemini model access

- Data connectors for Google services

- Enterprise search capabilities

- Managed scaling

Best for: Google Cloud organizations, teams using Google Workspace, applications requiring Google service integration.

Limitations: Google Cloud lock-in. Gemini-focused model selection.

For self-hosted alternatives, see self-hosted LLM guide.

Decision Framework: Choosing Your AI Agent Framework

| If you need... | Choose | Why |
|---|---|---|
| Complex stateful workflows | LangGraph | Graph-based, best state management |
| Multi-agent role collaboration | CrewAI | Intuitive team metaphor |
| OpenAI production deployment | OpenAI Agents SDK | Official support, built-in guardrails |
| Data/RAG-centric agents | LlamaIndex | Native data integration |
| Type-safe outputs | Pydantic AI | Enforced schemas |
| .NET enterprise integration | Semantic Kernel | First-class C# support |
| High-performance runtime | Agno | ~50x lower memory (per Agno benchmarks) |
| Minimal abstraction | Smolagents | Code-first simplicity |
| Large-scale orchestration | Swarms | Enterprise scale |
| AWS managed infrastructure | Bedrock Agents | Fully managed |
| Google Cloud integration | Vertex AI | GCP native |

Start Here

For most enterprise teams: Start with LangGraph + LangSmith. The combination provides the control you need for production with observability built in.

For rapid prototyping: CrewAI or Smolagents get you to working agents fastest.

For OpenAI-focused apps: OpenAI Agents SDK provides the smoothest path with official support.

For data-heavy applications: LlamaIndex if your agents primarily query and reason over documents.

For Microsoft/.NET shops: Semantic Kernel today, with an eye toward the unified Microsoft Agent Framework in 2026.

Building Enterprise-Ready Agents

Regardless of which AI agent framework you choose, production agents need:

1. State persistence - Agents that can pause, resume, and recover from failures. LangGraph and Pydantic AI handle this well.

2. Human-in-the-loop - Approval workflows for high-stakes actions. Most frameworks support this, but implementation varies.

3. Observability - You can't debug what you can't see. Add LangSmith, Langfuse, or AgentOps early.

4. Memory systems - Agents that remember context across sessions. For sophisticated memory, see Prem Cortex.

5. Security guardrails - Input validation, output filtering, access controls. See our enterprise AI security guide for implementation.
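As one illustration of the human-in-the-loop requirement, an approval gate can be as simple as a policy check in front of the action. The sketch below is framework-agnostic; `Action`, `APPROVAL_THRESHOLD`, and `execute_with_approval` are hypothetical names, and the dollar threshold is an assumed policy rather than a recommendation.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    name: str
    amount: float  # hypothetical risk signal, e.g. refund value in dollars


APPROVAL_THRESHOLD = 100.0  # assumed policy: larger actions need a human


def execute_with_approval(action: Action,
                          approve: Callable[[Action], bool],
                          audit_log: List[str]) -> str:
    if action.amount >= APPROVAL_THRESHOLD:
        # High-stakes path: block until the reviewer decides.
        if not approve(action):
            audit_log.append(f"rejected:{action.name}")
            return "rejected"
        audit_log.append(f"approved:{action.name}")
    else:
        audit_log.append(f"auto:{action.name}")
    return "executed"


def deny_all(action: Action) -> bool:
    # Stub reviewer; in production this would be a review queue or UI.
    return False


audit_log: List[str] = []
small = execute_with_approval(Action("refund", 20.0), deny_all, audit_log)
large = execute_with_approval(Action("refund", 500.0), deny_all, audit_log)
```

The small refund executes automatically while the large one waits on the reviewer, and both decisions land in the audit log. Combining this gate with persisted state lets an agent pause for approval without holding a process open.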

For teams building agents on private infrastructure with managed fine-tuning, Prem Studio handles model deployment and optimization.

Book a technical call to discuss agent architecture for your use case.

Frequently Asked Questions

What is the best AI agent framework in 2026?

It depends on your use case. LangGraph leads for complex stateful workflows, CrewAI excels at role-based multi-agent teams, and OpenAI Agents SDK is best for OpenAI-native production apps. See our decision framework above.

LangGraph vs CrewAI: which should I choose?

Choose LangGraph if you need fine-grained control over agent steps, complex branching logic, or robust state persistence. Choose CrewAI if you prefer the intuitive "team of specialists" metaphor and want faster prototyping with role-based agents.

Are these AI agent frameworks production-ready?

LangGraph, CrewAI, OpenAI Agents SDK, and Pydantic AI are all used in production environments. Key requirements for production: state persistence, observability, human-in-the-loop controls, and proper error handling.

What happened to OpenAI Swarm?

Swarm was an experimental/educational framework. OpenAI replaced it with the production-ready Agents SDK in March 2025, and expanded capabilities with AgentKit announced at DevDay October 2025.

Is Microsoft merging AutoGen and Semantic Kernel?

Yes. Microsoft announced in October 2025 that AutoGen and Semantic Kernel will merge into a unified "Microsoft Agent Framework" with GA expected Q1 2026. Both frameworks remain usable independently during the transition.

What to Read Next

Your AI agent framework choice is just the start. Here's what to read based on your next steps:

Understanding agent architectures:

- Chatbots vs AI Agents - when to use which

- Small Models Big Wins - efficient agent deployment

Building agent components:

- Building RAG Pipeline - data retrieval for agents

- How to Fine-Tune AI Models - customizing agent behavior

Production deployment:

- Private LLM Deployment - infrastructure options

- Enterprise AI Evaluation - testing agents