PremAI vs Google Vertex AI: Privacy, Flexibility, and Cost Compared

Google Vertex AI is growing fast in enterprise. Gemini models, Vertex AI Studio for prompt design, deep GCP integration. For cloud-native teams on Google Cloud, it’s a compelling platform.

Then you ask the hard question: Can I run this on my own infrastructure?

Google’s answer is no.

Vertex AI is cloud-only. Your data goes to Google. Your models run on Google. Your costs scale with Google’s pricing. There is no on-premise option. There is no air-gapped deployment.

There is no “run it yourself.”

For many enterprises, this is fine. GCP handles the infrastructure. You focus on building applications.

But for teams with data sovereignty requirements, on-premise mandates, or cost concerns at high volume, that’s a significant limitation.

This comparison covers both platforms with their actual tradeoffs. Vertex AI has real strengths. PremAI has different strengths. Your requirements determine which fits.

Platform Overview

Google Vertex AI:

Google’s unified ML platform offering Gemini models (1.5 Pro, 1.5 Flash, 2.0), plus access to Model Garden with 150+ models including open models like Llama and Mistral. Includes Vertex AI Studio for prompt engineering, AutoML for non-ML teams, and Vertex AI Pipelines for MLOps.

Deep integration with GCP services: BigQuery ML runs models directly on your data warehouse, Cloud Storage provides seamless data access, and Pub/Sub enables streaming inference.

PremAI:

Self-hosted or managed AI infrastructure that runs anywhere. Supports any model (Llama, Mistral, Phi, Gemini via API, OpenAI via API, custom fine-tunes). True on-premise deployment available where data never leaves your infrastructure. Managed option operates under Swiss jurisdiction for data sovereignty.

The fundamental difference:

Vertex AI is GCP-native. Your data lives in Google Cloud. Your models run on Google’s TPUs and GPUs.

PremAI can run anywhere. Including your own servers, your own data centers, or air-gapped environments.

This isn’t about which is “better.” It’s about matching architecture to requirements.

Feature Comparison

| Category | Google Vertex AI | PremAI | Winner |

|---|---|---|---|

| On-Premise Option | None | Full support | PremAI |

| Air-Gapped Deployment | None | Supported | PremAI |

| Cloud Deployment | GCP only | Any cloud + on-prem | PremAI |

| Model Selection | Gemini, PaLM, Model Garden (150+ models) | Any model | Tie |

| Data Residency | GCP regions (US jurisdiction) | Your infra or Swiss | PremAI |

| ML Tooling | Excellent (Studio, AutoML, Pipelines) | API-focused | Vertex |

| Fine-Tuning | Gemini, PaLM, open models | Any model, any method | Tie |

| BigQuery Integration | Native (BigQuery ML) | Via APIs | Vertex (for GCP users) |

| Pricing Model | Complex (compute + tokens + storage) | Transparent | PremAI |

| Vendor Lock-in | High (GCP ecosystem) | Low | PremAI |

| Enterprise Support | Google Cloud support | Direct support | Tie |

| Compliance Certs | SOC 2, HIPAA, ISO, FedRAMP | SOC 2, GDPR, HIPAA | Tie |

Where Vertex AI Wins

ML Tooling:

Vertex AI Studio provides a visual interface for prompt engineering, model evaluation, and deployment. You can test prompts, compare outputs across models, and deploy to production without leaving the UI.

AutoML enables teams without ML expertise to train custom models. Upload labeled data, AutoML handles feature engineering, model selection, and hyperparameter tuning. This democratizes ML within organizations.

Vertex AI Pipelines handles MLOps workflows: training orchestration, model versioning, A/B testing, monitoring. For teams building complex ML systems, this infrastructure is significant.

Google Ecosystem:

If you’re a GCP shop, Vertex integrates seamlessly:

- BigQuery ML: Run ML models directly on your data warehouse without moving data

- Cloud Storage: Native access to training data and model artifacts

- Pub/Sub: Streaming inference with built-in message handling

- Cloud Run: Serverless inference deployment

- Single billing and IAM across services

For organizations standardized on GCP, this integration reduces friction.

Model Garden:

150+ models available for deployment, including open models like Llama 2/3 and Mistral. One-click deployment to Vertex endpoints. This breadth is useful for experimentation and production deployment.

The catch: You’re still running on GCP infrastructure. Model Garden doesn’t change the cloud-only limitation.

Gemini Models:

Gemini 1.5 Pro offers 1M token context (extendable to 2M). This is the longest production context window available. For use cases involving long documents, extensive code analysis, or complex multi-turn conversations, this capability matters.

Gemini 2.0 brings improved reasoning and multimodal capabilities. If you specifically need Gemini, Vertex AI is the enterprise path.

Where PremAI Wins

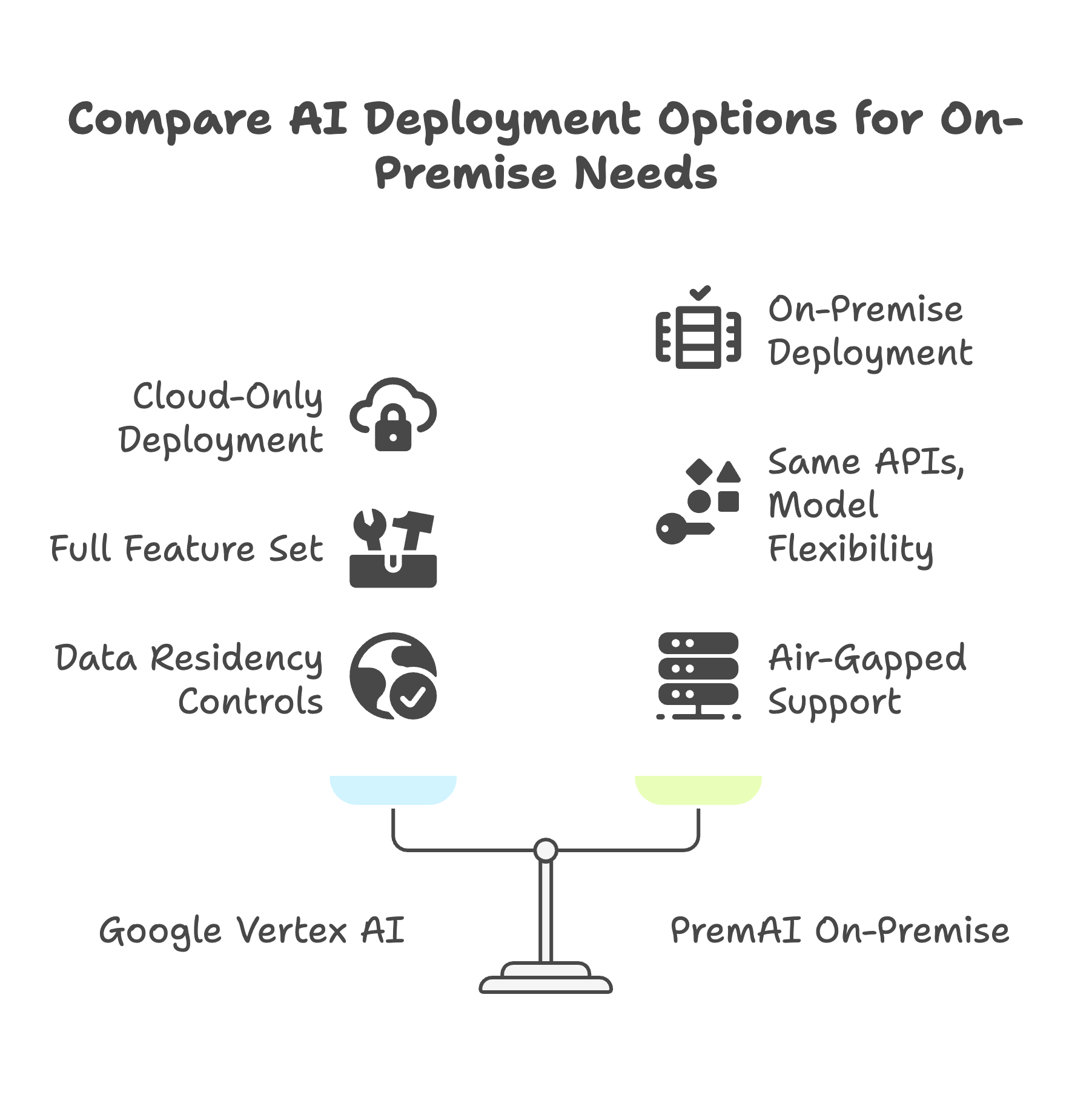

On-Premise Deployment:

This is the core differentiator. Vertex AI has no on-prem option.

Google Distributed Cloud (Anthos) exists for edge GKE deployments, but it does NOT include Vertex AI services. You cannot run Gemini on your own servers. You cannot run Model Garden on-prem. You cannot run Vertex AI Studio in your data center.

There is no workaround. Google does not offer this capability.

PremAI deploys to your data center with the same APIs as the managed version:

- Full platform on your hardware

- Same APIs as managed version

- Air-gapped support (no internet connectivity required)

- No data leaves your network

Why this matters:

- Healthcare: Some HIPAA interpretations require on-prem for PHI

- Finance: Trading systems often mandate air-gapped infrastructure

- Defense: Classified workloads need isolated infrastructure

- EU enterprises: GDPR plus CLOUD Act concerns drive on-prem requirements

- Manufacturing: Edge AI at factory locations without reliable internet

Cloud Agnostic:

Vertex AI runs on GCP only. You’re committing to Google’s ecosystem.

PremAI runs on AWS, Azure, GCP, on-premise, or hybrid configurations. No cloud vendor lock-in.

This matters for:

- Multi-cloud strategies (spreading risk across providers)

- Migration flexibility (can move infrastructure without changing AI stack)

- Negotiation leverage (not locked into a single vendor)

- Regulated industries (some regulations require multi-cloud capability)

Data Sovereignty:

Vertex AI: Google is a US company. US CLOUD Act applies regardless of region selection. Even with EU region selection, US law enforcement can compel access.

The difference between data residency and data sovereignty:

- Residency: Where data is physically stored

- Sovereignty: What legal jurisdiction governs access to that data

Vertex AI provides residency (EU regions available). It does not provide sovereignty (US law still applies).

PremAI alternatives:

- Self-hosted: Your jurisdiction, full control

- Managed (Swiss): Swiss FADP applies, EU adequacy, no US intelligence sharing agreements

Cost at Scale:

Vertex AI pricing is complex. Costs include:

- Per-token charges (vary by model)

- Compute costs for training and fine-tuning

- Storage costs for models and data

- Prediction compute hours

- Network egress

Vertex AI Pricing (February 2026):

| Model | Input (per 1M tokens) | Output (per 1M tokens) |

|---|---|---|

| Gemini 1.5 Pro | $1.25 | $5.00 |

| Gemini 1.5 Flash | $0.075 | $0.30 |

| Gemini 2.0 Flash | $0.10 | $0.40 |

| text-embedding-004 | $0.025 | - |

Additional costs:

- Training compute: Varies by TPU/GPU type

- Storage: $0.02-0.04 per GB/month

- Egress: $0.08-0.12 per GB

Total cost complexity:

Predicting Vertex AI monthly spend requires tracking: token usage by model, training compute hours, prediction compute hours, storage GB, and network egress. Multiple pricing dimensions make forecasting difficult.

PremAI pricing:

- Transparent per-seat or usage-based model

- Self-hosted: Your infrastructure costs only

- No hidden token markup

- Fine-tuning included

Cost Example: 10M tokens/day

| Component | Vertex AI (Gemini 1.5 Pro) | PremAI (Self-Hosted Llama 3.3) |

|---|---|---|

| Inference | ~$62/day | $0 (your hardware) |

| Infrastructure | Included | ~$30/day (H100 spot) |

| Fine-tuning | Per-hour compute | Included |

| Egress | Variable | $0 |

| Monthly Total | ~$2,500-3,500 | ~$1,000-1,500 |

The On-Premise Gap

This is the core differentiator. Let’s be explicit about what Google does and doesn’t offer.

What Google DOES offer:

- Vertex AI on GCP (all regions)

- Model Garden with 150+ models (cloud deployment)

- Vertex AI Studio for development

- Anthos for on-prem Kubernetes (GKE clusters)

- Google Distributed Cloud for edge scenarios

What Google does NOT offer:

- Vertex AI services on-premise

- Gemini running in your data center

- Model Garden deployment to your infrastructure

- Vertex AI Studio on your network

- Any path to run Vertex AI capabilities outside GCP

Google Distributed Cloud:

Google offers Anthos for edge and on-premise Kubernetes. Teams sometimes assume this includes Vertex AI. It does not.

Anthos provides GKE (Kubernetes) on your infrastructure. You can run containers. You cannot run Vertex AI services. The Gemini model is not available for on-prem deployment.

Why this matters:

Some workloads require on-premise deployment. Not as a preference. As a requirement.

- Classified workloads: Cannot connect to external networks

- Healthcare PHI: Some interpretations require on-prem

- Financial trading: Latency and IP protection requirements

- Manufacturing OT: Air-gapped operational technology environments

- Government: Sovereign cloud requirements in many countries

If you have on-prem requirements and want Vertex AI capabilities, you need an alternative path.

The workarounds Vertex users try:

- Self-host open models on GCE/GKE: Works, but loses Vertex AI features (Studio, Pipelines, AutoML). You’re just running vLLM on GCP.

- Use Vertex for training, self-host for inference: Complex architecture. Training data still goes to Google. Loses integration benefits.

- Accept cloud-only with data residency controls: Doesn’t solve sovereignty. US CLOUD Act still applies.

None of these give you Vertex AI capabilities on your own infrastructure.

PremAI on-premise:

- Full platform on your hardware

- Same APIs as managed version

- Air-gapped support (no internet required)

- No data leaves your network

- Model flexibility (run any model you want)

Data Handling Comparison

| Aspect | Vertex AI | PremAI (Self-Hosted) | PremAI (Managed) |

|---|---|---|---|

| Data Location | GCP region | Your servers | Swiss |

| Legal Jurisdiction | US (CLOUD Act) | Your jurisdiction | Swiss (FADP) |

| Data Retention | 30-55 days (abuse monitoring) | Your policy | Your policy |

| Training Data Use | Paid users excluded | Never | Never |

| Model Logs | GCP logging | Your logging | Your logging |

| Audit Access | GCP audit logs | Full control | Full logs |

| Air-Gapped | Not available | Supported | No (needs connectivity) |

Google’s data policies:

From Google’s documentation:

- Prompts and responses may be retained 30-55 days for abuse monitoring

- Paid API users are excluded from training data use

- Data is processed in your selected region

- Human review possible for flagged content

These policies are reasonable. Google has invested significantly in data protection. But US jurisdiction applies.

The jurisdiction issue:

Google is a US company. The US CLOUD Act (2018) allows US law enforcement to compel access to data stored anywhere. “EU region” selection does not change this legal reality.

EU regulators have expressed concern about this gap between residency and sovereignty. Some EU financial regulators are issuing guidance requiring sovereignty, not just residency.

Swiss alternative:

PremAI’s managed offering operates under Swiss FADP. Switzerland has:

- EU adequacy status for GDPR

- Independence from US intelligence sharing (not Five Eyes, not Nine Eyes)

- Strong data protection tradition

- No US CLOUD Act exposure

For European enterprises needing GDPR compatibility without US jurisdiction exposure, Swiss infrastructure provides a path.

Pricing Deep Dive

Vertex AI Pricing (February 2026):

Gemini Models:

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context |

|---|---|---|---|

| Gemini 1.5 Pro | $1.25 | $5.00 | 1M tokens |

| Gemini 1.5 Flash | $0.075 | $0.30 | 1M tokens |

| Gemini 2.0 Flash | $0.10 | $0.40 | 1M tokens |

Additional Vertex Costs:

- Training compute: $2-8 per TPU/GPU hour

- Prediction compute: $0.03-0.10 per 1,000 predictions

- Storage: $0.02-0.04 per GB/month

- Egress: $0.08-0.12 per GB

- Vertex AI Pipelines: $0.03 per pipeline run

PremAI Pricing:

- Transparent per-seat or usage model

- Self-hosted: Your infrastructure only

- No token markup

- Fine-tuning infrastructure included

When Vertex makes sense on cost:

- Low-to-moderate volume (under 2M tokens/day)

- Need Gemini specifically (no open alternative acceptable)

- Already committed to GCP infrastructure

- ML tooling value offsets costs

- Team doesn’t have ML infrastructure expertise

When PremAI makes sense on cost:

- High volume (over 2M tokens/day)

- Open models meet quality requirements

- Multi-cloud or cloud-agnostic strategy

- On-prem infrastructure available or cost-effective

- Want predictable costs without per-token charges

Fine-Tuning Comparison

Vertex AI Fine-Tuning:

Available for:

- Gemini 1.5 Pro/Flash

- PaLM 2 models

- Open models in Model Garden (Llama, Mistral)

Process:

- Upload training data to GCP

- Submit fine-tuning job

- Google trains on their infrastructure

- Deployed model available via Vertex endpoints

Supports supervised tuning and RLHF (for some models).

PremAI Fine-Tuning:

Available for any open model.

Methods:

- Full fine-tuning

- LoRA (parameter-efficient)

- QLoRA (quantized, most efficient)

Process:

- Upload seed examples (as few as 50)

- Autonomous data augmentation expands training set

- Training runs on your infrastructure

- Model deploys via same API

The key difference:

Vertex: Your training data goes to Google. Training happens on their infrastructure.

PremAI: Your training data stays local. You control the entire process.

For sensitive data (healthcare records, financial documents, legal files, proprietary content), this distinction matters for compliance and risk management.

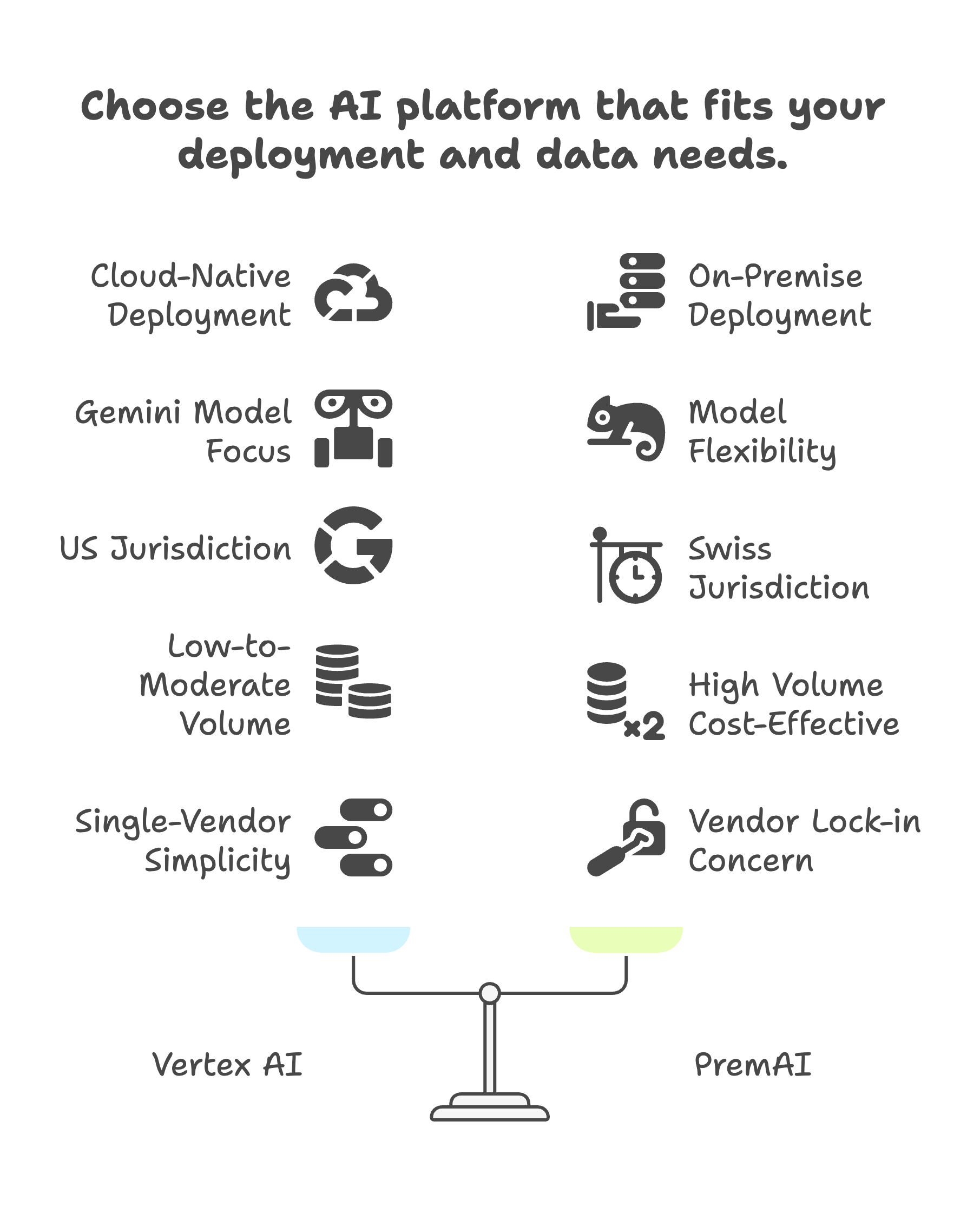

When to Choose Each

Choose Vertex AI if:

- You’re a GCP shop with existing infrastructure and skills

- You need Gemini models specifically (1M token context, specific capabilities)

- ML tooling matters (AutoML, Pipelines, Studio)

- BigQuery integration is valuable

- On-prem is NOT a requirement

- US jurisdiction is acceptable

- Volume is low-to-moderate

- You want single-vendor simplicity with Google

Choose PremAI if:

- On-premise deployment is required

- Air-gapped deployment is required

- Multi-cloud or cloud-agnostic strategy

- Data sovereignty is critical (not just residency)

- High volume makes self-hosting cost-effective

- Model flexibility needed (not locked to Gemini)

- Training data can’t leave your infrastructure

- Vendor lock-in is a concern

- Swiss jurisdiction fits better than US

The reality:

Vertex AI is excellent for cloud-native GCP teams who don’t need on-prem. It has strong ML tooling, good model selection, and deep Google ecosystem integration.

PremAI fills the gap Vertex cannot fill: true on-premise AI infrastructure with the same capabilities.

Both are legitimate choices. Match to your requirements.

Making the Decision

Google Vertex AI offers excellent ML tooling, Gemini models with industry-leading context length, and deep GCP integration. For cloud-native teams on Google Cloud with no on-prem requirements, it’s worth evaluating.

But Google offers no on-premise option.

If your requirements include:

- On-premise deployment

- Air-gapped environments

- Data sovereignty (not just residency)

- Cloud-agnostic architecture

- Control over training data

- Cost efficiency at scale

Then you need an alternative.

PremAI provides what Vertex cannot: AI infrastructure that runs where you need it, not just where Google hosts it.

Book a technical call to discuss your architecture requirements.

FAQs

Q: Can I run Gemini on-premise through any Google offering?

No. There is no on-premise path for Gemini. Google Distributed Cloud and Anthos provide on-prem Kubernetes but do not include Vertex AI services or Gemini model access.

Q: Is Model Garden an alternative to on-prem deployment?

Model Garden provides access to 150+ models, including Llama and Mistral. But deployment is to GCP infrastructure, not your infrastructure. It doesn’t solve the on-prem requirement.

Q: How does Vertex AI’s 1M token context compare to open models?

Gemini 1.5 Pro’s 1M token context is industry-leading. Open models typically max out at 128K (Llama 3.3, Mistral). For very long document processing, Gemini has a unique advantage. For most enterprise use cases, 128K is sufficient.

Q: Can I use Vertex for training and self-host for inference?

Technically yes, but you lose integration benefits and your training data still goes to Google. Consider whether this hybrid approach actually solves your requirements.

Q: What’s the total cost of ownership comparison?

At low volume: Vertex is cheaper considering ops overhead.

At high volume (>2M tokens/day): Self-hosted is 50-70% cheaper.

Factor in team capabilities, infrastructure availability, and opportunity cost of setup time.

Q: Does PremAI support Gemini access?

Yes, through API integration. You can use Gemini via Google’s API while using PremAI for other models or on-prem workloads. This hybrid approach captures Gemini’s unique capabilities while keeping other workloads private.

Q: Is Anthos a path to on-prem Vertex AI?

No. Anthos provides GKE (Kubernetes) on-premises. It does not include Vertex AI services. You can run containers on Anthos, but not Vertex AI capabilities.

Q: How do BigQuery ML capabilities compare?

BigQuery ML is genuinely useful for GCP shops. Running ML directly on your data warehouse without moving data is valuable. PremAI doesn’t replicate this. If BigQuery ML is critical, factor that into your decision.

Q: What about latency for real-time applications?

Self-hosted can achieve lower latency because you control infrastructure placement. Vertex AI latency depends on region and load. For latency-sensitive applications (trading, real-time recommendations), test both approaches.

Q: Can I migrate from Vertex AI to self-hosted later?

Yes, if you use open models from Model Garden and maintain abstraction layers. If you’re using Gemini specifically, migration means switching models. Test alternatives before committing.

Further reading: On-Premise AI Architecture | AI Data Residency Requirements | Private LLM Deployment | Prem Studio