PrivateGPT vs Prem AI: Which Private AI Platform Is Enterprise-Ready? (2026)

Compare PrivateGPT and Prem AI for enterprise private AI deployment. Covers fine-tuning, RAG capabilities, compliance certifications, pricing, and deployment options for 2026.

PrivateGPT and Prem AI both promise private AI that keeps data under your control. But they solve different problems. PrivateGPT is an open-source RAG pipeline for document Q&A.

Prem AI is an enterprise platform for fine-tuning, evaluating, and deploying custom models. The right choice depends on whether you need to query existing documents or build AI models trained on your proprietary data.

According to recent enterprise surveys, data privacy concerns now drive 92% of AI infrastructure decisions. Both platforms address this by keeping data local.

The similarity ends there. PrivateGPT launched in May 2023 and has accumulated 57,000+ GitHub stars. Prem AI raised $19.5M and built compliance certifications (SOC 2, HIPAA, GDPR) into the platform from day one.

This comparison breaks down what each platform actually does, where they overlap, and which fits different enterprise requirements.

Quick Comparison: PrivateGPT vs Prem AI

| Feature | PrivateGPT | Prem AI |
|---|---|---|
| Primary Function | Document Q&A via RAG | Fine-tuning + deployment platform |
| License | Apache 2.0 (open source) | Commercial (enterprise) |
| Fine-Tuning | Not included | 30+ base models, autonomous fine-tuning |
| RAG Pipeline | Core feature (LlamaIndex-based) | Available, but not primary focus |
| Model Selection | BYO via Ollama, vLLM, OpenAI | 30+ base models, including Mistral, Llama, Qwen, and Gemma |
| Compliance | None built-in | SOC 2, HIPAA, GDPR, Swiss FADP |
| Enterprise Support | Via Zylon (commercial arm) | Included |
| Deployment | Self-hosted (Docker, bare metal) | Self-hosted, AWS VPC, on-premise |
| Pricing | Free (open source) | Usage-based via AWS Marketplace |
| Funding | Zylon: $3.2M Pre-Seed | $19.5M total raised |
| Best For | Document Q&A, developers, prototyping | Enterprise AI customization, regulated industries |

What Is PrivateGPT?

PrivateGPT is an open-source project that lets you chat with your documents using local LLMs. No data leaves your machine.

The project has hit #1 on GitHub trending multiple times since its May 2023 launch and now has 57,000+ stars and 7,600+ forks.

The architecture wraps a RAG (Retrieval-Augmented Generation) pipeline with a FastAPI backend and a Gradio UI. You ingest documents, the system chunks and embeds them into a vector database, and then you query in natural language. The retriever finds the relevant chunks, and the LLM generates responses grounded in your documents.
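The ingest-then-query flow can be sketched in a few lines. This is a toy illustration under loud assumptions, not PrivateGPT's code: word-count vectors stand in for a real embedding model, an in-memory list stands in for the vector database, and the names `chunk_text`, `embed`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 12) -> list[str]:
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real pipeline uses a neural model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Ground the LLM by placing retrieved chunks ahead of the question."""
    context = "\n".join(chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Ingest: chunk each document and store (chunk, embedding) pairs.
documents = [
    "Invoices are due within 30 days of receipt.",
    "Support tickets are answered within one business day.",
]
index = [(chunk, embed(chunk)) for doc in documents for chunk in chunk_text(doc)]

# Query: retrieve the best chunk, then build the prompt an LLM would receive.
question = "When are invoices due?"
top_chunks = retrieve(question, index)
prompt = build_prompt(question, top_chunks)
```

The point of the sketch is the division of labor: retrieval picks the chunks, and the model only sees what retrieval hands it.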

Core capabilities:

Document ingestion handles PDF, Word, plain text, and other formats. The system parses, splits, and generates embeddings automatically.

OpenAI-compatible API means existing code works with minimal changes. You can point an application at PrivateGPT instead of OpenAI endpoints without rewriting it.

LlamaIndex foundation provides the RAG abstractions. This makes it relatively easy to customize retrieval strategies, chunk sizes, and embedding models.

Multiple LLM backends are supported: Ollama, llama.cpp, vLLM, OpenAI, Azure OpenAI, Gemini, and SageMaker. Pick whatever fits your infrastructure.
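As a rough sketch of what the OpenAI-compatible API looks like from client code, the snippet below builds a chat request with the Python standard library. The address `http://localhost:8001`, the `/v1/chat/completions` path, and the `use_context` and `include_sources` fields are assumptions based on PrivateGPT's published API and may differ in your version; the helper names are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8001"  # assumed local PrivateGPT address


def build_chat_request(question: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "messages": [{"role": "user", "content": question}],
        "use_context": True,      # assumed flag: answer from ingested documents
        "include_sources": True,  # assumed flag: return the chunks that were used
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question: str) -> str:
    """Send the request to a running server and return the answer text."""
    with urllib.request.urlopen(build_chat_request(question), timeout=60) as resp:
        body = json.load(resp)
    # OpenAI-style response shape: first choice, assistant message content.
    return body["choices"][0]["message"]["content"]


request = build_chat_request("What is our refund policy?")
```

Calling `ask(...)` requires a running PrivateGPT instance with documents already ingested. Building the request separately makes it easy to inspect the payload before anything leaves your process.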

Deployment options:

PrivateGPT runs anywhere you can run Python and Docker. The project includes Dockerfiles for CPU-only and GPU-accelerated setups. A 2025 community survey found 64% of successful implementations use dedicated GPU acceleration while 36% run CPU-only with longer processing times.

The Zylon connection:

Zylon is the commercial company behind PrivateGPT. They raised $3.2M in Pre-Seed funding led by Felicis in early 2024. Zylon offers an enterprise-ready version with managed deployment, collaboration features, and support. The open-source project remains free and actively maintained.

What Is Prem AI?

Prem AI is a Swiss-based enterprise platform for building custom AI models. Where PrivateGPT focuses on RAG, Prem AI focuses on fine-tuning. You upload your data, train models on your specific use case, and deploy them to your infrastructure.

The company raised $19.5M total ($14M Strategic Seed in April 2024, $5.51M additional in November 2025). CEO Simone Giacomelli previously co-founded SingularityNET, and advisor David Maisel founded Marvel Studios. The platform processes 10M+ documents securely across 15+ enterprise clients.

Core capabilities:

Prem Studio is the main product. It covers the full model lifecycle:

- Datasets module for data upload, PII redaction, and synthetic data augmentation

- Fine-tuning across 30+ base models including Mistral, Llama, Qwen, and Gemma

- Autonomous fine-tuning system that runs up to 6 concurrent experiments

- LLM-as-a-judge evaluation with side-by-side model comparisons

- One-click deployment to AWS VPC or on-premise infrastructure

The fine-tuning difference:

This is the key distinction. PrivateGPT queries documents using an existing model. Prem AI trains models on your data. A fine-tuned model learns your terminology, understands your workflows, and generates responses tailored to your domain.

For example: A law firm using PrivateGPT can query their contract library. The same firm using Prem AI can train a model that writes contracts in their specific style, understands their clause preferences, and knows their client terminology.

Compliance architecture:

Prem AI built compliance into the platform from the start:

- SOC 2 Type II certified

- HIPAA compliant

- GDPR compliant

- Swiss jurisdiction under Federal Act on Data Protection (FADP)

- Cryptographic proofs for every interaction

- Zero data retention

- Hardware-signed attestations for privacy auditing

Detailed Feature Comparison

Document Handling and RAG

PrivateGPT excels here. RAG is its entire purpose. The LlamaIndex-based pipeline handles ingestion, chunking, embedding, storage, and retrieval. You get query modes (single question), chat modes (conversational), and chunk retrieval for advanced use cases. Vector database options include Qdrant (default), Postgres, Chroma, Milvus, and ClickHouse.

A 2024 security audit found 23% of PrivateGPT deployments had misconfigured access controls. This matters because open-source means you handle security yourself.

Prem AI supports RAG but positions it as one component of a larger platform. You can build RAG applications using fine-tuned models, combining retrieval with domain-specific model knowledge. The approach is: fine-tune first for baseline performance, then add RAG for current documents.

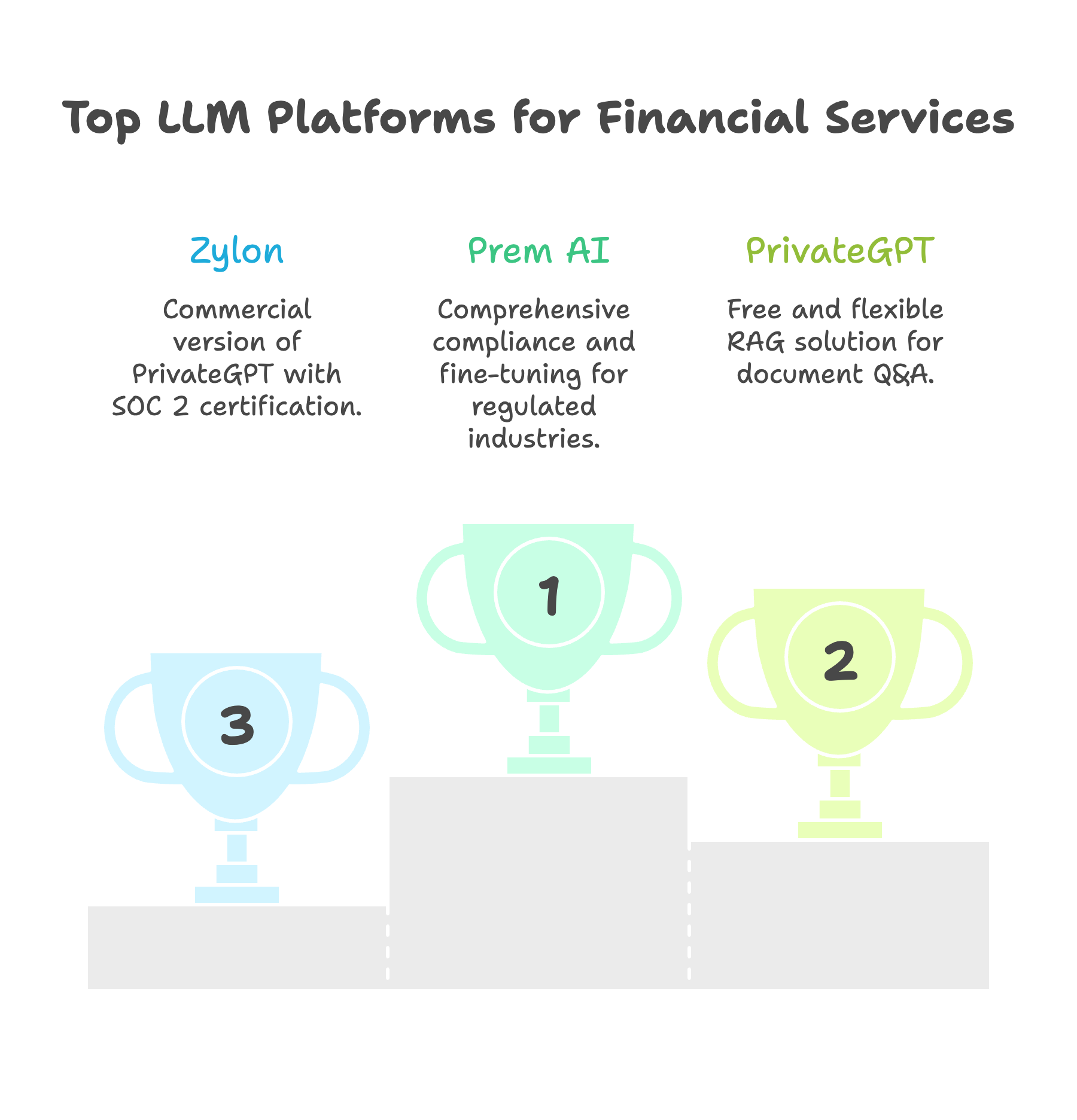

Winner for RAG-only use cases: PrivateGPT. It's purpose-built for document Q&A and free.

Fine-Tuning and Model Customization

PrivateGPT does not include fine-tuning. You use whatever models are available through Ollama, Hugging Face, or other sources. If you need a custom model, you build that pipeline separately.

Prem AI is built around fine-tuning. The autonomous fine-tuning system analyzes your data, recommends base models, and runs experiments automatically. You upload data, configure parameters through a UI, and the platform handles distributed training, hyperparameter optimization, and evaluation.

Key fine-tuning features:

- LoRA and full fine-tuning options

- Synthetic data augmentation when you have limited training examples

- Up to 6 concurrent experiments per job

- Model checkpoints downloadable in standard formats (ZIP with full weights)

- Support for GGUF and SafeTensors export
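To make the LoRA versus full fine-tuning choice concrete: LoRA freezes the original weight matrix and trains two small low-rank matrices instead, so the trainable parameter count drops sharply. The sketch below works through the arithmetic for one layer. The 4096x4096 layer size and rank 8 are illustrative assumptions, not Prem AI settings.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Full fine-tuning updates every entry of the d_out x d_in weight matrix."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA trains B (d_out x rank) and A (rank x d_in); the base matrix stays frozen."""
    return rank * (d_out + d_in)


# Hypothetical single projection layer and LoRA rank.
D_OUT, D_IN, RANK = 4096, 4096, 8

full = full_finetune_params(D_OUT, D_IN)
lora = lora_params(D_OUT, D_IN, RANK)
fraction = lora / full

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {RANK}): {lora:,} trainable parameters")
print(f"LoRA trains {fraction:.2%} of the layer")
```

For this hypothetical layer, LoRA trains 1/256 of the parameters. That is why it fits on smaller GPUs, while full fine-tuning remains the option when the adapter's capacity is not enough.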

The Prem-1B-SQL model demonstrates this approach. Prem AI fine-tuned a 1.3B parameter DeepSeek model on 50K synthetic samples for Text-to-SQL tasks. The model has 10K+ monthly downloads on Hugging Face and competes with models 50x its size.

Winner for fine-tuning: Prem AI. PrivateGPT doesn't compete here.

Enterprise Compliance

PrivateGPT is open source with no built-in compliance. You configure everything yourself. The project provides the tools but not the certifications. Your security team must implement access controls, audit logging, and encryption according to your requirements.

For enterprises needing compliance, Zylon offers a commercial version with SOC 2 certification and enterprise support. Pricing requires a demo call.

Prem AI comes with compliance baked in:

- SOC 2 Type II: Annual third-party audits verify security controls

- HIPAA: Business Associate Agreement available, platform designed for PHI

- GDPR: Data processing agreements, right to deletion, residency controls

- Swiss FADP: Stronger privacy protections than US jurisdiction

The cryptographic verification approach ("don't trust, verify") provides hardware-signed attestations for every interaction. This creates an auditable trail that compliance teams can use for regulatory requirements.

Winner for regulated industries: Prem AI. The compliance infrastructure is substantial.

Deployment Flexibility

PrivateGPT deploys anywhere you can run containers. The project includes Docker configurations for various setups. You manage infrastructure, scaling, and monitoring. This flexibility appeals to teams with existing DevOps capabilities.

Prem AI offers multiple deployment paths:

- AWS VPC deployment with data isolation

- On-premise installation for complete data control

- AWS Marketplace for simplified procurement

- Air-gapped deployment for disconnected environments

The platform includes a Kubernetes operator (prem-operator) for enterprise-scale deployments. vLLM, Ollama, and NVIDIA NIM integration options exist for inference.

Winner: Depends on your team. PrivateGPT gives maximum flexibility. Prem AI provides managed enterprise infrastructure.

Pricing and Total Cost

PrivateGPT is free. Apache 2.0 license. Download, deploy, done. Your costs are:

- Infrastructure (GPUs, servers, storage)

- Engineering time for setup and maintenance

- Security implementation and compliance work

A rough estimate: Running a 7B model locally costs about $10K/year in infrastructure (H100 spot pricing at $1.65/hour, 70% utilization). Add engineering time for setup, monitoring, and security.
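The rough estimate can be reproduced directly from the two figures quoted, the $1.65 hourly rate and 70% utilization. The only added assumption is a 365-day year billed around the clock.

```python
HOURLY_RATE_USD = 1.65     # H100 spot price quoted above
UTILIZATION = 0.70         # share of hours the GPU is actually running
HOURS_PER_YEAR = 365 * 24  # assumption: non-leap year, 24/7 availability

billed_hours = HOURS_PER_YEAR * UTILIZATION
annual_cost = HOURLY_RATE_USD * billed_hours

print(f"billed hours per year: {billed_hours:,.0f}")
print(f"annual GPU cost: ${annual_cost:,.0f}")
```

This covers a single GPU only. Storage, networking, and the engineering time mentioned above come on top.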

Prem AI uses usage-based pricing through AWS Marketplace. Enterprise tiers include custom support, reserved compute, and volume discounts. No public pricing page. Contact sales for quotes.

The ROI argument: Organizations processing 500M+ tokens monthly typically hit breakeven between cloud APIs and self-hosted deployment within 12-18 months. After that, cost reduction of 50-70% is common.
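A break-even estimate of this kind comes down to one division: upfront cost over monthly savings. The sketch below shows the shape of the calculation. Every input is a placeholder chosen only to make the arithmetic concrete (the per-token price, the self-hosted running cost, and the upfront cost are not vendor figures), so substitute your own numbers.

```python
from typing import Optional


def breakeven_months(upfront_cost: float, api_monthly: float,
                     self_hosted_monthly: float) -> Optional[float]:
    """Months until cumulative savings repay the upfront cost.

    Returns None when self-hosting is not cheaper per month,
    since break-even is then never reached.
    """
    monthly_savings = api_monthly - self_hosted_monthly
    if monthly_savings <= 0:
        return None
    return upfront_cost / monthly_savings


# Placeholder inputs, not real prices.
TOKENS_PER_MONTH = 500_000_000
API_PRICE_PER_MILLION_USD = 10.0  # hypothetical blended cloud API price
SELF_HOSTED_MONTHLY_USD = 1_000.0  # hypothetical GPU and operations cost
UPFRONT_COST_USD = 60_000.0        # hypothetical setup and engineering cost

api_monthly = TOKENS_PER_MONTH / 1_000_000 * API_PRICE_PER_MILLION_USD
months = breakeven_months(UPFRONT_COST_USD, api_monthly, SELF_HOSTED_MONTHLY_USD)
print(f"cloud API cost per month: ${api_monthly:,.0f}")
print(f"break-even after {months:.1f} months")
```

The result is very sensitive to the per-token price and the upfront engineering cost. Model a few scenarios before relying on any single break-even figure.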

Winner for cost-conscious prototyping: PrivateGPT.

Winner for enterprise TCO: Depends on volume and compliance requirements.

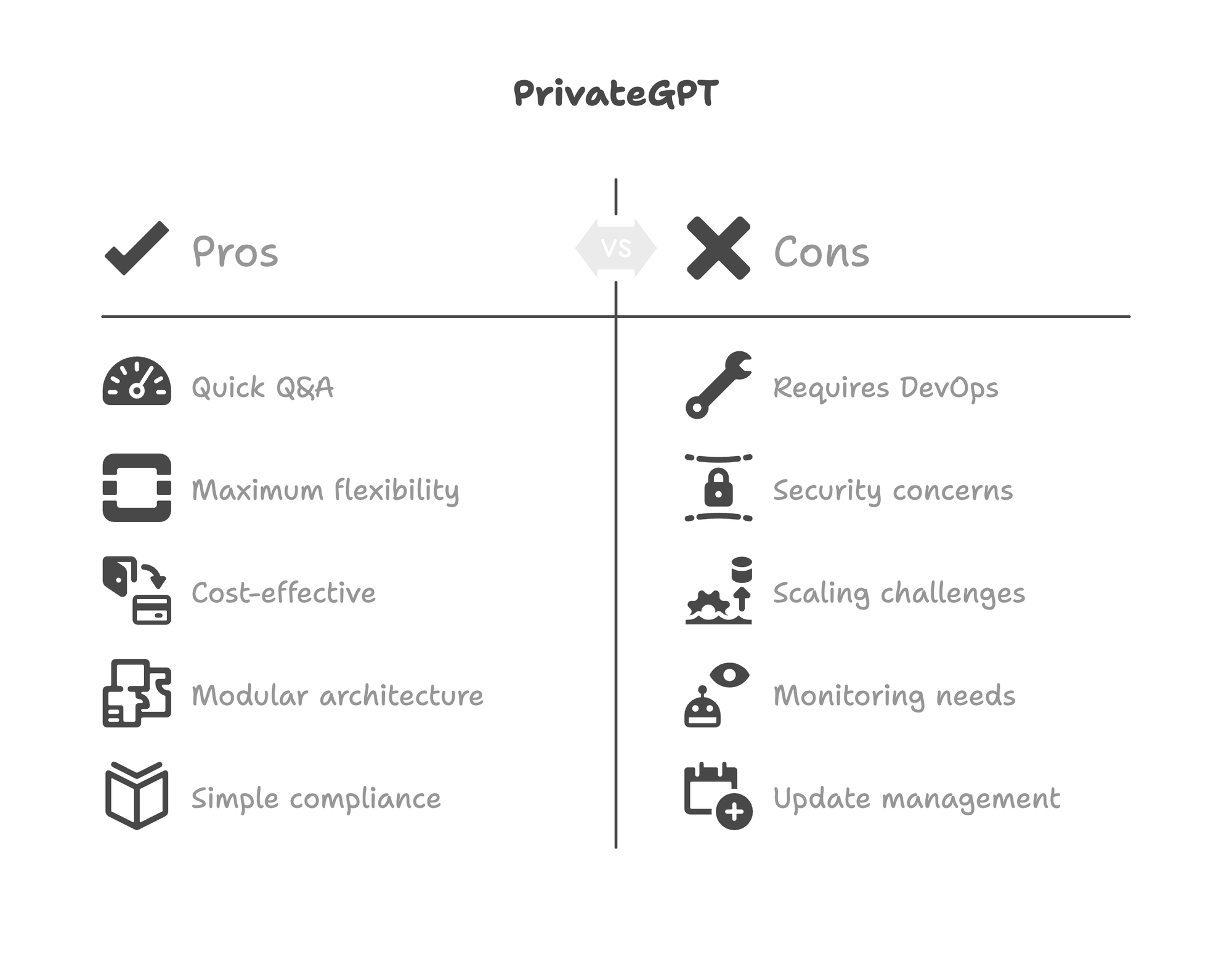

When to Choose PrivateGPT

PrivateGPT fits specific scenarios:

You need document Q&A without model customization. If your goal is querying existing documents and generic models work well enough, PrivateGPT delivers this quickly. Upload documents, ask questions, get answers grounded in your content.

Your team has strong DevOps capabilities. Open source means managing everything yourself: security, scaling, monitoring, and updates. If you have the team for this, you also get maximum flexibility.

Budget constraints prevent commercial solutions. Free matters. For startups, research teams, or proof-of-concept work, PrivateGPT removes cost barriers.

You're building a larger system and need components. PrivateGPT's modular architecture lets you plug pieces into custom pipelines. The LlamaIndex abstractions make it easy to swap components.

Compliance is not a current requirement. If your data isn't regulated and you don't need audit trails, open source simplicity wins.

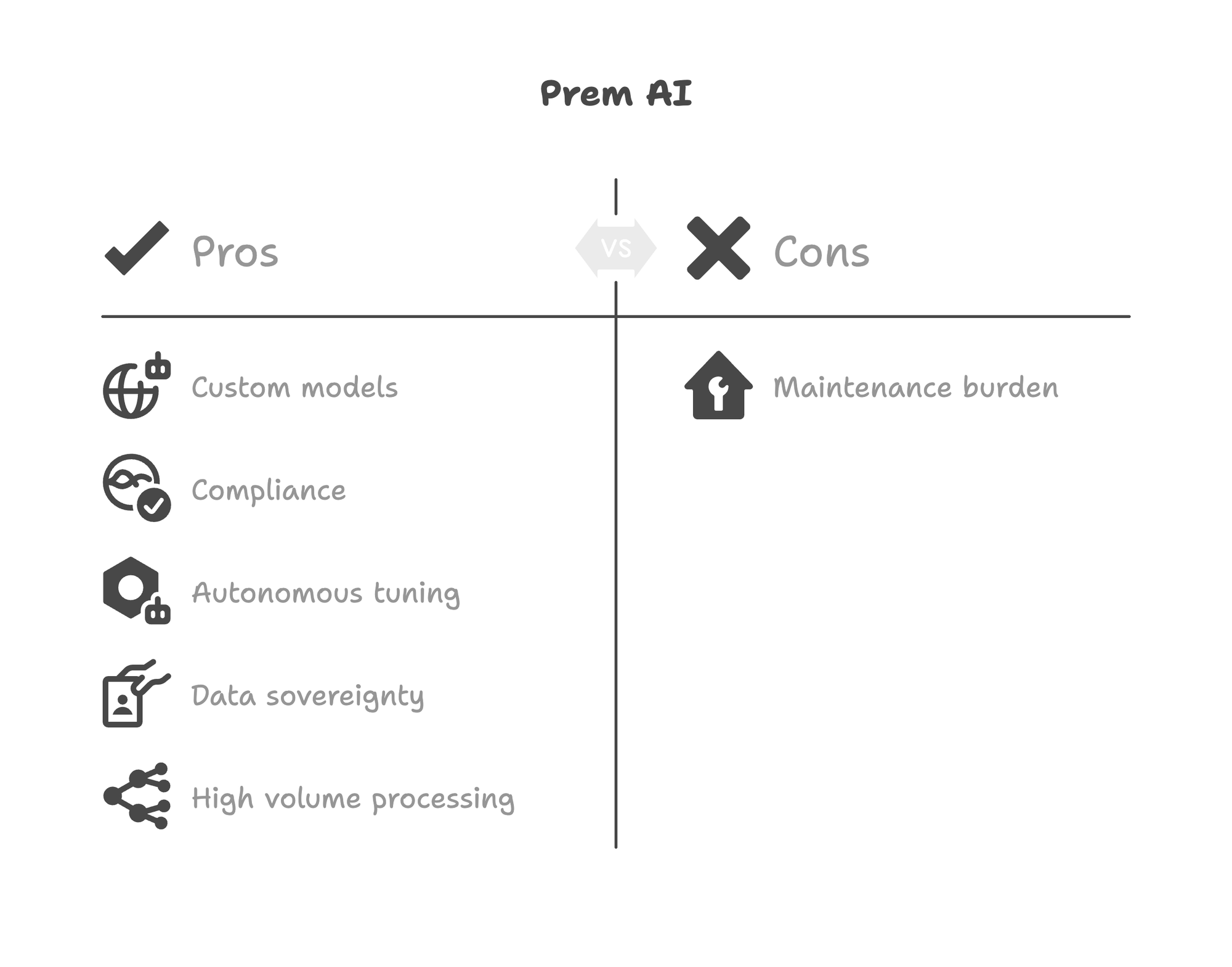

When to Choose Prem AI

Prem AI fits different scenarios:

You need custom models trained on proprietary data. Fine-tuning creates models that understand your specific domain. This produces better results than RAG alone for many enterprise applications.

Compliance is mandatory. SOC 2, HIPAA, or GDPR requirements mean you need a platform with certifications. Building this yourself is expensive and slow.

You lack dedicated ML engineering resources. The autonomous fine-tuning system handles what would otherwise require specialized expertise. Data scientists can focus on use cases rather than infrastructure.

Data sovereignty is critical. Swiss jurisdiction, cryptographic verification, and zero retention architecture provide stronger privacy guarantees than US-based alternatives.

You're processing high volumes. At scale, the economics favor owned infrastructure. Prem AI handles enterprise volumes while maintaining performance (the company claims sub-100ms latency and 99.98% uptime).

You need production support. Enterprise support, SLAs, and managed updates matter when AI is business-critical.

Can You Use Both?

Yes. Some organizations combine both platforms:

Prototyping with PrivateGPT, production with Prem AI. Test document Q&A concepts quickly with PrivateGPT. When ready for production, move to Prem AI for compliance and scale.

RAG pipeline on PrivateGPT, custom models on Prem AI. Use PrivateGPT for general document queries. Use Prem AI for specialized tasks requiring fine-tuned models.

Different teams, different needs. Developer teams might prototype with PrivateGPT while enterprise deployments run on Prem AI.

The key insight: These platforms solve different problems. PrivateGPT queries documents. Prem AI builds custom models. Overlap exists, but primary use cases differ.

Real-World Deployment Considerations

Before choosing either platform, evaluate these factors:

Hardware requirements matter. A 2025 survey found 43% of PrivateGPT performance issues stemmed from improper environment configuration rather than hardware limitations. Both platforms need appropriate GPU resources for production workloads.

Fine-tuning changes the game. A fine-tuned 7B model often outperforms a generic 70B model on domain-specific tasks. This affects how you think about model selection and infrastructure sizing.

Security configuration is your responsibility. Even with Prem AI's compliance certifications, proper implementation matters. No platform makes you HIPAA compliant automatically. Configuration, training, and process must align with regulatory requirements.

Exit strategy planning. PrivateGPT uses standard formats and open-source components. Prem AI exports models in GGUF and SafeTensors formats. Both avoid lock-in, but verify export capabilities for your specific use case.

Frequently Asked Questions

Is PrivateGPT truly private?

Yes, when properly configured. All processing happens locally. No data is sent to external servers unless you configure OpenAI or other cloud backends. The Apache 2.0 license lets you audit the code. However, security depends on your implementation. Misconfigured deployments can expose data.

Can Prem AI replace PrivateGPT for document Q&A?

Yes, but it's not the primary use case. Prem AI can build RAG applications, but the platform optimizes for fine-tuning workflows. If document Q&A is your only need and you don't require compliance, PrivateGPT is simpler and free.

What's the learning curve for each platform?

PrivateGPT requires Python knowledge, Docker familiarity, and DevOps skills for production deployment. Prem AI abstracts much of this complexity through a UI-driven workflow. Non-ML teams often find Prem AI more accessible for production use.

How do they handle sensitive data differently?

PrivateGPT keeps data local but provides no built-in PII handling. You implement data protection yourself. Prem AI includes automatic PII redaction at the dataset level, stripping sensitive information before training data touches models. Zero data retention architecture means nothing persists after processing.
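For teams that implement data protection themselves, a do-it-yourself redaction pass before ingestion might start like the sketch below. It is a minimal, regex-only illustration that catches email addresses and one common phone number format. It is not Prem AI's redaction logic, and the patterns and the `redact` helper are hypothetical.

```python
import re

# Simple patterns; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


sample = "Contact Jane at jane.doe@example.com or 555-123-4567."
redacted = redact(sample)
print(redacted)
```

The name "Jane" survives this pass. Names, addresses, and ID numbers usually need entity recognition rather than regular expressions, which is part of the work a self-managed deployment takes on.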

Which has better model quality?

Different question. PrivateGPT uses whatever models you provide. Quality depends on your model selection. Prem AI fine-tunes models specifically for your use case, potentially achieving better domain-specific performance. For generic tasks, quality is comparable. For specialized tasks, fine-tuning typically wins.

Conclusion

PrivateGPT and Prem AI serve different purposes despite both addressing data privacy.

Choose PrivateGPT if you need document Q&A, have DevOps capabilities, and want free open-source software. It's the fastest path to private document chat for developers and teams with technical depth.

Choose Prem AI if you need custom models, require compliance certifications, or lack dedicated ML engineering. The platform handles fine-tuning complexity while providing enterprise-grade security and support.

Many organizations will use PrivateGPT for prototyping and Prem AI for production. Others will commit to one platform based on their primary use case. The worst choice is picking based on marketing rather than requirements. Match the tool to your actual workflow.

For teams evaluating private AI platforms, start with the fundamental question: Do you need to query existing documents or build custom models? Answer that first. Platform selection follows naturally.
