SLM vs. LLM: The Enterprise Decision Guide With Real Cost Data and Benchmarks

Fine-tuned SLMs beat GPT-4 on 85% of classification tasks. See the benchmarks, cost data, and decision framework for choosing between small and large language models.

A 1.3 billion parameter model matching GPT-4 on text-to-SQL benchmarks. A fine-tuned 7B model beating ChatGPT on tool-calling by 3x. A healthcare NLP system hitting 96% accuracy where GPT-4o manages 79%.

These aren’t cherry-picked outliers.

Research across multiple studies found that fine-tuned small language models outperform zero-shot GPT-4 on the majority of classification tasks tested.

The LoRA Land study (arXiv:2405.00732) tested 310 fine-tuned models across 31 tasks and found they beat GPT-4 on roughly 25 of 31 tasks, with an average improvement of 10 points. Separate research from Predibase's Fine-tuning Index showed improvements of 25-50% on specialized tasks.

But here's what the hype articles don't tell you: Air Canada's chatbot invented a refund policy that didn't exist and cost the company $650.88 in a tribunal ruling. Amazon's Rufus AI shopping assistant matches the best product only about 32% of the time, according to industry analysis. Apple's GSM-Symbolic research found that language models experience "complete accuracy collapse" beyond certain complexity thresholds.

Small models work brilliantly for some tasks. They fail catastrophically for others.

This guide gives you the benchmarks, cost data, and decision framework to know the difference.

The Real Difference Between SLMs and LLMs

Small Language Models typically have 100 million to 7 billion parameters. Large Language Models range from tens of billions to over a trillion.

But parameter count alone doesn’t determine capability.

What matters is how well the model fits your specific task. A 1.3B model fine-tuned on your data can outperform a 175B general-purpose model on that exact task. The reverse is also true: that same 1.3B model will fail miserably on tasks outside its training distribution.

The practical differences come down to four things:

Cost. GPT-4o costs roughly $4-5 per million tokens at a realistic blended rate (input: $2.50/M, output: $10.00/M; exact blend depends on your input/output ratio). Mistral 7B via API costs $0.04. Self-hosted, the gap widens to 100x or more.

Speed. Edge-deployed SLMs respond in 10-50 milliseconds. Cloud LLMs take 300-2000ms for first token. For real-time applications, that's the difference between "instant" and "noticeable delay."

Capability. LLMs excel at broad reasoning, novel problems, and general knowledge. SLMs excel at specific, well-defined tasks where you have training data.

Control. SLMs can run on-premise, air-gapped, on edge devices. Most LLMs require cloud APIs and sending your data to third parties.

The question isn’t which is “better.” It’s which fits your constraints.

Where SLMs Actually Beat LLMs (With Numbers)

Classification and Extraction

This is where SLMs consistently win.

Research across multiple domains shows:

- Fine-tuned small models outperform GPT-4 on 80% of classification tasks

- Separate research confirms fine-tuned models "consistently and significantly outperform" zero-shot GPT-4 and Claude Opus on all classification tasks tested

- Specialized models need only ~100 training samples to match large model performance

Note: Many of these studies compare fine-tuned encoder models (BERT-style) against zero-shot generative models. The comparison is fair for classification workloads but doesn't apply to generative tasks like open-ended chat.

Healthcare example from recent research:

| Model | PHI Detection F1-Score |

|---|---|

| Healthcare NLP (fine-tuned SLM) | 96% |

| GPT-4o (zero-shot) | 79% |

GPT-4o missed 14.6% of protected health information entities. The specialized SLM missed 0.9%.

For GDPR-compliant AI systems in healthcare, that accuracy gap is the difference between compliance and violation.

Tool Calling and Agentic Tasks

Research on SLM tool-calling shows surprising results:

| Approach | Pass Rate |

|---|---|

| Fine-tuned SLM | 77.55% |

| ToolLLaMA-DFS | 30.18% |

| ChatGPT-CoT | 26.00% |

A fine-tuned small model beats ChatGPT by 3x on tool-calling accuracy. This matters because agentic workflows depend on reliable function execution.

Text-to-SQL

Prem-1B-SQL, a 1.3B parameter model, achieves 51.54% on BirdBench. GPT-4 (2023) achieves 54.89%. Claude 2 achieves 49.02%.

A model 50x smaller performs within 3 points of GPT-4 on a complex structured task.

The pattern is clear: for specific, well-defined tasks with available training data, fine-tuned SLMs match or exceed general-purpose LLMs.

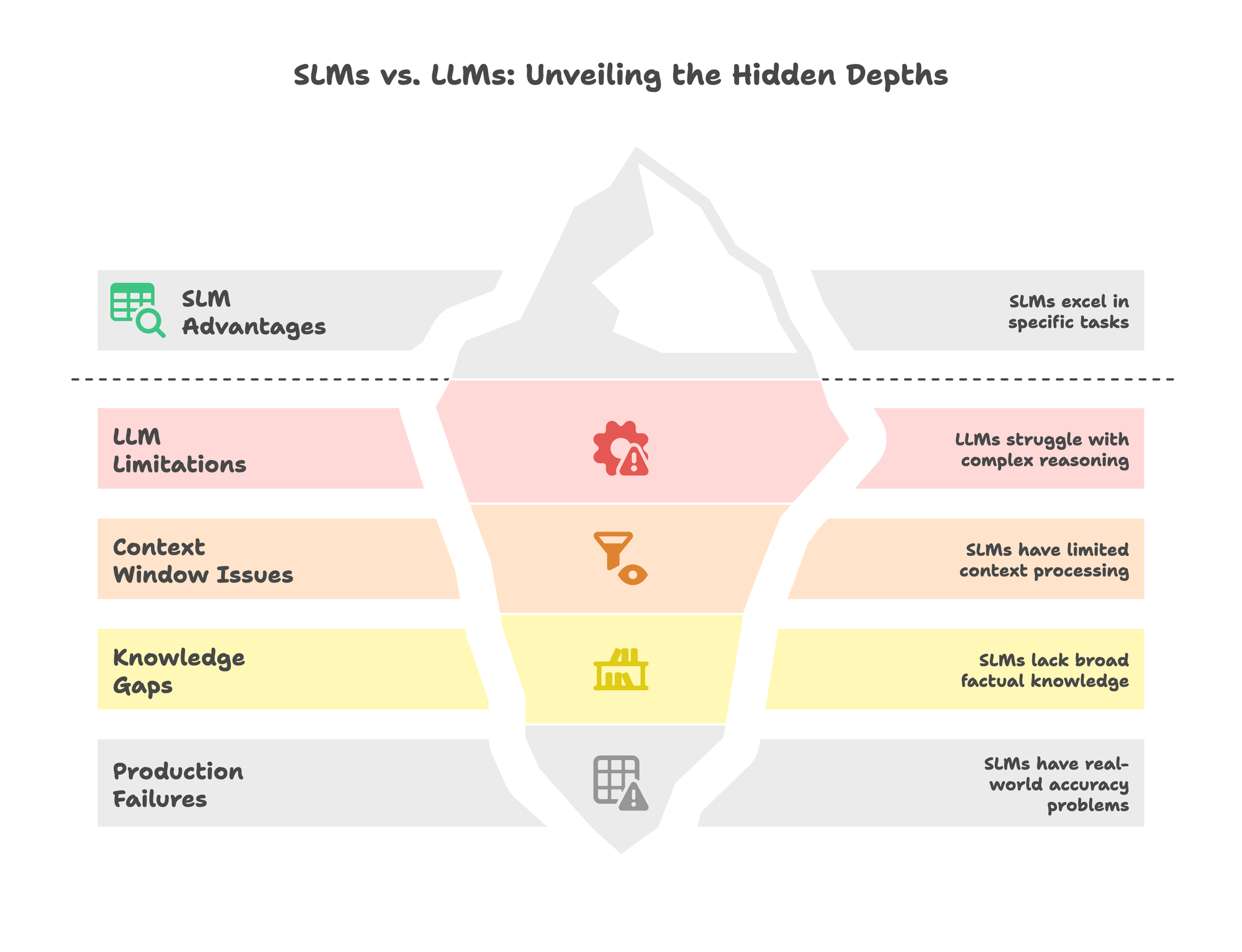

Where LLMs Still Win (And SLMs Fail Hard)

Here’s where the honest conversation happens. SLMs have real limitations that no amount of fine-tuning fixes.

Multi-Step Reasoning Collapse

Apple's "GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models" research identified three performance regimes:

- Low complexity: Standard models surprisingly outperform reasoning models

- Medium complexity: Larger models show advantage through additional thinking

- High complexity: Both model types experience complete collapse

The study found that reasoning effort increases with problem complexity up to a point, then declines despite adequate token budget. Models fail to use explicit algorithms and reason inconsistently across similar puzzles.

This isn’t a fine-tuning problem. It’s an architectural limitation.

The “Lost in the Middle” Problem

Stanford research discovered that model performance degrades by more than 30% when relevant information shifts from the start or end of context to the middle.

Models exhibit a U-shaped performance curve: best at the beginning (primacy bias) and end (recency bias), worst in the middle.

For legal document processing, this matters. The longest contracts exceed 300,000 characters. SLMs with 4K-8K context windows can’t process them without chunking. Chunking introduces cross-reference loss. A provision severed from the clauses it depends on is like a contract missing its exhibits.

Factual Knowledge Limitations

The Phi-3 technical report acknowledges that smaller models perform “relatively lower on benchmarks related to factual knowledge” like TriviaQA.

The reason is straightforward: you can’t pack the same amount of knowledge into fewer parameters. SLMs trade breadth for depth.

Real Production Failures

Air Canada’s chatbot invented a bereavement fare refund policy that didn’t exist. A customer relied on it, bought full-price tickets, and the British Columbia Civil Resolution Tribunal ruled the airline liable for $650.88 in damages. The court rejected Air Canada’s argument that the chatbot was a “separate legal entity.”

Amazon’s Rufus AI shopping assistant achieves only 32% accuracy on product recommendations. 83% of its recommendations are self-serving. It lists irrelevant products and fails to identify cheapest options when asked. Estimated operating loss: $285 million in 2024.

Financial compliance chatbots may confidently cite non-existent regulations. Even after fine-tuning, accuracy doesn’t always meet enterprise requirements for regulatory tasks.

The failure pattern: SLMs (and LLMs) struggle with open-ended scenarios, novel queries outside training distribution, and tasks requiring judgment calls they weren’t explicitly trained to make.

The Cost Reality

API Pricing Comparison

| Model | Cost per 1M Tokens (Blended) |

|---|---|

| GPT-4o | ~$6.25 |

| Claude Sonnet 4.5 | ~$9.00 |

| GPT-4o-mini | ~$0.38 |

| Llama 4 Scout (API) | ~$0.20 |

| Mistral 7B (API) | ~$0.04 |

Source: OpenAI Pricing, Together.ai

The gap between GPT-4o and Mistral 7B is over 150x at the blended rate. At scale, that difference becomes significant.

Total Cost by Volume

| Monthly Volume | GPT-4o API | Self-Hosted 7B | Savings |

|---|---|---|---|

| 1M tokens | $6.25 | Not viable | None |

| 10M tokens | $62.50 | ~$50 | 20% |

| 100M tokens | $625 | ~$80 | 87% |

| 1B tokens | $6,250 | ~$200 | 97% |

The break-even point for self-hosting typically falls around 2 million tokens per day. Below that, API convenience wins. Above it, infrastructure investment pays off within 3-6 months.

Hardware Reality Check

| Model Size | VRAM (4-bit quantized) | Example GPU | Cost |

|---|---|---|---|

| 3B | ~1.5 GB | RTX 3060 | ~$300 |

| 7B | ~3.5 GB | RTX 4060 Ti | ~$450 |

| 13B | ~6.5 GB | RTX 4090 | ~$1,500 |

A $1,500 GPU runs a quantized 13B model comfortably. Compare that to $25,000+ for H100s required by 70B+ models.

For teams processing sensitive data, the economics shift further. Compliance requirements often mandate on-premise deployment regardless of volume. Private LLM deployment becomes a functional requirement, not just a cost optimization.

How Companies Actually Use SLMs

Financial Services

- JPMorgan Chase deployed a model suite used by 200,000+ employees across 100+ AI-driven tools. The result: 30% reduction in servicing costs.

- Goldman Sachs launched GS AI Assistant to all employees after piloting with 10,000. Built on in-house models trained on the bank’s style templates, it cut pitch deck creation time by 50%.

- Commonwealth Bank of Australia deployed real-time scam detection using specialized models. Results: scam losses cut 70%+, customer scam losses cut 50%.

The pattern: these aren’t startups experimenting. They’re major institutions running SLMs at scale for specific, high-volume tasks.

Healthcare

A 50-physician primary care network deployed Llama 3.2 7B (medical variant) on edge servers. The driver wasn’t cost. It was HIPAA compliance. On-premise deployment keeps PHI local.

Domain-specific language models trained on protected data become viable when the alternative is sending that data to third-party APIs.

Manufacturing

A mid-sized automotive parts manufacturer deployed Phi-3 7B fine-tuned on 20,000 inspection reports. Processing runs on NVIDIA Jetson edge devices at camera-equipped inspection stations.

The requirement: real-time defect identification at production line speeds. Cloud round-trips don’t work when you need sub-100ms decisions.

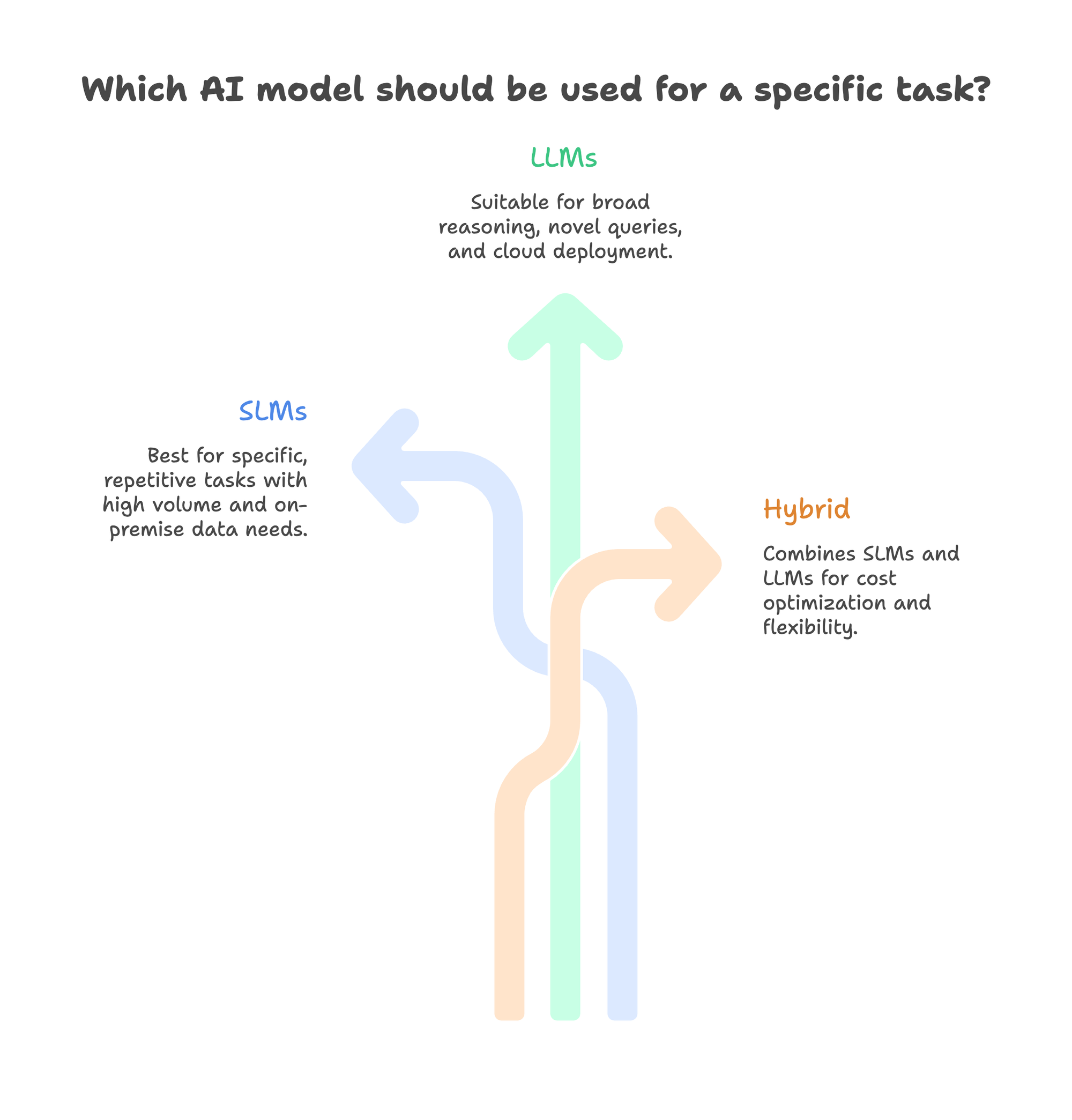

The Hybrid Pattern

Most production systems don’t choose between SLMs and LLMs.

They use both.

An e-commerce retailer handling 200,000 monthly conversations runs a hybrid setup:

- Mistral 7B handles 95% of queries

- GPT-5 handles the remaining 5% (complex, novel requests)

- A classifier routes traffic based on query complexity

Result: 90%+ cost reduction with maintained quality.

Research suggests that 70-80% of enterprise use cases fit SLM or hybrid approaches. Pure LLM deployments are the minority.

The Decision Framework

When to Use SLMs

Choose SLMs when:

- The task is specific and well-defined (classification, extraction, routing)

- You have 500-2,000 quality training examples

- Latency matters (<200ms requirement)

- Volume is high (>100K queries/day)

- Data must stay on-premise (HIPAA, GDPR, financial regulations)

- The task is repetitive with consistent input patterns

When to Use LLMs

Choose LLMs when:

- The task requires broad reasoning or general knowledge

- You have no domain-specific training data

- Queries are novel and unpredictable

- Volume is low and variable

- Cloud deployment is acceptable

- Tasks require multi-step reasoning across diverse domains

When to Use Both

The hybrid approach works when:

- Your traffic includes both simple and complex queries

- You want cost optimization without quality sacrifice

- Some tasks need speed (SLM) while others need depth (LLM)

- You’re transitioning from LLM-only and want to reduce costs incrementally

The Scoring Matrix

Rate each factor 1-5, multiply by weight:

| Factor | Weight | SLM Favored | LLM Favored |

|---|---|---|---|

| Task specificity | 3x | High (4-5) | Low (1-2) |

| Training data available | 3x | Yes (5) | No (1) |

| Latency requirement | 2x | <200ms (5) | >500ms OK (1) |

| Daily query volume | 2x | >100K (5) | <10K (1) |

| Data sensitivity | 3x | On-prem required (5) | Cloud OK (1) |

| Reasoning complexity | 3x | Single-step (5) | Multi-step (1) |

Score >60 for SLM: Build or fine-tune an SLM

Score >60 for LLM: Use managed LLM APIs

Close scores: Implement hybrid routing

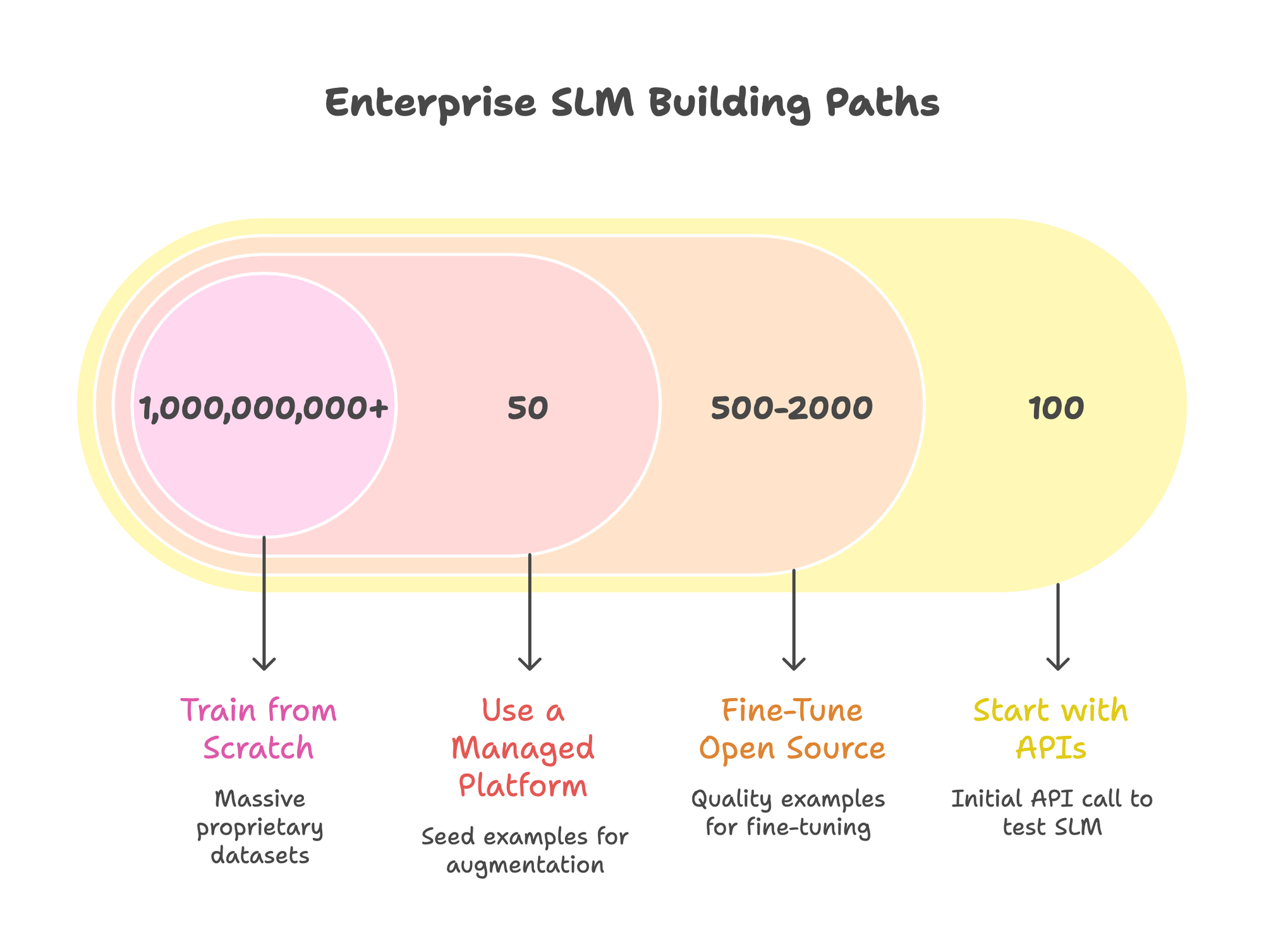

Building Enterprise SLMs: Four Paths

Path 1: Start with APIs

Use managed SLM APIs (Together.ai, Fireworks, Groq) to validate whether an SLM can handle your task before committing to infrastructure.

Test on real production data. Measure accuracy, latency, and edge cases. If performance works, consider moving to self-hosting for cost optimization.

Path 2: Fine-Tune Open Source

For teams with ML capability:

- Select base model: Mistral 7B, Llama 3.2, Phi-3, or Qwen 2.5 depending on your task

- Prepare data: 500-2,000 quality examples often suffice. Quality beats quantity.

- Fine-tune with LoRA: Updates ~1% of parameters. Reduces training from days to hours.

- Evaluate honestly: Build custom benchmarks from your actual use cases, not generic metrics

- Deploy with optimization: vLLM or TensorRT-LLM for production inference

Fine-tuning guides cover the technical implementation.

Path 3: Use a Managed Platform

For teams that want results without managing infrastructure, platforms handle the complexity.

What Prem Studio does differently:

The platform runs an end-to-end workflow from raw documents to deployed model. You upload PDFs, CSVs, or connect data sources. It chunks content, generates training pairs, fine-tunes across 30+ base models (Mistral, Llama, Qwen, Gemma), evaluates using LLM-as-judge scoring, and deploys to your infrastructure.

The autonomous fine-tuning system expands small datasets automatically. Fifty seed examples become thousands through multi-agent augmentation: topic analysis, controlled paraphrasing, scenario generation, and validation agents that check semantic coherence.

Real results:

Grand/Advisense, a subsidiary serving ~700 financial institutions across Europe, built compliance models using the platform. Automated compliance review now runs at scale with 100% data residency compliance. Their CEO stated it “revolutionized our compliance management.”

Prem-1B-SQL achieved 51.54% on BirdBench, outperforming Qwen 2.5 7B (51.1%) despite being 7x smaller. It started with existing datasets plus ~50K synthetically generated samples.

MiniGuard-v0.1, a 0.6B safety classifier, achieves 99.5% of Nemotron-8B’s benchmark accuracy while being 13x smaller. Latency: 2-2.5x faster. Cost: 67% lower ($15.54 vs $46.93 per million requests).

The honest limitations:

- Higher upfront complexity than pure APIs. If you lack ML experience, there’s a learning curve.

- Focus on models under 30B parameters. Not designed for 175B+ scale.

- Requires volume to make economic sense. Below ~8,000 conversations/day, API providers may be cheaper.

- Limited public case studies. Grand/Advisense is the primary named customer; others are anonymized.

For teams with compliance requirements (SOC 2, GDPR, HIPAA), the platform operates under Swiss FADP jurisdiction with cryptographic verification per interaction.

Path 4: Train from Scratch

Only viable for organizations with:

- Massive proprietary datasets (billions of tokens)

- $1M+ training budget

- 3-12 month timeline

- Dedicated ML infrastructure team

For most enterprises, fine-tuning handles 80% of SLM use cases at a fraction of the cost.

When SLMs Will Fail You

Be realistic about limitations. No amount of fine-tuning fixes these problems:

Complex Reasoning Chains

If your task requires multi-step logical reasoning across novel scenarios, SLMs will fail. Apple’s research shows complete accuracy collapse at high complexity. This is architectural, not a training data issue.

Example: Legal reasoning that requires synthesizing precedent from multiple cases, applying doctrine, and generating novel arguments. An SLM trained on legal documents can extract entities and classify contract types. It cannot construct original legal arguments.

Tasks Without Training Data

SLMs are specialists. Without domain-specific fine-tuning data, they underperform general-purpose LLMs badly.

Example: A startup building a product in a new category with no existing corpus. You can’t fine-tune on data you don’t have. Use LLMs until you’ve accumulated enough production data to train on.

Long-Document Processing

SLMs typically support 4K-8K token context windows. Some LLMs handle 100K+.

Example: Processing full legal contracts, research papers, or codebases that exceed context limits. Chunking helps but introduces cross-reference loss. If your documents routinely exceed 10K tokens and require holistic understanding, LLMs have structural advantages.

Novel Query Distribution

SLMs trained on customer service conversations will fail when users ask unexpected questions.

Example: A support bot fine-tuned on past tickets. A customer asks a question about a newly released feature not in the training data. The SLM hallucinates or gives outdated information. You need fallback routing to an LLM or human.

The Failure Pattern

SLM failures follow a consistent pattern:

- Open-ended scenarios requiring judgment calls

- Novel queries outside training distribution

- Multi-hop reasoning requiring synthesis across contexts

- Factual questions about topics not in training data

Plan for these failures. Build routing logic to escalate to LLMs. Implement confidence thresholds. Monitor production outputs continuously.

The Migration Playbook

Week 1-2: Analysis

Audit your current LLM usage. Categorize queries by:

- Type (classification, extraction, generation, reasoning)

- Complexity (single-step vs multi-step)

- Volume (queries per day)

- Accuracy requirements (acceptable error rate)

Identify SLM candidates: high-volume, well-defined, repetitive tasks. Estimate savings at current volume.

Week 3-6: Pilot

Select one high-volume use case. Collect 500-2,000 training examples from production logs. Fine-tune 2-3 candidate base models. Evaluate against your current LLM on held-out data.

The pilot should answer: Does the SLM match accuracy requirements? What’s the latency improvement? What edge cases fail?

Week 7-12: Production

Deploy with shadow mode: run SLM alongside LLM, compare outputs before switching traffic. Implement routing based on confidence scores. Monitor accuracy, latency, and user feedback continuously.

Scale successful patterns to additional use cases. Document what works and what doesn’t for your domain.

Risk Mitigation

- Keep LLM fallback for edge cases and low-confidence predictions

- Set confidence thresholds for automatic escalation

- Monitor accuracy continuously, not just at launch

- Plan for model refresh as data distribution shifts

Frequently Asked Questions

How much training data do I actually need?

For fine-tuning: 500-2,000 quality examples often suffice. Emphasis on quality. Research shows that 50K curated examples outperform 10M random ones. Start small, evaluate, add more data where the model fails.

Do SLMs hallucinate less than LLMs?

Not inherently. Vectara’s hallucination leaderboard shows small models can achieve comparable or better rates for constrained tasks. But this is task-dependent. A fine-tuned SLM hallucinating within its domain is likely constrained. Outside its domain, it may hallucinate confidently.

Can SLMs run on CPU?

Yes, with quantization. A 7B model at 4-bit quantization runs at ~9 tokens/second on a modern CPU. For production workloads, GPU acceleration is typically required for acceptable latency.

What about multimodal tasks?

SLMs increasingly support vision. Phi-3.5-Vision handles image understanding. For complex multimodal reasoning, LLMs currently lead, but the gap is narrowing. Evaluate on your specific use case.

How do I evaluate which model to use?

Build custom benchmarks from your actual production data. Generic benchmarks (MMLU, HumanEval) don’t predict performance on your specific tasks. Test on held-out examples from your domain. Measure what matters to your business.

The Bottom Line

The SLM vs LLM debate is about matching model capability to task requirements.

Fine-tuned SLMs outperform GPT-4 on 85% of classification tasks. They cost 10-100x less per inference. They run on-premise for compliance. They respond in milliseconds for real-time applications.

But they collapse on complex reasoning. They fail outside their training distribution. They can’t handle novel queries or long documents without chunking.

The right answer for most enterprises is hybrid: SLMs for high-volume, well-defined tasks; LLMs for complex, novel queries; routing logic to match queries to capabilities.

According to Gartner, task-specific models will be used 3x more than general LLMs by 2027. Gartner also predicts that more than 50% of GenAI models that enterprises use will be industry or function-specific by 2027, up from approximately 1% in 2023.

The shift is already happening. The question is whether you’re optimizing for it.

Explore Prem Studio for building specialized SLMs. Or book a technical call to discuss your specific use case.