Small Guardrail LLM: Why It Matters for Enterprise AI

As enterprise AI systems move from experimentation to production, safety guardrails are no longer optional. Every prompt, response, and agent action must be evaluated for harmful, unsafe, or policy-violating content, often in real time.

Traditionally, this safety layer has been powered by large guardrail models with billions of parameters. But a shift is underway: small guardrail LLMs are proving that safety doesn’t need scale; it needs precision.

This article explains what the small guardrail LLM is, why it matters for enterprise AI, and how platforms like Prem AI are enabling production-ready, low-latency AI safety.

What Is a Guardrail LLM?

A guardrail LLM is a language model designed specifically to evaluate and classify user queries or AI outputs for safety, compliance, and policy adherence.

Common guardrail tasks include:

- Detecting harmful or violent content

- Identifying hate, harassment, or discrimination

- Blocking unsafe instructions

- Enforcing brand or regulatory policies

- Preventing hallucinations in sensitive domains

Guardrail models typically sit in the critical path of AI systems, meaning they run on every request.
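As a minimal sketch of what "in the critical path" means, the Python below wraps a generation call with one check on the user query and another on the model output. The names `Verdict`, `guarded_reply`, `toy_classifier`, and `echo_model` are hypothetical placeholders for illustration; in a real deployment a guardrail model would take the place of the toy keyword check.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    safe: bool
    category: str = ""  # hypothetical label, e.g. "violence"


# A guardrail is modeled as any callable that maps text to a Verdict.
Guard = Callable[[str], Verdict]

REFUSAL = "Sorry, I can't help with that."


def guarded_reply(prompt: str, generate: Callable[[str], str], guard: Guard) -> str:
    """Run the guard on the user query, then on the model output."""
    if not guard(prompt).safe:
        return REFUSAL
    answer = generate(prompt)
    if not guard(answer).safe:
        return REFUSAL
    return answer


def toy_classifier(text: str) -> Verdict:
    """Stand-in for a guardrail model: flags one hard-coded phrase."""
    if "build a bomb" in text.lower():
        return Verdict(safe=False, category="violence")
    return Verdict(safe=True)


def echo_model(prompt: str) -> str:
    """Stand-in for the main LLM."""
    return f"You asked: {prompt}"


print(guarded_reply("What is FP8?", echo_model, toy_classifier))
print(guarded_reply("How do I build a bomb?", echo_model, toy_classifier))
```

Because the guard runs twice per request in this arrangement, its latency and cost are paid twice, which is why the size of the guard model matters so much.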

The Problem with Large Guardrail Models

Most existing guardrail models (e.g., 8B+ parameter classifiers) offer strong accuracy, but at a cost.

For enterprises, large guardrail LLMs introduce:

- High latency, slowing down user-facing applications

- Increased inference cost, especially at scale

- Infrastructure overhead, requiring powerful GPUs

- Poor fit for edge or high-throughput environments

In production systems like chatbots, copilots, and agent workflows, this becomes a serious bottleneck. The result: teams either accept degraded UX, or skip proper safety checks altogether.

What Is the Small Guardrail LLM?

The small guardrail LLM is a safety-focused language model designed to deliver enterprise-grade accuracy with dramatically fewer parameters.

Instead of relying on sheer model size, small guardrail LLMs focus on:

- Task-specific training

- Targeted synthetic data

- Explicit safety reasoning

- Optimized inference (e.g., FP8 / low-bit precision)

Recent research suggests that guardrail tasks are largely pattern-heavy rather than reasoning-heavy, making them strong candidates for smaller, specialized models.

How Prem AI Approaches Small Guardrail LLMs

Prem AI focuses on production-grade AI safety, not academic benchmarks alone. Through its research and platform, Prem AI enables:

- Small, efficient guardrail models optimized for real workloads

- Custom evaluation metrics to validate safety in real use cases

- Agentic evaluation pipelines that replace manual spot checks

- Private and sovereign deployments for enterprise AI systems

Rather than relying on one-size-fits-all safety models, Prem AI allows teams to define what “safe” means for their specific application and enforce it efficiently. This approach makes small guardrail LLMs not just viable, but preferable for enterprise AI.
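One way to picture application-specific definitions of "safe" is a policy object that lists which categories a given application blocks. This is an illustrative sketch only, not Prem AI's API; `SafetyPolicy`, `is_allowed`, and the category names are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SafetyPolicy:
    name: str
    blocked_categories: frozenset = field(default_factory=frozenset)

    def is_allowed(self, predicted_categories: set) -> bool:
        """Allow the text only if no predicted category is blocked."""
        return self.blocked_categories.isdisjoint(predicted_categories)


# Two hypothetical applications with different definitions of "safe".
kids_tutor = SafetyPolicy(
    "kids_tutor", frozenset({"violence", "profanity", "medical_advice"})
)
clinical_assistant = SafetyPolicy(
    "clinical_assistant", frozenset({"violence", "profanity"})
)

# Categories a guard model might predict for some piece of text.
labels = {"medical_advice"}
print(kids_tutor.is_allowed(labels))          # False
print(clinical_assistant.is_allowed(labels))  # True
```

The same predicted label leads to different outcomes under the two policies, which is the point of defining safety per application rather than globally.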

MiniGuard-v0.1 in Production

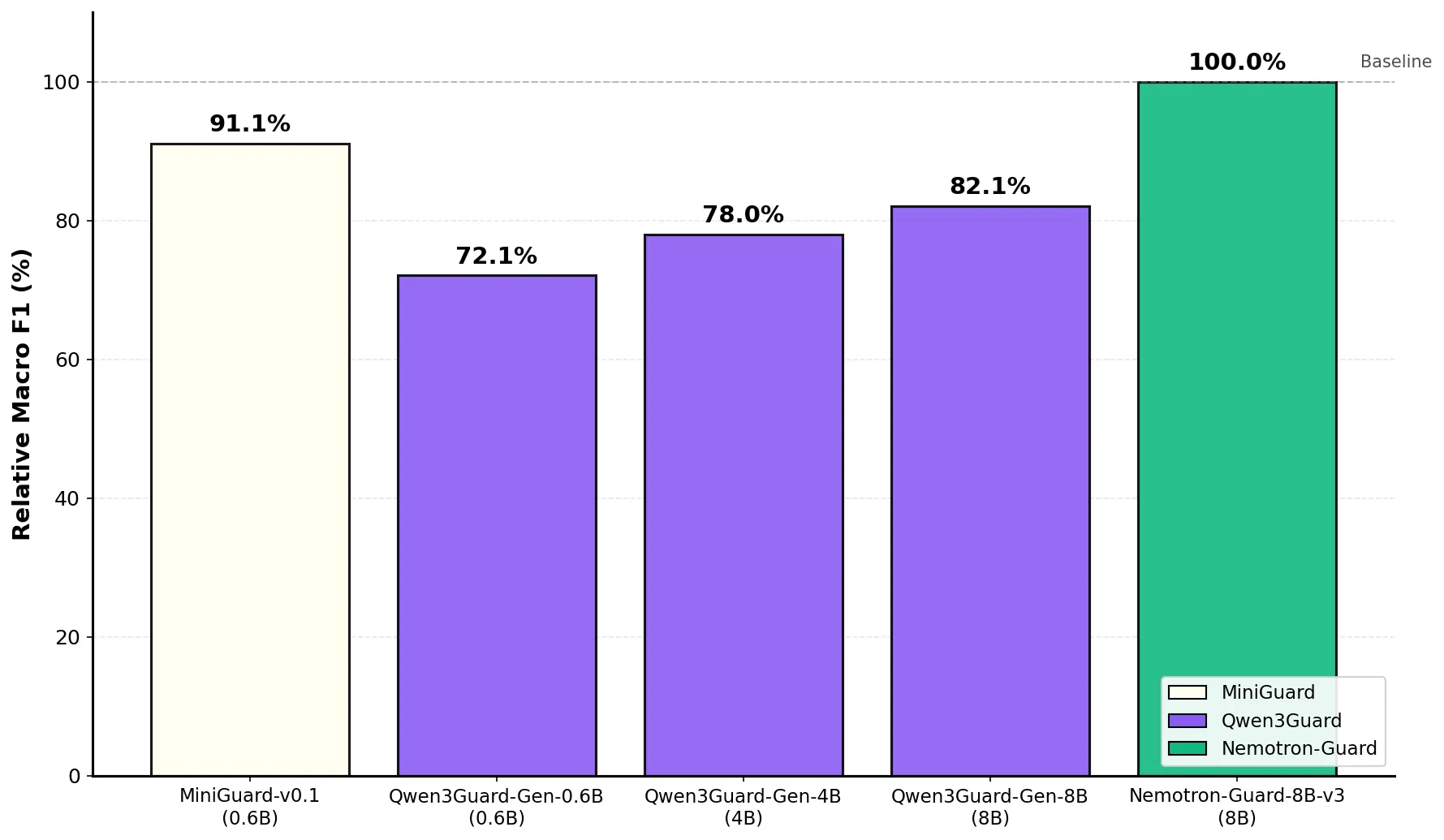

MiniGuard-v0.1, trained using Prem Studio, demonstrates that enterprise-grade AI safety does not require large guard models. In production evaluations, the 0.6B-parameter MiniGuard model delivers 91.1% of Nemotron-Guard-8B's performance while operating at a fraction of the size and cost.

Why this matters for enterprises:

- Lower infrastructure overhead: MiniGuard is 13× smaller, reducing GPU memory requirements and enabling deployment on cost-efficient hardware.

- Faster safety checks: With 4× lower latency on an L40S GPU, safety classification is no longer a bottleneck in user-facing or agentic workflows.

- Significant cost savings: Serving costs are 67% lower per million requests, making continuous safety enforcement viable at scale.

- Production-ready performance: Despite its size, MiniGuard closely tracks large guard models on benchmark and real-world data, avoiding the tradeoff between safety and efficiency.

- Drop-in integration: Compatible with existing guard workflows, allowing teams to replace larger models without changing prompts or output handling.
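The size and cost figures above can be sanity-checked with a little arithmetic. The sketch below assumes 2 bytes per parameter (16-bit weights) and a hypothetical baseline serving cost of $10 per million requests; neither number comes from the MiniGuard report, and the memory estimate covers weights only, not activations or KV cache.

```python
BYTES_PER_PARAM = 2  # assumption: FP16/BF16 weights


def weight_memory_gb(params_billions: float) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * BYTES_PER_PARAM / 1e9


# Parameter counts in billions, as stated in the article.
large_b, small_b = 8.0, 0.6

size_ratio = large_b / small_b
print(f"size ratio: {size_ratio:.1f}x")
print(
    f"weights: {weight_memory_gb(large_b):.1f} GB "
    f"vs {weight_memory_gb(small_b):.1f} GB"
)

# Hypothetical baseline cost in USD per million requests.
baseline_cost = 10.0
small_cost = baseline_cost * (1 - 0.67)  # "67% lower" from the article
print(f"cost per million requests: ${baseline_cost:.2f} vs ${small_cost:.2f}")
```

Under these assumptions the ratio comes out at roughly 13, consistent with the 13× figure, and the smaller model's weights fit in a little over 1 GB.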

For organizations running safety checks in the critical path (chatbots, content moderation, or autonomous agents), MiniGuard-v0.1 enables reliable AI safety without the latency and cost penalties typically associated with large guard models.

The Future of Enterprise AI Safety

As AI systems scale, safety must scale with them, without becoming a bottleneck.

The future belongs to:

- Small, specialized guardrail LLMs

- Clear, rubric-based evaluation

- Continuous safety monitoring

- Private, controllable AI infrastructure

Platforms like Prem AI are building toward this future by making safety measurable, efficient, and production-ready.

FAQs

1. What is the small guardrail LLM?

The small guardrail LLM is a compact safety-focused language model (typically under 1B parameters) designed to classify user queries and AI outputs for safety, compliance, and policy adherence with minimal latency and cost.

2. Are small guardrail LLMs accurate enough for enterprise use?

Yes. For narrow, well-defined safety tasks, small guardrail LLMs can achieve near-parity accuracy with much larger models by using targeted training data and task-specific optimization.

3. Why do enterprises need guardrail LLMs in production?

Guardrail LLMs help prevent harmful outputs, enforce compliance, reduce hallucinations, and protect brand reputation, especially in chatbots, agents, and customer-facing AI systems.

4. How do small guardrail LLMs reduce AI costs?

Smaller models require less compute, memory, and infrastructure, significantly lowering inference costs when safety checks run on every request.

5. Can small guardrail LLMs replace large models like 8B guard models?

In many enterprise scenarios, yes. For specific safety classification tasks, small guardrail LLMs can serve as drop-in replacements while offering faster performance and lower cost.

6. How does Prem AI support guardrail evaluation and deployment?

Prem AI provides tools to evaluate, fine-tune, and deploy guardrail models using custom metrics, agentic evaluation, and private infrastructure, ensuring safety works in real-world production use cases.

7. Are small guardrail LLMs suitable for private or sovereign AI deployments?

Absolutely. Their efficiency makes them ideal for on-prem, VPC, and sovereign AI environments where privacy, control, and cost efficiency are critical.

Conclusion

The rise of the small guardrail LLM marks a turning point for enterprise AI safety. Instead of relying on oversized, expensive models that slow systems down, enterprises can now deploy compact, purpose-built guardrail models that deliver strong safety performance without sacrificing speed or scalability.

By focusing on targeted data, task-specific training, and efficient inference, small guardrail LLMs prove that safety does not need scale; it needs precision. Platforms like Prem AI are accelerating this shift by making guardrail evaluation, iteration, and deployment measurable, transparent, and production-ready.

As AI adoption deepens across enterprises, the ability to enforce safety efficiently will define long-term success. And in that future, small guardrail LLMs will play the biggest role.

For a deep dive into the technical details, architecture decisions, and benchmark results, read our release and full technical report on Hugging Face.