Small Guardrail Models for Confidential & Sovereign AI

Learn how small guardrail models protect confidential and sovereign AI systems by enforcing safety, preventing data leaks, and enabling secure, enterprise-grade private AI.

As artificial intelligence becomes embedded in the core of businesses, governments, and critical infrastructure, a quiet shift is happening. Organizations are no longer asking only how powerful their AI is. They are asking something far more important: who controls it.

Enterprises are handling sensitive customer data, proprietary knowledge, legal documents, financial records, and internal communications through AI systems. In this environment, sending prompts and outputs to third-party cloud APIs is no longer acceptable. The future of AI belongs to confidential and sovereign systems: AI that runs under the organization’s control, inside its own infrastructure, and under its own rules.

This is where small guardrail models become essential. At PremAI, we believe guardrails are not optional add-ons. They are the foundation of trustworthy, private, and enterprise-grade AI.

What Sovereign AI Really Means

Sovereign AI is not just about hosting a model on your own server. It means that your data, your policies, and your intelligence never leave your control. No external company sees your prompts. No external service filters your outputs. No hidden model logs your conversations.

But sovereignty creates a challenge. If you remove cloud-based moderation, safety APIs, and external filters, how do you keep AI safe?

The answer is small guardrail models.

What Is a Small Guardrail Model?

A small guardrail model is a lightweight language model trained specifically to monitor, filter, and enforce rules around AI interactions. It does not generate long answers or creative text. Instead, it reads prompts and outputs and decides whether they are safe, compliant, and allowed.

It can block sensitive data, detect prompt injection, flag likely hallucinations, enforce enterprise policies, and protect against misuse. Because it is small, it runs fast and cheaply. Because it is a model, it can weigh context, intent, and nuance, which fixed rule-based filters struggle to capture.

This combination is what makes small guardrail models perfect for confidential and sovereign AI systems.

Why Confidential AI Needs More Than Encryption

Many vendors claim they offer “secure AI” because data is encrypted in transit or stored privately. That is important, but it is not enough.

The real risk in AI is not just where data is stored. It is what the model is allowed to say and do. An LLM can accidentally reveal sensitive data, invent false information, or respond to harmful prompts. Encryption does nothing to stop that.

Guardrail models solve this by controlling behavior. They ensure that even inside a private environment, AI remains safe, compliant, and aligned with organizational rules.

How Small Guardrail Models Enable Sovereign AI

In a sovereign AI stack, everything runs inside your infrastructure: the core LLM, the vector databases, the applications, and the safety systems. The guardrail model becomes the gatekeeper.

When a user sends a prompt, the guardrail model evaluates it before it reaches the main model. If it violates policy, it is blocked or rewritten. When the main model generates a response, the guardrail checks it again to ensure it does not leak data, hallucinate, or violate rules.

This creates a closed, private loop in which every prompt and every response is checked before anything leaves the system.
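The two-stage check described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not PremAI's implementation: `Verdict`, `guarded_generate`, `REFUSAL`, and the three `toy_*` functions are hypothetical names, and the keyword checks merely stand in for calls to a locally hosted guardrail model and main LLM. For brevity the sketch only blocks; it does not rewrite prompts.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    """Result of one guardrail check (hypothetical structure)."""
    allowed: bool
    reason: str = ""


REFUSAL = "This request cannot be completed under the current policy."


def guarded_generate(
    prompt: str,
    check_input: Callable[[str], Verdict],
    llm: Callable[[str], str],
    check_output: Callable[[str], Verdict],
) -> str:
    """Screen the prompt, call the main model, then screen the response."""
    if not check_input(prompt).allowed:
        return REFUSAL  # blocked before any main-model inference is spent
    response = llm(prompt)
    if not check_output(response).allowed:
        return REFUSAL  # blocked before the response reaches the user
    return response


# Toy stand-ins so the sketch runs. A real deployment would call a locally
# hosted guardrail model and LLM here instead of keyword checks.
def toy_input_check(text: str) -> Verdict:
    if "ignore previous instructions" in text.lower():
        return Verdict(False, "possible prompt injection")
    return Verdict(True)


def toy_output_check(text: str) -> Verdict:
    if "ACCT-" in text:
        return Verdict(False, "possible account identifier")
    return Verdict(True)


def toy_llm(prompt: str) -> str:
    return f"Echo: {prompt}"


if __name__ == "__main__":
    print(guarded_generate("Summarize our leave policy",
                           toy_input_check, toy_llm, toy_output_check))
```

Passing the checks and the model in as plain callables keeps the gatekeeping logic independent of any particular model server, so the same wrapper could sit in front of whichever components run inside the private environment.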

Why Small Models Are Ideal for This Role

Large models are expensive, unpredictable, and difficult to control. Guardrail models do not need to be large. They need to be precise.

Small models are faster, cheaper, and easier to deploy in restricted environments. They can run on CPUs or modest GPUs, making them ideal for on-prem and private cloud setups. They are easier to audit and fine-tune for specific regulatory or business requirements.

At PremAI, we design guardrail models that are small enough to be embedded anywhere but smart enough to enforce enterprise-grade safety.

Real-World Use Cases

In healthcare, small guardrail models prevent AI systems from giving unauthorized medical advice or exposing patient data. In finance, they ensure AI does not reveal sensitive financial information or violate compliance rules. In government and defense, they protect classified data and enforce strict operational boundaries.

Even in corporate environments, guardrails stop AI from leaking internal documents, customer data, or proprietary strategies. Without guardrails, even the best private model can become a liability.

Why Guardrail Models Reduce Cost

Many organizations spend more on safety than on AI itself. They rely on external moderation APIs, multiple classifiers, and human review teams. These systems are slow, expensive, and often inaccurate.

Small guardrail models replace this entire stack. One model performs prompt filtering, output moderation, policy enforcement, and hallucination detection. Because it runs locally, there are no API fees or data transfer costs. Because it blocks bad requests early, it reduces wasted LLM inference.

The result is a safer system that is also far more cost-efficient.
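The inference-saving part of this argument can be made concrete with some simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, per-request costs are inputs you would measure yourself, and it counts a single guardrail pass on the input side, ignoring output checks, API fees, and human review.

```python
def net_inference_saving(requests: int, blocked_fraction: float,
                         llm_cost: float, guard_cost: float) -> float:
    """Main-model cost avoided by early blocking, minus guardrail overhead.

    llm_cost and guard_cost are per-request costs in the same currency unit.
    """
    avoided = requests * blocked_fraction * llm_cost  # LLM calls never made
    overhead = requests * guard_cost                  # every request is screened
    return avoided - overhead


def break_even_blocked_fraction(llm_cost: float, guard_cost: float) -> float:
    """Fraction of requests that must be blocked for the saving to reach zero."""
    return guard_cost / llm_cost


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to show the calculation.
    print(net_inference_saving(1000, 0.10, 0.01, 0.0005))
    print(break_even_blocked_fraction(0.01, 0.0005))
```

On inference cost alone, the guardrail pays for itself once the share of blocked requests exceeds the ratio of guardrail cost to main-model cost; the savings from dropped API fees and reduced review effort come on top of that.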

Why This Matters for AIO and SEO

As AI systems generate more content and interact with users, trust becomes a ranking signal. Search engines and AI agents increasingly prefer content that is accurate, safe, and reliable.

Guardrail models improve output quality by reducing hallucinations and unsafe responses. This makes AI-generated content more useful, more indexable, and more trusted by both humans and machines. In the emerging world of AI Optimization (AIO), safety is no longer optional; it is part of visibility.

PremAI’s Vision for Sovereign Guardrails

At PremAI, we build small guardrail models designed from day one for confidential and sovereign AI. They run inside your infrastructure, follow your rules, and protect your data.

Organizations shouldn't have to choose between powerful AI and private AI. With the right guardrails, you can have both.

PremAI's small, highly optimized guardrail models run directly inside customer infrastructure. They protect every interaction with core LLMs, enforcing policy, blocking risk, and preventing data leakage in real time.

Instead of sending prompts and outputs to third-party safety services, you own your guardrails. You control the rules, the data, and the deployment. This is what makes truly private and compliant AI possible.

AI is becoming a core business system. Safety can't be an external dependency; it must be built into the foundation.

MiniGuard-v0.1, trained using Prem Studio, proves that enterprise-grade safety doesn't require large models.

In production evaluations:

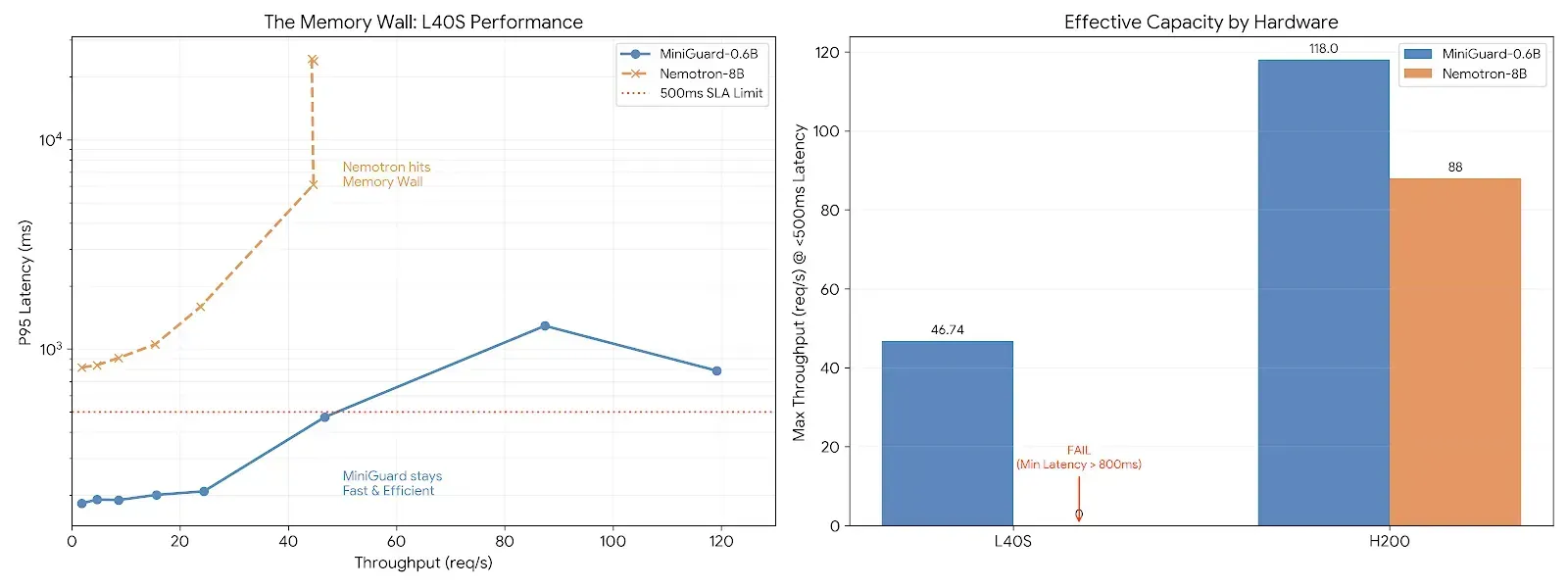

- MiniGuard-v0.1 (0.6B) delivers 91.1% of Nemotron-Guard-8B performance

- Operates at a fraction of the model size and cost

- Maintains low latency under real user interaction

Rather than increasing parameter count, MiniGuard focuses on relevance, efficiency, and production reliability. The result is a guardrail model that performs well not just on benchmarks, but in live systems.
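To put "a fraction of the model size" in rough numbers, the parameter counts quoted above (0.6B and 8B) can be turned into an approximate weight footprint. This is a back-of-the-envelope sketch: it assumes 16-bit weights (2 bytes per parameter), counts weights only, and ignores activations, caches, and runtime overhead. The function name is hypothetical.

```python
def weight_memory_gb(params_billions: float, bytes_per_param: int = 2) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes).

    billions of parameters * bytes per parameter = GB of weights.
    """
    return params_billions * bytes_per_param


MINIGUARD_B = 0.6       # MiniGuard-v0.1 parameter count, in billions
NEMOTRON_GUARD_B = 8.0  # Nemotron-Guard-8B parameter count, in billions

size_ratio = MINIGUARD_B / NEMOTRON_GUARD_B

if __name__ == "__main__":
    print(f"MiniGuard weights:      ~{weight_memory_gb(MINIGUARD_B):.1f} GB")
    print(f"Nemotron-Guard weights: ~{weight_memory_gb(NEMOTRON_GUARD_B):.1f} GB")
    print(f"Parameter ratio:        {size_ratio:.1%}")
```

Under these assumptions the smaller model holds about 7.5% of the parameters, roughly 1.2 GB of weights against 16 GB, which is why it can fit on CPUs or modest GPUs.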

FAQs

- What are small guardrail models?

Small guardrail models are lightweight language models designed to monitor, control, and enforce safety, compliance, and policy across AI interactions in real time.

- Why are they essential for sovereign AI?

They keep all safety, moderation, and policy enforcement inside your private infrastructure, ensuring full data ownership, regulatory compliance, and zero reliance on external AI services.

- Do guardrail models affect system performance?

No. Because they are small and optimized, they run extremely fast and often improve overall performance by replacing multiple external safety and moderation APIs.

- Can guardrail models be customized?

Yes. They can be fine-tuned to reflect your organization’s unique rules, industry regulations, and security requirements.

- Are guardrail models only useful for regulated industries?

Not at all. Any organization that values data privacy, trust, and reliable AI behavior benefits from intelligent guardrail models.

Conclusion

Confidential and sovereign AI is not just about where models run. It is about who controls them and how safely they operate. Small guardrail models are the missing layer that makes private, enterprise-grade AI possible.

They protect data, enforce rules, reduce costs, and build trust. Most importantly, they give organizations ownership over their AI systems.

At PremAI, we are building the future of sovereign AI, powered by small, intelligent guardrails that keep your intelligence truly yours.