What Is Confidential AI? The Security Gap Your Encryption Doesn’t Cover

Confidential AI protects data during processing, not just at rest or in transit. Learn how TEEs, attestation, and encrypted inference secure enterprise AI workloads.

Your data is encrypted at rest. Encrypted in transit. But the moment an AI model processes it, everything sits exposed in memory.

IBM’s 2025 Cost of a Data Breach Report found that 13% of organizations experienced breaches of AI models or applications. Of those compromised, 97% lacked proper AI access controls. Healthcare breaches averaged $7.42 million per incident, taking 279 days to identify and contain.

Over 70% of enterprise AI workloads will involve sensitive data by 2026. Yet most organizations protect that data everywhere except where it matters most: during actual computation.

Confidential AI fixes this.

What Is Confidential AI?

Confidential AI uses hardware-based isolation to protect data and models while they’re being processed. Not before. Not after. During.

The core technology is called a Trusted Execution Environment, or TEE. Think of it as a vault built directly into the CPU or GPU. Data enters encrypted, gets processed inside the vault, and leaves encrypted. The operating system, hypervisor, cloud provider, and even system administrators never see plaintext.

This matters because traditional encryption has a fundamental limitation. To compute on data, you must decrypt it first. That decryption creates a vulnerability window. Memory scraping attacks, malicious insiders, and compromised hypervisors all exploit this window.

Confidential computing eliminates it entirely.

The Confidential Computing Consortium, a Linux Foundation project backed by Intel, AMD, NVIDIA, Microsoft, Google, and ARM, defines it as:

“Hardware-based, attested Trusted Execution Environments that protect data in use through isolated, encrypted computation.”

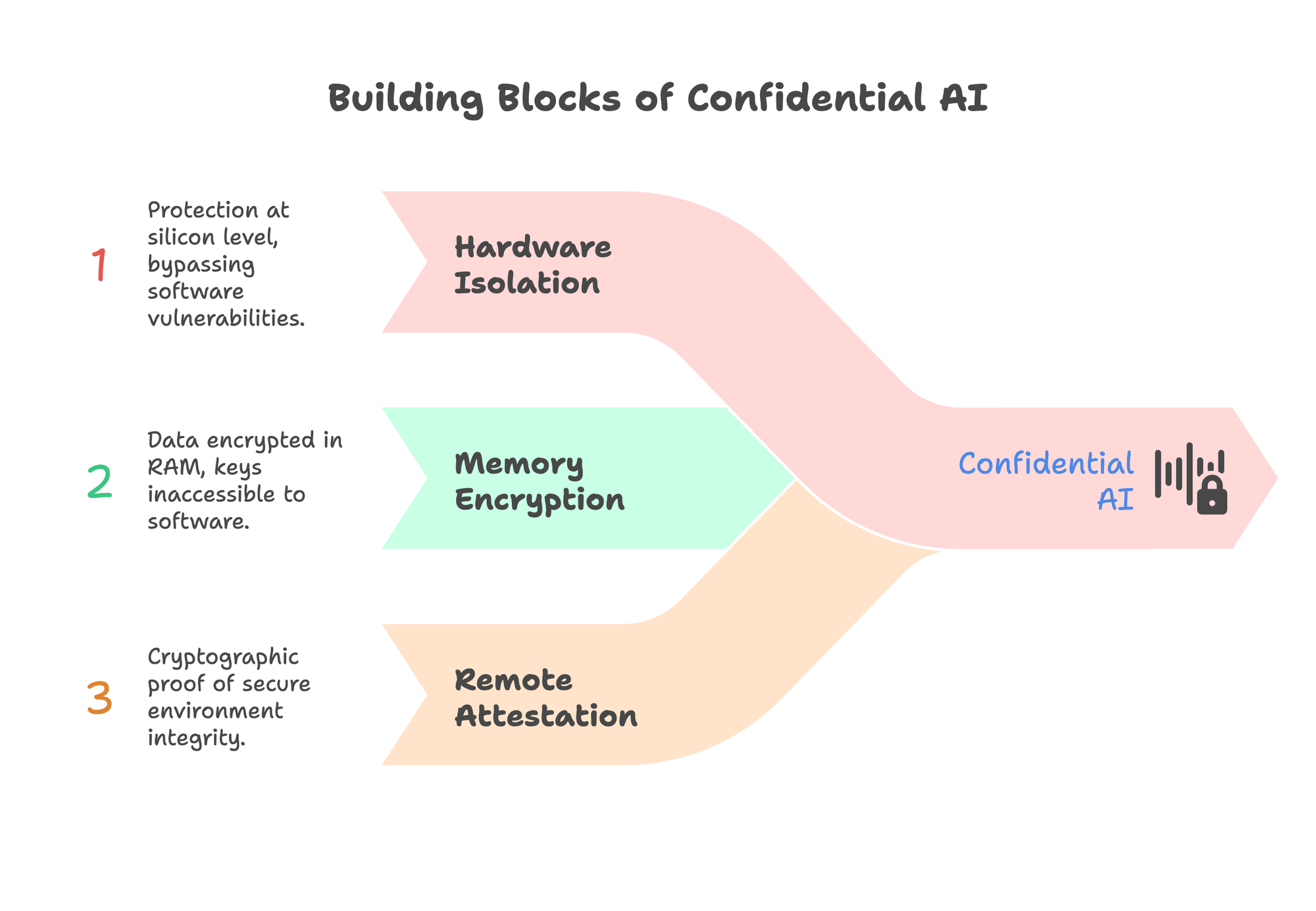

Three properties make confidential AI different from traditional security:

Hardware isolation. Protection is enforced at the silicon level, below the operating system and hypervisor, so compromising that software does not expose the protected data.

Memory encryption. Data stays encrypted even in RAM. Keys exist only inside the processor and are inaccessible to any software layer.

Remote attestation. Cryptographic proof that the secure environment is genuine, unmodified, and running expected code. You don’t have to trust the cloud provider’s word. You verify mathematically.

The Data Protection Gap Nobody Talks About

Security teams focus obsessively on two states: data at rest and data in transit.

Data at rest gets AES-256 encryption. Check.

Data in transit gets TLS 1.3. Check.

But data in use? Most organizations have no protection at all.

| Data State | Traditional Protection | Actual Status |
|---|---|---|
| At Rest | Full-disk encryption, TDE | Protected |
| In Transit | TLS/SSL, VPNs | Protected |
| In Use | None | Exposed in plaintext |

This gap exists because CPUs historically couldn’t compute on encrypted data. Applications needed raw access to memory. That architectural limitation created a vulnerability that persists across virtually every cloud deployment today.

The attack surface is larger than most teams realize:

- Malicious insiders. Cloud administrators, support staff, contractors with privileged access

- Memory scraping. Malware extracting data directly from RAM

- Cold boot attacks. Physical access to servers enabling memory extraction

- Side-channel attacks. Timing analysis, cache patterns, power traces revealing sensitive data

- Hypervisor compromises. A single vulnerability exposing every tenant on shared infrastructure

A 2025 IDC study found that 56% of organizations cite workload security and external threats as their primary driver for confidential computing adoption. Another 51% specifically mentioned PII protection.

The threat is real. The gap is measurable. And for regulated industries, it’s increasingly indefensible.

For teams evaluating private AI platforms, understanding this gap is the first step toward closing it.

How Confidential AI Actually Works

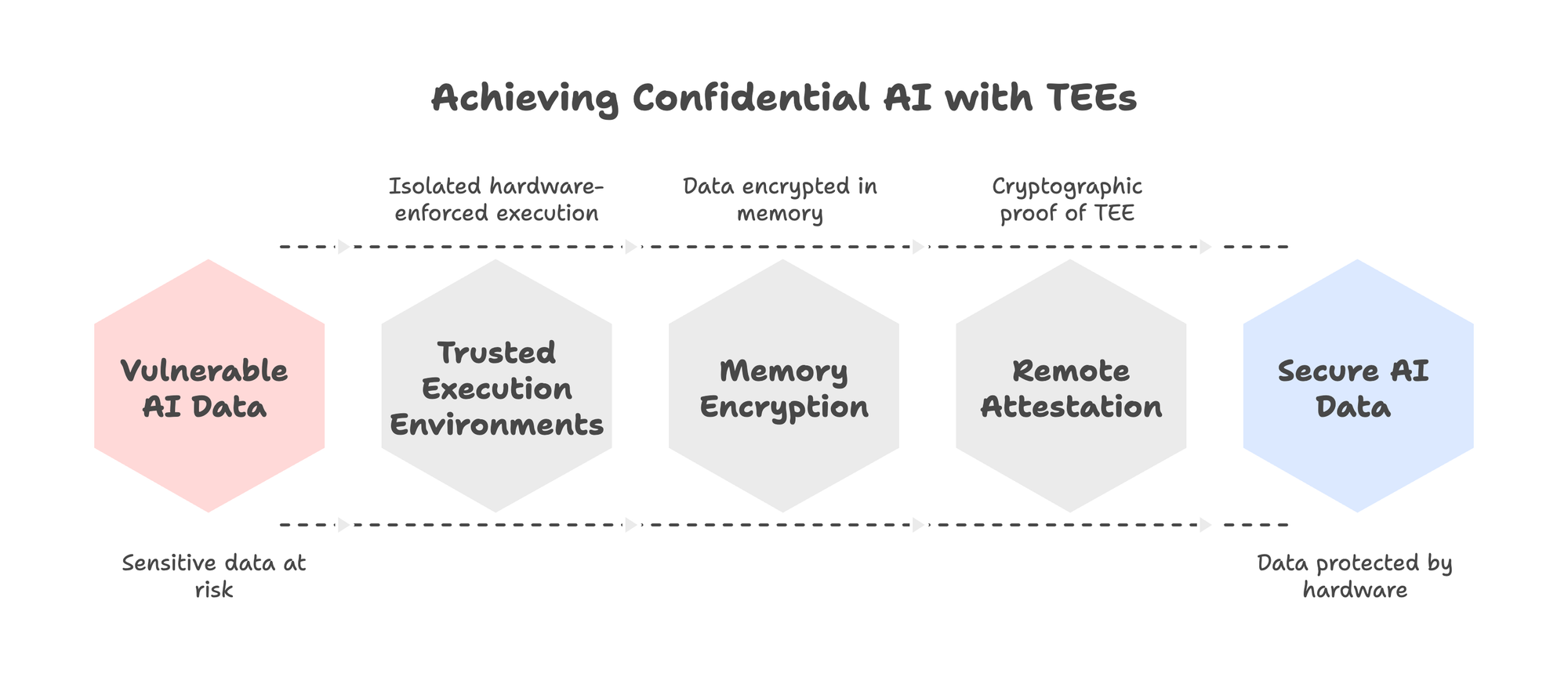

Confidential AI combines three technologies: Trusted Execution Environments, memory encryption engines, and remote attestation. Here’s how they work together.

A. Trusted Execution Environments (TEEs)

A TEE is an isolated region within a processor where sensitive code and data execute. The isolation is hardware-enforced. Even if an attacker controls the operating system or hypervisor, they cannot read or modify what happens inside the TEE.

Different vendors implement TEEs differently:

| Technology | Vendor | Isolation Level | Max Protected Memory |
|---|---|---|---|
| SGX | Intel | Application | Up to 512GB |
| TDX | Intel | Virtual Machine | Full VM memory |
| SEV-SNP | AMD | Virtual Machine | Full VM memory |
| TrustZone | ARM | System-wide | Configurable |
| H100 CC | NVIDIA | GPU workloads | Full GPU memory |

For AI workloads, NVIDIA’s H100 was a breakthrough. Previous TEEs were CPU-only, making them impractical for large language models that require GPU acceleration. The H100 introduced hardware-based confidential computing for GPUs, enabling encrypted inference at near-native speeds.

B. Memory Encryption

Inside a TEE, data remains encrypted in memory using hardware encryption engines. Intel uses AES-XTS with per-enclave keys. AMD uses AES-128 with per-VM keys managed by a dedicated secure processor.

The critical point: encryption keys never exist in software. They’re generated and stored in hardware, accessible only to the encryption engine itself. No API call, no memory dump, no privileged access can extract them.

When data moves between CPU and GPU, it travels through encrypted channels. NVIDIA’s implementation uses AES-GCM-256 for all transfers. A PCIe firewall blocks any attempt to access GPU memory from the CPU side.

C. Remote Attestation

Attestation answers a simple question: How do you know the TEE is real?

Without verification, an attacker could simulate a TEE, claim data is protected, and steal everything. Remote attestation prevents this through cryptographic proof.

The process works like this:

- The TEE generates a signed report containing measurements of its code and configuration

- The signature uses keys embedded in the processor during manufacturing

- A verification service checks the signature against the manufacturer’s certificate chain

- If everything matches, you have mathematical proof the TEE is genuine
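The four steps above can be sketched in a few lines of Python. This is a toy model, not a vendor API: `HARDWARE_KEY`, `generate_report`, and `verify_report` are hypothetical names, the `nonce` freshness check is an added assumption, and an HMAC stands in for the asymmetric signature and manufacturer certificate chain that real TEEs use.

```python
import hashlib
import hmac

# Hypothetical stand-in for a key embedded in the processor at manufacturing.
# Real TEEs sign with an asymmetric key backed by a vendor certificate chain.
HARDWARE_KEY = b"demo-hardware-key"


def measure(code: bytes, config: bytes) -> str:
    """Hash the code and configuration into a single measurement."""
    return hashlib.sha256(code + b"|" + config).hexdigest()


def generate_report(code: bytes, config: bytes, nonce: bytes) -> dict:
    """TEE side: emit a report over the measurement, signed with the hardware key."""
    measurement = measure(code, config)
    payload = measurement.encode() + nonce
    signature = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    return {"measurement": measurement, "nonce": nonce, "signature": signature}


def verify_report(report: dict, expected_measurement: str, nonce: bytes) -> bool:
    """Verifier side: check the signature, the nonce, and the expected measurement."""
    payload = report["measurement"].encode() + report["nonce"]
    expected_sig = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(report["signature"], expected_sig)
        and report["nonce"] == nonce
        and report["measurement"] == expected_measurement
    )


nonce = b"fresh-challenge"
report = generate_report(b"model-server-v1", b"config-a", nonce)
print(verify_report(report, measure(b"model-server-v1", b"config-a"), nonce))
```

A client would run the verification before sending any sensitive data, and refuse to proceed if the measurement does not match the code it expects.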

This chain of trust extends from the silicon manufacturer through the cloud provider to your application. At each step, cryptographic evidence replaces blind trust.

For enterprises, this means audit logs with hardware-signed attestation. Every inference request can be verified. Every model execution can be proven compliant.

Organizations considering self-hosted AI deployments should evaluate TEE support as a core infrastructure requirement.

Confidential AI vs. Other Privacy Approaches

Confidential AI isn’t the only way to protect sensitive data in AI workflows. But it’s the only approach that combines strong security with production-grade performance.

| Approach | What It Protects Against | Performance Impact | Best Use Case |
|---|---|---|---|
| Confidential Computing | Cloud provider, OS, other tenants, insiders | 5-10% overhead | Production inference, model protection |
| Differential Privacy | Statistical inference, membership attacks | Low-moderate | Large datasets, aggregate analytics |
| Homomorphic Encryption | All parties (computation on encrypted data) | 10,000-100,000x slower | Highest sensitivity, not performance-critical |
| Federated Learning | Centralizing raw data | Network overhead | Distributed data, edge devices |

Differential privacy adds mathematical noise to prevent reconstruction of individual records. Useful for training on sensitive datasets where statistical patterns matter more than individual precision. The trade-off: more privacy means less accuracy.
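To make the privacy and accuracy trade-off concrete, here is a minimal sketch of the Laplace mechanism, one common way to add such noise. The function names and the counting query are illustrative assumptions; the query is assumed to have sensitivity 1, so the noise scale is `1 / epsilon`.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with Laplace noise (sensitivity assumed to be 1)."""
    return true_count + laplace_noise(1.0 / epsilon, rng)


def mean_abs_error(epsilon: float, trials: int = 2000, seed: int = 0) -> float:
    """Average distance between the released count and the true count."""
    rng = random.Random(seed)
    errors = (abs(private_count(100, epsilon, rng) - 100) for _ in range(trials))
    return sum(errors) / trials


# Smaller epsilon means stronger privacy and a noisier answer.
print(mean_abs_error(0.1), mean_abs_error(10.0))
```

Lowering `epsilon` strengthens the privacy guarantee and widens the noise, which is the accuracy cost described above.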

Homomorphic encryption allows computation on encrypted data without decryption. Theoretically ideal. Practically, it’s 4-5 orders of magnitude slower than plaintext computation. Fine for simple operations. Unusable for LLM inference.

Federated learning keeps data distributed across multiple parties. Models train locally, and only gradients are shared. This protects against data centralization, but it does not protect against compromised participants or gradient inversion attacks.
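As a rough illustration of the aggregation step, the sketch below averages per-party updates weighted by how many samples each party trained on, in the style of federated averaging. The function name and the example numbers are assumptions for illustration, and real systems add secure aggregation, clipping, and transport on top.

```python
def federated_average(
    updates: list[list[float]], sample_counts: list[int]
) -> list[float]:
    """Combine local updates into one global update, weighted by sample count."""
    total = sum(sample_counts)
    dim = len(updates[0])
    return [
        sum(update[i] * n for update, n in zip(updates, sample_counts)) / total
        for i in range(dim)
    ]


# Two hypothetical hospitals share updates, never raw records.
hospital_a = [1.0, 2.0]   # trained on 1 sample
hospital_b = [3.0, 4.0]   # trained on 3 samples
print(federated_average([hospital_a, hospital_b], [1, 3]))
```

The server only ever sees the update vectors, which is exactly why gradient inversion remains a concern: the updates themselves can leak information about the training data.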

Confidential computing provides near-native performance with hardware-enforced isolation. You can run full LLM inference, fine-tuning, and training inside a TEE with single-digit percentage overhead.
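The gap between these figures is easier to see with numbers. The sketch below applies the overhead and slowdown ranges from the table to a hypothetical 50 ms plaintext request; the baseline and function names are assumptions chosen only for illustration.

```python
def with_slowdown(baseline_ms: float, factor: float) -> float:
    """Latency when an approach is `factor` times slower than plaintext."""
    return baseline_ms * factor


def with_overhead(baseline_ms: float, overhead_pct: float) -> float:
    """Latency when an approach adds `overhead_pct` percent on top."""
    return baseline_ms * (1 + overhead_pct / 100)


BASELINE_MS = 50.0  # hypothetical plaintext latency for one request

estimates = {
    "confidential computing (5%)": with_overhead(BASELINE_MS, 5),
    "confidential computing (10%)": with_overhead(BASELINE_MS, 10),
    "homomorphic encryption (10,000x)": with_slowdown(BASELINE_MS, 10_000),
    "homomorphic encryption (100,000x)": with_slowdown(BASELINE_MS, 100_000),
}

for name, ms in estimates.items():
    print(f"{name}: {ms / 1000:.3f} s")
```

Under these assumptions, a 50 ms request takes about 52.5 to 55 ms inside a TEE, versus roughly 500 to 5,000 seconds under homomorphic encryption.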

For most enterprise AI workloads, confidential computing offers the best balance of security and practicality.

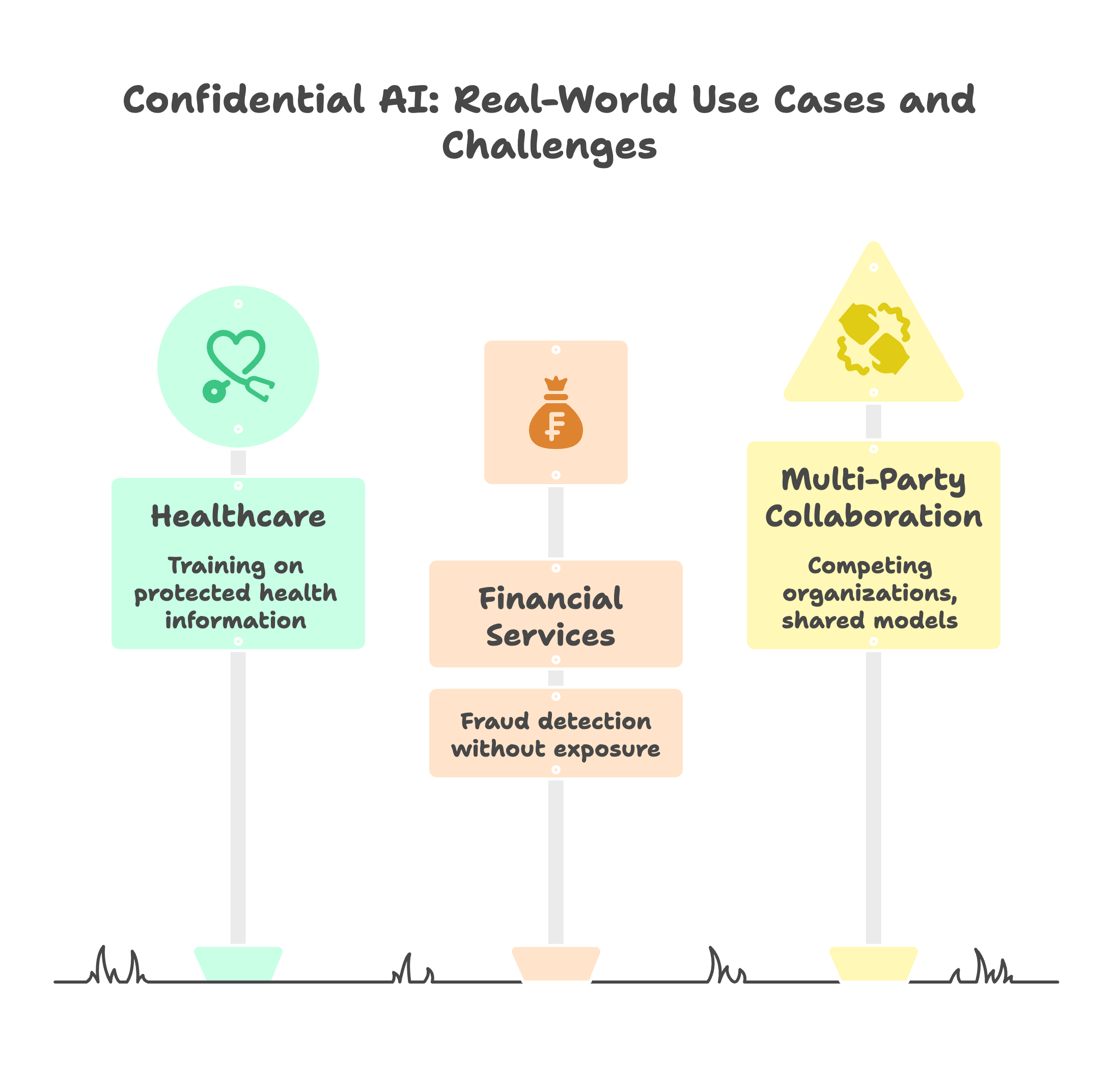

Real-World Use Cases

Confidential AI isn’t theoretical. Organizations across healthcare, finance, and multi-party collaboration are running production workloads today.

1. Healthcare: Training on Protected Health Information

A diagnostic AI company needed to train models on medical imaging data from multiple hospitals. Each hospital’s data was subject to HIPAA. Traditional approaches required complex data sharing agreements and still exposed PHI during processing.

Their solution: confidential computing environments where patient data enters encrypted, model training happens inside a TEE, and no plaintext ever exists outside the secure enclave. The hospitals maintained custody of their data while contributing to a shared model.

Federated learning platforms like Owkin have used similar approaches to develop tumor detection models 50% faster than centralized methods, with zero documented patient data breaches.

For healthcare organizations exploring AI, domain-specific language models trained on protected data become viable when confidential AI eliminates exposure risk.

2. Financial Services: Fraud Detection Without Exposure

Transaction data is among the most sensitive in any organization. Card numbers, account details, behavioral patterns. Exposing this data during AI processing creates massive liability.

Financial institutions are deploying fraud detection models inside TEEs. Transaction streams flow into secure enclaves, pattern analysis happens in encrypted memory, and only fraud scores emerge. The underlying data never leaves the protected environment.

One implementation reduced monthly AI infrastructure costs from $48,000 to $32,000 while achieving regulatory compliance for data-in-use protection.

Banks evaluating cloud vs self-hosted AI increasingly find confidential computing enables cloud deployment without sacrificing data control.

3. Multi-Party Collaboration: Competing Organizations, Shared Models

Sometimes the most valuable AI requires data that no single organization possesses. Anti-money laundering models work better when they see transaction patterns across multiple banks. Drug discovery accelerates when pharmaceutical companies pool research data.

But competitors sharing raw data? That doesn’t happen.

Confidential AI enables a middle path. Multiple parties contribute encrypted data to a shared TEE. Models train on the combined dataset. Each party receives model outputs, but no party ever sees another’s raw data.
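The data flow can be mimicked with a small simulation. `SimulatedEnclave` is a hypothetical class: plain Python gives no real isolation and this sketch skips encryption entirely, so it only illustrates the interface shape, where parties can contribute data and read aggregate outputs but have no method for reading each other's records.

```python
class SimulatedEnclave:
    """Toy stand-in for a shared TEE. Python itself offers no real isolation."""

    def __init__(self) -> None:
        # Name-mangled attribute: a convention here, not a security boundary.
        self.__records: dict[str, list[float]] = {}

    def contribute(self, party: str, values: list[float]) -> None:
        """Accept one party's data into the pooled dataset."""
        self.__records.setdefault(party, []).extend(values)

    def train(self) -> dict:
        """Return only aggregate outputs, never per-party data."""
        pooled = [v for values in self.__records.values() for v in values]
        return {
            "parties": len(self.__records),
            "samples": len(pooled),
            "mean": sum(pooled) / len(pooled),  # stand-in for a trained model
        }


enclave = SimulatedEnclave()
enclave.contribute("bank_a", [100.0, 200.0])
enclave.contribute("bank_b", [300.0])
print(enclave.train())
```

In a real deployment, the hardware and attestation enforce what this class only suggests: each party verifies the enclave's code before contributing, and that code exposes nothing but the agreed outputs.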

The technology exists. The regulatory frameworks are catching up.

Compliance Implications

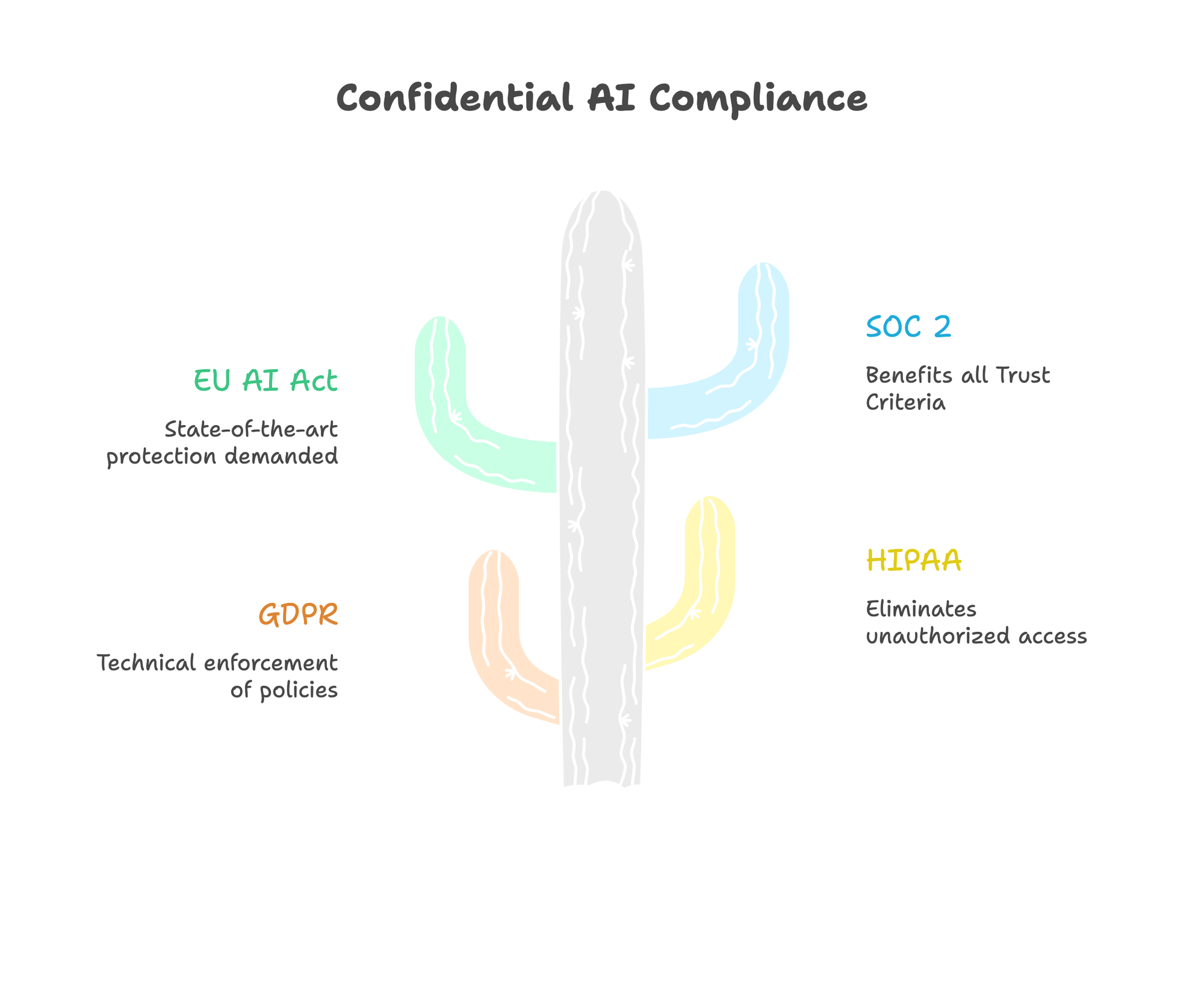

Confidential AI doesn’t just improve security posture. It fundamentally changes what’s possible under regulatory constraints.

GDPR

Article 32 requires “appropriate technical measures” for data protection. Confidential computing provides technical enforcement of data handling policies, not just procedural controls.

More significantly, confidential AI may enable compliant cross-border data transfers. If data remains encrypted during processing and the cloud provider cannot access plaintext, traditional jurisdiction concerns become less relevant. The Future of Privacy Forum has published research exploring these implications.

With EUR 6.7 billion in GDPR fines issued through 2025, technical compliance measures are no longer optional.

Organizations building GDPR-compliant AI chat systems should evaluate confidential computing as a foundational architecture decision.

HIPAA

The Security Rule requires technical safeguards for protected health information. Confidential computing exceeds these requirements by eliminating the possibility of unauthorized access during computation.

For healthcare AI deployments, this means training models on PHI without exposing it to cloud infrastructure. The data exists only in encrypted memory. Even a complete infrastructure breach reveals nothing.

EU AI Act

The EU AI Act entered into force in August 2024, and most of its obligations begin to apply in August 2026. Article 78 requires confidentiality of information and adequate cybersecurity measures. High-risk AI systems face additional requirements for data protection impact assessments with technical enforcement.

Confidential computing provides exactly the kind of “state-of-the-art” protection the regulation demands. Organizations deploying high-risk AI systems should evaluate confidential computing as part of their compliance strategy now, not after enforcement begins.

SOC 2

All five Trust Service Criteria benefit from confidential computing:

- Security: Hardware-enforced isolation prevents unauthorized access

- Availability: Protection against privileged user attacks

- Processing Integrity: Cryptographic attestation verifies correct execution

- Confidentiality: Data encrypted even during computation

- Privacy: Technical enforcement of data handling policies

For enterprises pursuing SOC 2 certification, confidential AI implementations demonstrate security controls that exceed typical cloud deployments. Understanding what SOC 2 certification misses about AI security helps teams build comprehensive protection.

Performance: The Honest Picture

Early confidential computing carried significant performance penalties. That’s changed dramatically, but trade-offs remain.

Current Performance Numbers

Single GPU inference (NVIDIA H100):

- Typical LLM queries: under 5% overhead

- Larger models and longer sequences: approaches zero overhead

- All performance counters disabled in confidential mode to prevent side-channel attacks

CPU-based TEEs (Intel TDX, AMD SEV-SNP):

- Throughput impact: under 10%

- Latency impact: around 20%

- Modern implementations show single-digit percentage differences for most workloads

Where Overhead Increases

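Multi-GPU training remains challenging. Data moving between GPUs requires encryption and decryption at each transfer. For training workloads spanning multiple GPUs, overhead can reach 768% on average, with worst cases exceeding 4000%. If overhead here means added run time, that is roughly 8.7x the baseline run time on average and more than 41x in the worst cases.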

The bottleneck isn’t computation. It’s data movement. When model weights swap between CPU and GPU memory, each transfer requires encryption. The CPU becomes the bottleneck, leaving GPUs idle.

Practical implication: Confidential AI works well for inference and single-GPU fine-tuning. Large-scale distributed training requires architectural optimization or acceptance of significant overhead.

Teams exploring data distillation can reduce model sizes significantly, making confidential AI deployment more practical for edge and resource-constrained environments.

Memory Considerations

TEEs have memory limits. Early Intel SGX implementations limited protected enclave memory to 128MB or 256MB, depending on the processor. Modern implementations support up to 512GB per socket. For large language models, memory planning matters.

Self-hosted LLM deployments should account for memory overhead when sizing infrastructure. Allocate 10-15% additional memory headroom for security operations.
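A quick sizing calculation shows what that headroom means in practice. The sketch assumes a hypothetical 70-billion-parameter model stored at 16 bits (2 bytes) per parameter and counts weights only; KV cache, activations, and runtime buffers would add to the total.

```python
def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weight size: 1e9 parameters at N bytes each is N GB."""
    return params_billion * bytes_per_param


def with_headroom(base_gb: float, headroom_pct: float) -> float:
    """Add a percentage of extra memory on top of the base requirement."""
    return base_gb * (1 + headroom_pct / 100)


base = weights_gb(70, 2)  # hypothetical 70B-parameter model at 16-bit
low, high = with_headroom(base, 10), with_headroom(base, 15)
print(f"{base:.0f} GB weights -> plan for {low:.0f} to {high:.0f} GB")
```

For this example, 140 GB of weights becomes a planning target of about 154 to 161 GB.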

What to Look For in a Confidential AI Platform

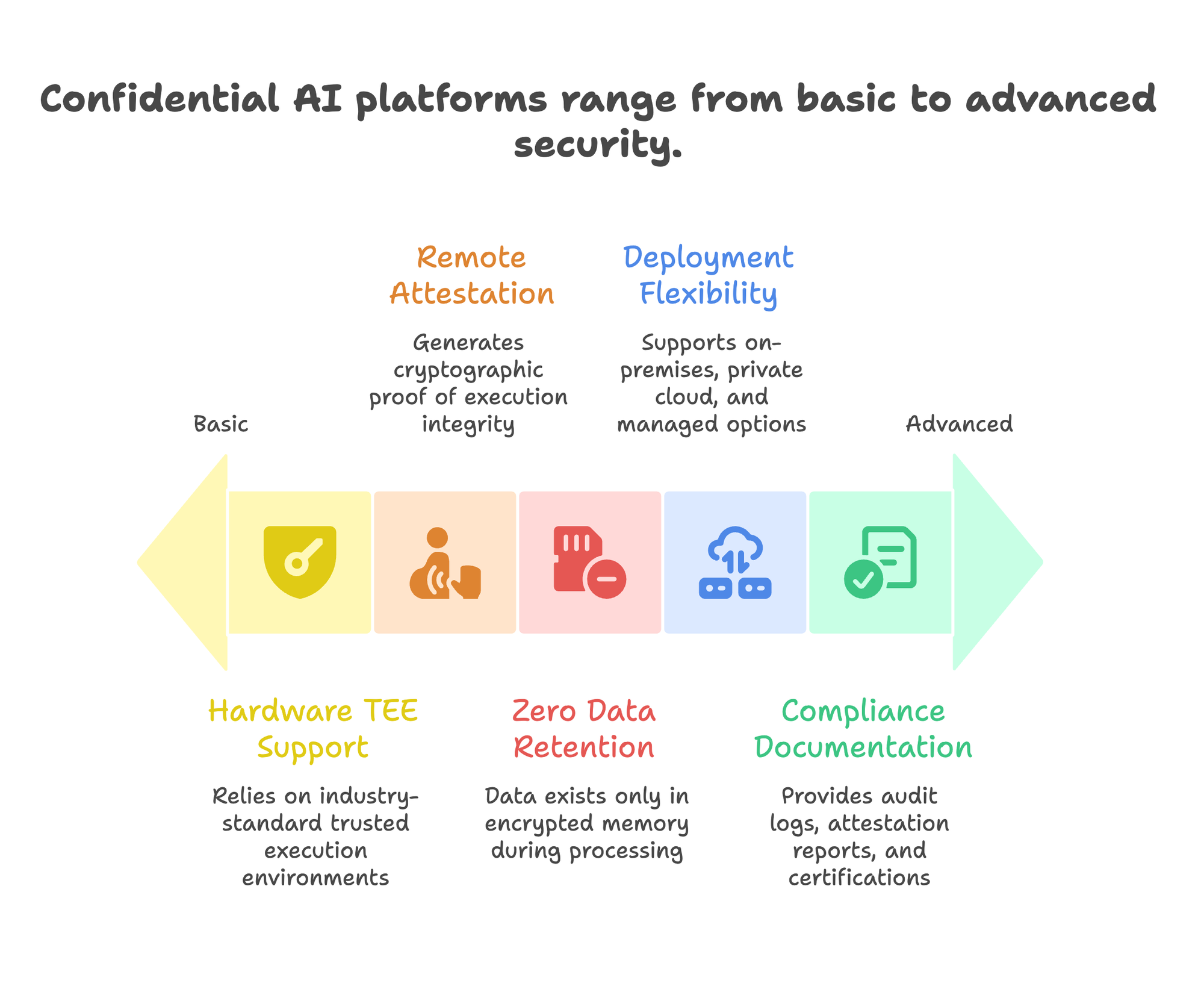

If you’re evaluating confidential AI solutions, these capabilities matter:

1/ Hardware TEE Support

The platform should support industry-standard TEEs: Intel TDX, AMD SEV-SNP, or NVIDIA H100 confidential computing. Proprietary isolation mechanisms lack the verification ecosystem that makes attestation meaningful.

2/ Remote Attestation

Every inference should generate cryptographic proof of execution environment integrity. Look for hardware-signed attestation, not just software assertions. The attestation chain should be independently verifiable.

3/ Zero Data Retention

Stateless architecture matters. Data should exist only in encrypted memory during processing, with nothing persisting after inference completes. This simplifies compliance and reduces breach impact.
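At the application level, a stateless handler might look like the sketch below. `ephemeral_buffer` and `handle_request` are hypothetical names, and the "model" is a placeholder. Python cannot guarantee that no other copies of the data exist in memory, so this shows the pattern only; the actual guarantee has to come from the TEE and the platform.

```python
from contextlib import contextmanager


@contextmanager
def ephemeral_buffer(data: bytes):
    """Hold request data in a mutable buffer and zero it when done."""
    buf = bytearray(data)
    try:
        yield buf
    finally:
        buf[:] = bytes(len(buf))  # overwrite in place, even if an error occurred


def handle_request(payload: bytes) -> int:
    """Process one request and keep nothing afterwards."""
    with ephemeral_buffer(payload) as buf:
        return sum(buf) % 256  # placeholder for real model inference


print(handle_request(b"abc"))
```

Nothing is written to disk or kept in module state, so each request leaves only its output behind.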

4/ Deployment Flexibility

Different workloads have different requirements. A platform should support on-premises deployment for highest sensitivity, private cloud for scalability, and managed options for teams without dedicated infrastructure expertise.

For organizations requiring complete network isolation, air-gapped AI solutions provide the ultimate protection layer.

5/ Compliance Documentation

GDPR, HIPAA, SOC 2, and ISO 27001 certifications demonstrate organizational commitment to security. But certifications alone aren’t enough. The platform should provide audit logs, attestation reports, and compliance documentation specific to confidential computing.

Prem’s architecture addresses these requirements with TEE integration, encrypted inference delivering under 8% overhead, and hardware-signed attestation for every interaction. Data exists only in encrypted memory during processing. Swiss jurisdiction adds legal protection to technical guarantees.

For teams comparing options, understanding how PremAI compares to Azure alternatives provides useful context for evaluating data sovereignty approaches.

Frequently Asked Questions

What’s the difference between confidential AI and private AI?

Private AI focuses on data custody and deployment location. Your data stays in your environment. Confidential AI adds hardware-based protection during processing. Data remains encrypted even while being computed on. Private AI is about where data lives. Confidential AI is about how it’s protected everywhere, including during use.

Does confidential AI work with all AI models?

Most modern LLMs run efficiently in confidential environments. The NVIDIA H100 supports confidential computing for GPU workloads with minimal overhead. CPU-based models work across Intel and AMD TEE implementations. The main constraint is memory: very large models may require careful infrastructure planning.

How much does confidential AI slow down inference?

For typical LLM inference on H100 GPUs, overhead is under 5%. CPU-based TEEs reduce throughput by under 10%. Multi-GPU training carries higher overhead due to encrypted data transfers. For most production inference workloads, the performance impact is negligible.

Can cloud providers access my data in a confidential AI environment?

No. That’s the point. Data remains encrypted in memory, with keys inaccessible to any software layer. Even with full administrative access to the underlying infrastructure, the cloud provider sees only encrypted data. Remote attestation provides cryptographic proof of this isolation.

Is confidential AI required for GDPR compliance?

Not explicitly required, but increasingly relevant. GDPR mandates “appropriate technical measures.” Confidential computing provides stronger technical guarantees than traditional encryption for data processing. As enforcement intensifies and fines accumulate, technical controls that exceed minimum requirements become strategically valuable.

What hardware is needed for confidential AI?

Modern Intel processors with TDX, AMD EPYC with SEV-SNP, or NVIDIA H100/H200 GPUs support confidential computing. Major cloud providers offer confidential VM instances. For on-premises deployment, ensure your hardware supports the appropriate TEE technology.

The Bottom Line

Traditional encryption protects data everywhere except where it’s most vulnerable: during processing.

For 70%+ of enterprise AI workloads involving sensitive data, that gap is indefensible. Regulations are tightening. Attack surfaces are expanding. The cost of breaches keeps climbing.

Confidential AI closes the gap with hardware-enforced isolation, encrypted memory, and cryptographic attestation. The technology has matured. Performance overhead is now single-digit percentages. Cloud providers and hardware vendors have aligned behind common standards.

The question isn’t whether confidential AI will become standard for sensitive workloads. It’s whether your organization adopts it before or after a breach forces the issue.

Explore how Prem’s confidential AI infrastructure protects enterprise data at every layer. Or book a demo to see encrypted inference in action.